805 KiB

🗣️ #258 - Quick-start Guide coming over from llama.cpp and ktransformers!

| Author | ubergarm |

|---|---|

| Created | 2025-03-14 |

| Updated | 2025-07-13 |

Description

ik_llama.cpp

Last Updated: Tue May 13 03:52:20 PM EDT 2025 (still needs more updates, can't keep up, check through comments below)

NEW: Two new custom quants great for CPU+GPU or CPU only inferencing fitting 32k+ context in under 24GB VRAM here on huggingface ubergarm/DeepSeek-V3-0324-GGUF! or start out with the quant you already have to kick the tires on ik_llama.cpp.

tl;dr;

ik_llama.cpp is a custom fork of llama.cpp introducing many interesting optimizations for MoE's like DeepSeek-R1 671B.

The new SOTA quant types can repack your existing GGUFs on the fly or you can roll your own to maximize quality and speed for your exact system VRAM and RAM availability.

I highly recommend you give ik_llama.cpp a try especially for CUDA+CPU or pure CPU inferencing. All the very similar ergonmics as vanilla llama-server that you already know and love.

- 64k context in under 24GB VRAM with over 15 tok/sec on a ThreadRipper Pro 24x core with 256GB RAM with single GPU.

- Gaming rig 9950X + 96GB RAM + 3090TI 24GB VRAM + NVMe for over 4 toks/sec!

- Fastest available implementation for DeepSeek-R1 671B on pure CPU dual socket Intel 6890P in my testing.

Install

# Install build dependencies and cuda toolkit as needed

# Clone

git clone https://github.com/ikawrakow/ik_llama.cpp

cd ik_llama.cpp

# Configure CUDA+CPU Backend

cmake -B ./build -DGGML_CUDA=ON -DGGML_BLAS=OFF

# *or* Configure CPU Only Backend

cmake -B ./build -DGGML_CUDA=OFF -DGGML_BLAS=OFF

# Build

cmake --build ./build --config Release -j $(nproc)

# Confirm

./build/bin/llama-server --version

version: 3597 (68a5b604)

Features

# Flash MLA & FlashMLA-2 & Flash Attention

# https://github.com/ikawrakow/ik_llama.cpp/pull/240

# https://github.com/ikawrakow/ik_llama.cpp/pull/253

# -fa, --flash-attn <0|1> (default: 0) # (for both CPU and CUDA)

# -mla, --mla-attn <0|1|2|3> (default: 0) # -mla 1 for CPU only, -mla 2 for both CPU and CUDA, -mla 3 for CPU only

# *NOTE*: for llama-bench use `-fa 1`

# *UPDATE*: you can use `-mla 3` now for CPU+GPU with new PR

# tl;dr; generally use -mla 2 for CPU+GPU and use -mla 3 for CPU assuming your model architecture supports MLA

-mla 2 -fa

## On-the-Fly MLA Tensors

# To run existing R1 671B quants that are missing MLA tensors *without* the need to roll your own

# https://github.com/ikawrakow/ik_llama.cpp/pull/259

# This means you can run your existing unsloth quants with full FlashMLA-2 support without downloading another quant!!!

# KV Cache Quantization

# https://github.com/ikawrakow/ik_llama.cpp/pull/208

# https://github.com/ikawrakow/ik_llama.cpp/pull/240#issue-2890555894

# -ctk, --cache-type-k TYPE KV cache data type for K (default: f16)

# -ctv, --cache-type-v TYPE KV cache data type for V (default: f16)

-ctk q8_0

# Re-Use K*Q tensor compute buffer specify size

# (for both CPU and CUDA)

# https://github.com/ikawrakow/ik_llama.cpp/pull/237

# (i = Size in MiB)

# -amb, --attn-max-batch <i> (default: 0)

-amb 512 # 512 MiB compute buffer is a good for DeepSeek-R1 671B on a single <24GB VRAM GPU

# Fused MoE

# (For CUDA and maybe CPU when not using computing an imatrix?)

# https://github.com/ikawrakow/ik_llama.cpp/pull/229

# -fmoe, --fused-moe <0|1> (default: 0)

# *NOTE*: for llama-bench use `-fmoe 1`

-fmoe

# Override Model Tensor Buffers

# (For CUDA or possibly RPC or other GPU backends)

# https://github.com/ikawrakow/ik_llama.cpp/pull/232

# -ot, --override-tensor pattern (default: none)

# *NOTE*: this now works with `mmap()` so run models too big for your RAM!

-ot exps=CPU -ngl 99 # put the MoE experts on CPU and the rest in GPU for max speed on lowish VRAM

# if you have multiple GPUs, this can get confusing, so take your time and start small and craft a regex for your setup

# Smart Expert Reduction

# https://github.com/ikawrakow/ik_llama.cpp/pull/239

# -ser, --smart-expert-reduction <i,f> (default: 0)

-ser 7,1 # or 6,1 or 5,1 for faster trading off quality for speed

# Run Time Repack

# Repack quants for improved performance for certain quants and hardware configs

# this disables mmap so need enough RAM to malloc all repacked quants (so pre-pack it yourself ahead of time with llama-quantize)

# (Optimize speed for repacked tensors on some CPUs - is good to use with hybrid GPU + CPU)

# https://github.com/ikawrakow/ik_llama.cpp/pull/147

# -rtr, --run-time-repack <0|1> (default: 0)

-rtr

# Offline Repacking Existing Quants

# Maximize quality, size, and speed

# Selecting quants for each tensor appropriate to your hybrid CPU/GPU configuration

# Remember repacked quants e.g. ending with `_R4` won't *run* on CUDA just sit there like expensive "RAM".

# https://github.com/ikawrakow/ik_llama.cpp/pull/274

# SoTA non-linear Quants with good CPU performance

# https://github.com/ikawrakow/ik_llama.cpp/pull/85

# ./bin/llama-quantize --help | grep non-linear

# Choose the repacked variants for CPU inferencing

# e.g. IQ2_K_R4 and friends for CPU tensors

# Supports both Explicit and Transparent Hugepages

# https://github.com/ikawrakow/ik_llama.cpp/pull/278#issuecomment-2746381515

# Pre-allocate Hugepages of 2MiB or 1GiB size to hold model weights

# or

# Configure system-wide THP support and confirm they are in use

Quick Start

Existing DeepSeek-R1 671B GGUF

Get 64k context with a single 24GB VRAM GPU using your existing unsloth quants like unsloth/DeepSeek-R1-UD-Q2-K_XL!

# CUDA GPU + CPU

# *NOTE*: This works on 68a5b604 but regression after that see GH ISSUE #271.

# *NOTE*: set --threads to number of physical cores

./build/bin/llama-server \

--alias unsloth/DeepSeek-R1-Q2_K_R4 \

--model /mnt/raid/models/unsloth/DeepSeek-R1-GGUF/DeepSeek-R1-UD-Q2_K_XL/DeepSeek-R1-UD-Q2_K_XL-00001-of-00005.gguf \

-rtr \

--ctx-size 65536 \

-ctk q8_0 \

-mla 2 -fa \

-amb 512 \

-fmoe \

--n-gpu-layers 63 \

--override-tensor exps=CPU \

--parallel 1 \

--threads 24 \

--host 127.0.0.1 \

--port 8080

.

.

.

llama_model_loader: - type f32: 361 tensors

llama_model_loader: - type q2_K: 171 tensors

llama_model_loader: - type q3_K: 3 tensors

llama_model_loader: - type q4_K: 306 tensors

llama_model_loader: - type q6_K: 184 tensors

.

.

.

llm_load_tensors: offloading 61 repeating layers to GPU

llm_load_tensors: offloading non-repeating layers to GPU

llm_load_tensors: offloaded 62/62 layers to GPU

llm_load_tensors: CPU buffer size = 205716.00 MiB

llm_load_tensors: CUDA_Host buffer size = 497.11 MiB

llm_load_tensors: CUDA0 buffer size = 9885.95 MiB

....................................................................................................

============ llm_load_tensors: need to compute 61 wk_b tensors

Computed blk.0.attn_v_b.weight as 128 x 512 x 128 and stored in buffer CUDA0

Computed blk.1.attn_v_b.weight as 128 x 512 x 128 and stored in buffer CUDA0

Computed blk.2.attn_v_b.weight as 128 x 512 x 128 and stored in buffer CUDA0

.

.

.

Computed blk.58.attn_v_b.weight as 128 x 512 x 128 and stored in buffer CUDA0

Computed blk.59.attn_v_b.weight as 128 x 512 x 128 and stored in buffer CUDA0

Computed blk.60.attn_v_b.weight as 128 x 512 x 128 and stored in buffer CUDA0

============ Repacked 174 tensors

llama_new_context_with_model: n_ctx = 65536

llama_new_context_with_model: n_batch = 2048

llama_new_context_with_model: n_ubatch = 512

llama_new_context_with_model: flash_attn = 1

llama_new_context_with_model: mla_attn = 2

llama_new_context_with_model: attn_max_b = 512

llama_new_context_with_model: fused_moe = 1

llama_new_context_with_model: ser = -1, 0

llama_new_context_with_model: freq_base = 10000.0

llama_new_context_with_model: freq_scale = 0.025

.

.

.

llama_kv_cache_init: CUDA0 KV buffer size = 2333.28 MiB

llama_new_context_with_model: KV self size = 2333.25 MiB, c^KV (q8_0): 2333.25 MiB, kv^T: not used

llama_new_context_with_model: CUDA_Host output buffer size = 0.99 MiB

llama_new_context_with_model: CUDA0 compute buffer size = 6081.00 MiB

llama_new_context_with_model: CUDA_Host compute buffer size = 240.01 MiB

llama_new_context_with_model: graph nodes = 13613

llama_new_context_with_model: graph splits = 118

.

.

.

INFO [ print_timings] prompt eval time = 2078.89 ms / 190 tokens ( 10.94 ms per token, 91.40 tokens per second) | tid="134221729001472" timestamp=1742422435 id_slot=0 id_task=753 t_prompt_processing=2078.885 n_prompt_tokens_processed=190 t_token=10.941500000000001 n_tokens_second=91.39514691769867

INFO [ print_timings] generation eval time = 107381.01 ms / 1557 runs ( 68.97 ms per token, 14.50 tokens per second) | tid="134221729001472" timestamp=1742422435 id_slot=0 id_task=753 t_token_generation=107381.013 n_decoded=1557 t_token=68.96661078998073 n_tokens_second=14.499770085052186

INFO [ print_timings] total time = 109459.90 ms | tid="134221729001472" timestamp=1742422435 id_slot=0 id_task=753 t_prompt_processing=2078.885 t_token_generation=107381.013 t_total=109459.898

Custom Quant

I rolled my own custom quant to improve quality while still fitting 32k context in under 24GB VRAM. No need to use -rtr as this quant is already repacked so you can still use mmap() allowing you to run on systems without enough RAM by paging the disk cache. This quant has lower perplexity than UD-Q2_K_XL while only being slightly larger/slower. Good size for 256GB RAM systems where Q4_K_M doesn't fit.

# CUDA GPU + CPU

./build/bin/llama-server \

--model /mnt/raid/models/ubergarm/DeepSeek-R1-GGUF/DeepSeek-R1-Q2_K_R4.gguf \

--alias ubergarm/DeepSeek-R1-Q2_K_R4 \

--ctx-size 32768 \

-ctk q8_0 \

-mla 2 -fa \

-amb 512 \

-fmoe \

--n-gpu-layers 63 \

--override-tensor exps=CPU \

--parallel 1 \

--threads 24 \

--host 127.0.0.1 \

--port 8080

.

.

.

llama_model_loader: - type f32: 361 tensors

llama_model_loader: - type q8_0: 612 tensors

llama_model_loader: - type q2_k_r4: 116 tensors

llama_model_loader: - type q3_k_r4: 58 tensors

.

.

.

llm_load_tensors: offloading 61 repeating layers to GPU

llm_load_tensors: offloading non-repeating layers to GPU

llm_load_tensors: offloaded 62/62 layers to GPU

llm_load_tensors: CPU buffer size = 225736.00 MiB

llm_load_tensors: CPU buffer size = 938.98 MiB

llm_load_tensors: CUDA0 buffer size = 17744.02 MiB

....................................................................................................

llama_new_context_with_model: n_ctx = 32768

llama_new_context_with_model: n_batch = 2048

llama_new_context_with_model: n_ubatch = 512

llama_new_context_with_model: flash_attn = 1

llama_new_context_with_model: mla_attn = 2

llama_new_context_with_model: attn_max_b = 512

llama_new_context_with_model: fused_moe = 1

llama_new_context_with_model: ser = -1, 0

llama_new_context_with_model: freq_base = 10000.0

llama_new_context_with_model: freq_scale = 0.025

.

.

.

llama_kv_cache_init: CUDA0 KV buffer size = 1166.65 MiB

llama_new_context_with_model: KV self size = 1166.62 MiB, c^KV (q8_0): 1166.62 MiB, kv^T: not used

llama_new_context_with_model: CUDA_Host output buffer size = 0.99 MiB

llama_new_context_with_model: CUDA0 compute buffer size = 3425.00 MiB

llama_new_context_with_model: CUDA_Host compute buffer size = 176.01 MiB

llama_new_context_with_model: graph nodes = 8245

llama_new_context_with_model: graph splits = 118

# CPU-only Example

# Configure BIOS for most RAM bandwidth in single NUMA node e.g.

# * AMD Epyc to NPS1 (or experiment with NPS0 on dual socket system)

# * Intel Xeon to SNC=Disable (no equivilent of NPS0 afaict)

# TODO: mention Explicit Huge Pages configuration and other Linux OS performance tweaks

$ numactl -N 0 -m 0 \

./build/bin/llama-server \

--alias repack/DeepSeek-R1-Q4_K_R4 \

--model /mnt/ai/models/unsloth/repack/DeepSeek-R1-Q4_K_R4.gguf \

--ctx-size 32768 \

-ctk q8_0 \

-mla 3 -fa \

-amb 1024 \

-fmoe \

--parallel 1 \

--threads 128 \

--numa numactl \

--host 127.0.0.1 \

--port 8080

Custom Quants

👇

Click here for how to make your own custom quants including repacking

# > The MLA attention tensors don't seem to quantize well at all and they are using 4bit for these, plus last time I checked they were only using 6 experts instead of 8.

# > I've got a custom llama.cpp quant with BF16 for all the _a and _b low-rank MLA attention tensors, Q6_K / Q5_K for all non-shared expert down_proj and up_proj/gate_proj respectively, and Q8_0 for everything else, and the story generation ability is on par with the official deepseek served models (and a lot better than many of the non-official versions being served on openrouter!).

# > Just changing the _b tensors for Q8_0 (and keeping everything else the same as above) starts to have really obvious negative effects on story generation, and using Q4_K or Q4_0 is severely degraded in comparison. I haven't rested this yet with the modified version of the MLA PR where I converted all the 3D batch matrix multiples to 2D though (this seemed to be a cause of some numerical problems too and might be the same reason for this). - jukofyork

# https://github.com/ikawrakow/ik_llama.cpp/pull/239#issuecomment-2708800842

# TODO: Show how to pack quants for speed and accuracy to fit into desired RAM size

# 0. Skip this and download an existing MLA supported quant e.g.

#https://huggingface.co/gghfez/DeepSeek-R1-11446-Q4_K

#https://huggingface.co/daydream-org/DeepSeek-R1-GGUF-11446/tree/main/DeepSeek-R1-Q3_K_M

#https://huggingface.co/gghfez/DeepSeek-R1-11446-Q2_K

# 1. Download original fp8 to target dir

uv venv ./venv --python 3.12 --python-preference=only-managed

source ./venv/bin/activate

uv pip install huggingface-hub hf_transfer huggingface-cli

HF_HUB_ENABLE_HF_TRANSFER=1 \

huggingface-cli \

download \

--resume-download \

--local-dir ./ \

deepseek-ai/DeepSeek-R1

# 2. Convert original fp8 to bf16

## Option A:

# Official DeepSeek pytorch implementation to convert fp8 to bf16 (may require newer/big GPU?):

# https://github.com/deepseek-ai/DeepSeek-V3/blob/main/inference/fp8_cast_bf16.py

# Then convert the output bf16 .safetensors to ~50GB splits GGUF format...

## Option B:

# Unofficial Triton CPU implementation (Converts fp8 safetensors directly to bf16 llama.cpp GGUF format):

# https://huggingface.co/daydream-org/DeepSeek-R1-GGUF-11446/discussions/1#67a327570051a98a96ded9e6

# Using Unofficial Instructions here:

mkdir fp8-to-bf16

cd fp8-to-bf16

uv venv ./venv --python 3.12 --python-preference=only-managed

source venv/bin/activate

uv pip install huggingface-cli

git clone https://github.com/evshiron/llama.cpp --recursive

cd llama.cpp

uv pip install -r requirements/requirements-convert_hf_to_gguf.txt --prerelease=allow --index-strategy unsafe-best-match

cmake -B build

cmake --build build --config Release -j$(nproc)

cd ..

git clone https://github.com/triton-lang/triton-cpu --recursive

cd triton-cpu

# apply saood06's patch https://github.com/ikawrakow/ik_llama.cpp/issues/383#issuecomment-2865306085

uv pip install ninja cmake wheel setuptools pybind11

MAX_JOBS=32 uv pip install -e python --no-build-isolation

# Be patient, "Preparing Packages" downloads a lot of stuff before build begins...

cd ..

# This outputs the <=~50GB gguf splits in the same directory as the original fp8 .safetensors

# you can use --output to specify a dir if you don't have enough space on the disk etc...

# Seems to use less than ~40GB RAM and as much extra RAM as disk cache as available.

# Does *not* use any GPU. A lot of disk i/o is nice to speed up reading/writing too.

# Only seems to use a single CPU thread most of the time.

# Getting just over 700Mbyte/s running on Thread Ripper Pro.

# Requires around 1.4TB of free space to hold the output files.

# Takes just over 30 minute at this speed.

python \

llama.cpp/convert_hf_to_gguf.py \

--outtype bf16 \

--split-max-size 50G \

path-to/fp8-safetensor-checkpoints/DeepSeek-R1

# Then mv *.gguf into its own directory as well as copy *.py and *.json

# 3. Convert bf16 to Custom MLA repacked quant to fit into your system RAM

# https://github.com/ikawrakow/ik_llama.cpp/pull/244

# importance matrix discussion: https://github.com/ikawrakow/ik_llama.cpp/pull/250

# example command: https://github.com/ikawrakow/ik_llama.cpp/pull/239#issuecomment-2708537218

# 3.5 Compute or download valid imatrix data file (good for <= ~Q4 quants or so)

# You can download either of these optional imatrix data if making smaller quants <= Q4ish

# but probably only for DeepSeek-R1 671B. For other models probably roll your own like so:

# (you might need like 1.5TB RAM to do this with bf16 model, but is easier to

# make q8_0_r8 quant first, and use that to generate the imatrix.dat with *only* ~715G RAM)

# https://github.com/ikawrakow/ik_llama.cpp/blob/main/examples/imatrix/README.md

# https://github.com/ggml-org/llama.cpp/discussions/5263

# https://gist.github.com/tristandruyen/9e207a95c7d75ddf37525d353e00659c

cd ik_llama.cpp

wget https://gist.githubusercontent.com/tristandruyen/9e207a95c7d75ddf37525d353e00659c/raw/571fda718462de863e5a0171078c175420c7649a/calibration_data_v5_rc.txt

numactl -N 0 -m 0 \

./build/bin/llama-imatrix \

--verbosity 1 \

-m /mnt/ai/models/ubergarm/DeepSeek-V3-0324-GGUF/DeepSeek-V3-0324-Q8_0_R8.gguf \

-f calibration_data_v5_rc.txt \

-o imatrix-DeepSeek-V3-0324.dat \

--ctx-size 512 \

--numa numactl \

--threads 128

# Download either of these optional imatrix data files specific to R1. or roll your own like above

# wget https://huggingface.co/bartowski/DeepSeek-R1-GGUF/resolve/main/DeepSeek-R1.imatrix -O imatrix-bartowski-DeepSeek-R1.dat

# wget https://huggingface.co/mradermacher/DeepSeek-R1-i1-GGUF/resolve/main/imatrix.dat -O imatrix-mradermacher-DeepSeek-R1.dat

# UPDATE: I don't recommend using these as only recent PR fixes MLA imatrix

# https://github.com/ikawrakow/ik_llama.cpp/pull/411

# Test

cd ik_llama.cpp

source venv/bin/activate

# ./build/bin/llama-quantize --help

# 138 or IQ2_K : 2.375 bpw non-linear quantization

# 338 or IQ2_K_R4 : IQ2_K repacked

# https://github.com/ikawrakow/ik_llama.cpp/discussions/242#discussioncomment-12489932

./build/bin/llama-quantize \

--imatrix /mnt/raid/models/deepseek-ai/DeepSeek-R1-bf16-GGUF/imatrix-bartowski-DeepSeek-R1.dat \

/mnt/raid/models/deepseek-ai/DeepSeek-R1-bf16-GGUF/DeepSeek-R1-256x21B-BF16-00001-of-00030.gguf \

/mnt/raid/models/ubergarm/DeepSeek-R1-GGUF/DeepSeek-R1-IQ2_K_R4.gguf \

IQ2_K_R4 \

$(nproc)

# Advanced Quants

# https://github.com/ikawrakow/ik_llama.cpp/discussions/242#discussioncomment-12452986

# https://github.com/ikawrakow/ik_llama.cpp/pull/239#issuecomment-2709032571

# Ignore these Notes

# BF16 for all the _a and _b low-rank MLA attention tensors

# Q6_K / Q5_K for all non-shared expert down_proj and up_proj/gate_proj respectively

# and Q8_0 for everything else

# Just changing the _b tensors for Q8_0 (and keeping everything else the same as above) negative effects

# https://github.com/ikawrakow/ik_llama.cpp/pull/239#issuecomment-2708800842

# might not need bf16, possibly numerican instability...

# llama_model_loader: - type f32: 361 tensors

# llama_model_loader: - type q8_0: 246 tensors

# llama_model_loader: - type q5_K: 116 tensors

# llama_model_loader: - type q6_K: 58 tensors

# llama_model_loader: - type bf16: 488 tensors

# print_info: file format = GGUF V3 (latest)

# print_info: file type = Q5_K - Medium

# print_info: file size = 467.54 GiB (5.98 BPW)

# Create a script:

#!/usr/bin/env bash 14:45:57 [43/1765]

custom="

# Token embedding and output tensors

token_embd\.weight=q8_0

output\.weight=q8_0

output_norm\.weight=q8_0

# First 3 dense layers (GPU0)

blk\.[0-2]\..*=q8_0

# Layers 3-4 (CPU) - MoE experts

blk\.[3-4]\.ffn_down_exps\.weight=q3_k_r4

blk\.[3-4]\.ffn_gate_exps\.weight=q2_k_r4

blk\.[3-4]\.ffn_up_exps\.weight=q2_k_r4

# Layers 5-11 (CPU) - MoE experts

blk\.[5-9]\.ffn_down_exps\.weight=q3_k_r4

blk\.[5-9]\.ffn_gate_exps\.weight=q2_k_r4

blk\.[5-9]\.ffn_up_exps\.weight=q2_k_r4

blk\.1[0-1]\.ffn_down_exps\.weight=q3_k_r4

blk\.1[0-1]\.ffn_gate_exps\.weight=q2_k_r4

blk\.1[0-1]\.ffn_up_exps\.weight=q2_k_r4

# Layers 12-18 (CPU) - MoE experts

blk\.1[2-8]\.ffn_down_exps\.weight=q3_k_r4

blk\.1[2-8]\.ffn_gate_exps\.weight=q2_k_r4

blk\.1[2-8]\.ffn_up_exps\.weight=q2_k_r4

# Layers 19-60 (CPU) - MoE experts

blk\.19\.ffn_down_exps\.weight=q3_k_r4

blk\.19\.ffn_gate_exps\.weight=q2_k_r4

blk\.19\.ffn_up_exps\.weight=q2_k_r4

blk\.[2-5][0-9]\.ffn_down_exps\.weight=q3_k_r4

blk\.[2-5][0-9]\.ffn_gate_exps\.weight=q2_k_r4

blk\.[2-5][0-9]\.ffn_up_exps\.weight=q2_k_r4

blk\.60\.ffn_down_exps\.weight=q3_k_r4

blk\.60\.ffn_gate_exps\.weight=q2_k_r4

blk\.60\.ffn_up_exps\.weight=q2_k_r4

# All attention tensors for MoE layers (3-60)

blk\.[3-9]\.attn_.*=q8_0

blk\.[1-5][0-9]\.attn_.*=q8_0

blk\.60\.attn_.*=q8_0

# Norm weights and bias for MoE layers (3-60)

blk\.[3-9]\.ffn_norm\.weight=q8_0

blk\.[1-5][0-9]\.ffn_norm\.weight=q8_0

blk\.60\.ffn_norm\.weight=q8_0

blk\.[3-9]\.exp_probs_b\.bias=q8_0

blk\.[1-5][0-9]\.exp_probs_b\.bias=q8_0

blk\.60\.exp_probs_b\.bias=q8_0

# Shared experts weights for MoE layers (3-60)

blk\.3\.ffn_.*shexp\.weight=q8_0

blk\.[4-9]\.ffn_.*shexp\.weight=q8_0

blk\.[1-5][0-9]\.ffn_.*shexp\.weight=q8_0

blk\.60\.ffn_.*shexp\.weight=q8_0

"

custom=$(

echo "$custom" | grep -v '^#' | \

sed -Ez 's:\n+:,:g;s:,$::;s:^,::'

)

./build/bin/llama-quantize \

--imatrix /mnt/raid/models/deepseek-ai/DeepSeek-R1-bf16-GGUF/imatrix-bartowski-DeepSeek-R1.dat \

--token-embedding-type q8_0 \

--output-tensor-type q8_0 \

--custom-q "$custom" \

/mnt/raid/models/deepseek-ai/DeepSeek-R1-bf16-GGUF/DeepSeek-R1-256x21B-BF16-00001-of-00030.gguf \

/mnt/raid/models/ubergarm/DeepSeek-R1-GGUF/DeepSeek-R1-GGUF-Q2_K_R4.gguf \

Q2_K_R4 \

$(nproc)

# I actually only ever tried half of $(nproc)

# not sure what most optimal speed will come from regarding CPU cores/threads / SMT etc...

# It has taken 40 minutes to 3.2 hours or so depending on exact quants used IQ's seem slow, q2_k_r4 is fast to pack

# TODO: There is no --dry-run but would be nice to have a way to predict final sizes before running?

☝️

Benchmarking

Test Rig

- AMD Ryzen Threadripper PRO 7965WX 24-Cores

- 256GB RAM (8x 32GB KF560R32-32 DDR5-6000 running at JEDEC 4800MHz psure)

- ~225GB/s

mlcmemory read bandwidth - RTX A6000 48GB VRAM

Linux TR24 6.13.0-061300-generic #202501302155 SMP PREEMPT_DYNAMIC Sat Feb 8 09:06:55 UTC 2025 x86_64 x86_64 x86_64 GNU/Linux- BIOS = NPS1 single NUMA node

llama-bench

Note ik_llama.cpp llama-bench doesn't seem to iterate over all variables so fix these manually for test cases:

-fmoe 0,1-rtr 0,1-otprobably, i didn't test this specifically as always usingexps=CPUfor this rig...

It does seem to iterate over variables for fa, mla, and amb.

# *NOTE*: this test was using `ik/prepare_wk_b` branch to support MLA on existing unsloth quants!

# *NOTE*: newer versions actually support `-ctk q8_0 -mla 2` etc.

# *NOTE*: -rtr 1 was only used with unsloth quant as the custom quant is pre-packed

./build/bin/llama-bench \

--model /mnt/raid/models/unsloth/DeepSeek-R1-GGUF/DeepSeek-R1-UD-Q2_K_XL/DeepSeek-R1-UD-Q2_K_XL-00001-of-00005.gguf \

-ctk q8_0 -ctv q8_0 \

-mla 2 -fa 1 \

-amb 2048 \

-fmoe 1 \

-rtr 1 \

--n-gpu-layers 63 \

--override-tensor exps=CPU \

--threads 24

build: f2fb15de (3596)

ggml_cuda_init: GGML_CUDA_FORCE_MMQ: no

ggml_cuda_init: GGML_CUDA_FORCE_CUBLAS: no

ggml_cuda_init: found 1 CUDA devices:

Device 0: NVIDIA RTX A6000, compute capability 8.6, VMM: yes

Computed blk.0.attn_v_b.weight as 128 x 512 x 128 and stored in buffer CUDA0

Computed blk.1.attn_v_b.weight as 128 x 512 x 128 and stored in buffer CUDA0

Computed blk.2.attn_v_b.weight as 128 x 512 x 128 and stored in buffer CUDA0

Computed blk.3.attn_v_b.weight as 128 x 512 x 128 and stored in buffer CUDA0

.

.

.

Computed blk.58.attn_v_b.weight as 128 x 512 x 128 and stored in buffer CUDA0

Computed blk.59.attn_v_b.weight as 128 x 512 x 128 and stored in buffer CUDA0

Computed blk.60.attn_v_b.weight as 128 x 512 x 128 and stored in buffer CUDA0

============ Repacked 174 tensors

| model | size | params | backend | ngl | type_k | type_v | fa | mla | amb | rtr | fmoe | test | t/s |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| DS-R1 671B unsloth UD-Q2_K_XL | 211.03 GiB | 671.03 B | CUDA | 63 | q8_0 | q8_0 | 1 | 2 | 2048 | 0 | 1 | pp512 | 69.85 ± 1.67 |

| DS-R1 671B unsloth UD-Q2_K_XL | 211.03 GiB | 671.03 B | CUDA | 63 | q8_0 | q8_0 | 1 | 2 | 2048 | 0 | 1 | tg128 | 7.35 ± 0.01 |

| DS-R1 671B unsloth UD-Q2_K_XL | 211.03 GiB | 671.03 B | CUDA | 63 | q8_0 | q8_0 | 1 | 2 | 2048 | 1 | 1 | pp512 | 110.79 ± 5.60 |

| DS-R1 671B unsloth UD-Q2_K_XL | 211.03 GiB | 671.03 B | CUDA | 63 | q8_0 | q8_0 | 1 | 2 | 2048 | 1 | 1 | tg128 | 13.13 ± 0.07 |

| DS-R1 671B unsloth UD-Q2_K_XL | 211.03 GiB | 671.03 B | CUDA | 63 | f16 | f16 | 1 | 2 | 2048 | 1 | 1 | pp512 | 114.56 ± 1.75 |

| DS-R1 671B unsloth UD-Q2_K_XL | 211.03 GiB | 671.03 B | CUDA | 63 | f16 | f16 | 1 | 2 | 2048 | 1 | 1 | tg128 | 13.68 ± 0.07 |

| DS-R1 671B ubergarm IQ2_XS_R4 | 213.11 GiB | 672.05 B | CUDA | 63 | q8_0 | q8_0 | 1 | 2 | 2048 | 0 | 1 | pp512 | 65.31 ± 1.52 |

| DS-R1 671B ubergarm IQ2_XS_R4 | 213.11 GiB | 672.05 B | CUDA | 63 | q8_0 | q8_0 | 1 | 2 | 2048 | 0 | 1 | tg128 | 10.48 ± 0.01 |

| DS-R1 671B ubergarm Q2_K_R4 | 238.69 GiB | 672.05 B | CUDA | 63 | f16 | f16 | 1 | 2 | 2048 | 0 | 1 | pp512 | 111.89 ± 2.68 |

| DS-R1 671B ubergarm Q2_K_R4 | 238.69 GiB | 672.05 B | CUDA | 63 | f16 | f16 | 1 | 2 | 2048 | 0 | 1 | tg128 | 11.55 ± 0.04 |

| DS-R1 671B ubergarm Q2_K_R4 | 238.69 GiB | 672.05 B | CUDA | 63 | q8_0 | q8_0 | 1 | 2 | 2048 | 0 | 1 | pp512 | 109.06 ± 2.86 |

| DS-R1 671B ubergarm Q2_K_R4 | 238.69 GiB | 672.05 B | CUDA | 63 | q8_0 | q8_0 | 1 | 2 | 2048 | 0 | 1 | tg128 | 11.10 ± 0.01 |

Perplexity

# Test your quant against known quants

# Lower is Better

# https://github.com/ikawrakow/ik_llama.cpp/pull/239#issuecomment-2701019253

# example command: https://github.com/ikawrakow/ik_llama.cpp/pull/239#issuecomment-2708537247

wget https://github.com/user-attachments/files/19090237/wiki.test.raw.gz

gunzip wiki.test.raw.gz

# this can takes an hour or more for full run

# but only really need first ~25 points or so

# also some quants give nan results even on vanilla llama.cpp

# *NOTE* I don't think `-ctk q8_0 -ctv q8_0` are valid with `-mla 2 -fa` yet so take this with a grain of salt.

CUDA_VISIBLE_DEVICES="0," \

./build/bin/llama-perplexity \

--model /mnt/raid/models/ubergarm/DeepSeek-R1-GGUF/DeepSeek-R1-IQ2_XS_R4.gguf \

-ctk q8_0 \

-mla 2 -fa \

-amb 512 \

-fmoe \

--ctx-size 512 \

--ubatch-size 512 \

-f wiki.test.raw \

--n-gpu-layers 63 \

--override-tensor exps=CPU \

--threads 24

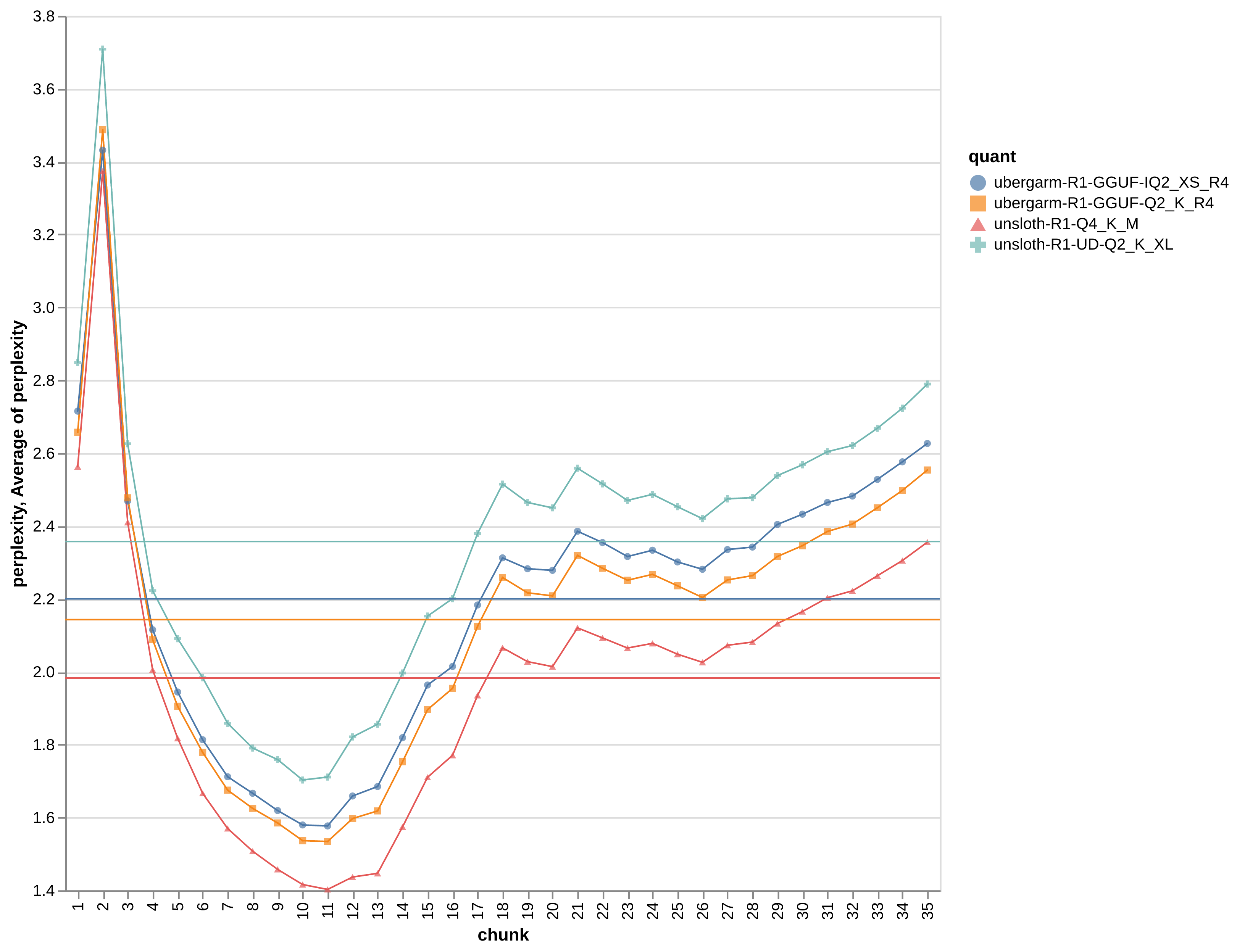

Even more perplexity logs

There is a lot going on here. There may be some issues with nan and "numerical instability" depending on exact quants and llama.cpp forks in use. So this is still evolving.

I made the above png graph using the first 35 chunks for easy comparison as generally nan didn't appear too early for most quants.

I also haven't compared perplexity across ik_llama.cpp with different settings (e.g. mla etc) vs vanilla llama.cpp and CPU vs CUDA backends etc.

The following exact detailed logs results are not included yet in the graph above.

Q8_0

I ran the unsloth Q8_0 on that intel6980P CPU only backend with vanilla llama.cpp/main@b1b132ef for a baseline. Note there is no MLA etc yet in this case.

numactl -N 0 -m 0 \

./build/bin/llama-perplexity \

--model /mnt/ai/models/unsloth/DeepSeek-R1-GGUF/DeepSeek-R1-Q8_0/DeepSeek-R1.Q8_0-00001-of-00015.gguf \

-ctk f16 -ctv f16 \

--ctx-size 512 \

--ubatch-size 512 \

-f wiki.test.raw \

--numa numactl \

--threads 80

perplexity: tokenizing the input ..

perplexity: tokenization took 724.131 ms

perplexity: calculating perplexity over 561 chunks, n_ctx=512, batch_size=2048, n_seq=4

perplexity: 60.35 seconds per pass - ETA 2 hours 21.05 minutes

[1]2.5013,[2]3.2882,[3]2.3700,[4]1.9826,[5]1.7891,[6]1.6469,[7]1.5544,[8]1.4883,[9]1.4387,[10]1.3997,[11]1.3842,[12]1.4194,[13]1.4299,[14]1.5576,[15]1.6890,[16]1.7483,[17]1.9110,[18]2.0408,[19]2.0033,[20]1.9911,[21]2.0982,[22]2.0702,[23]2.0430,[24]2.0560,[25]2.0267,[26]2.0035,[27]2.0524,[28]2.0598,[29]2.1085,[30]2.1396,[31]2.1742,[32]2.1918,[33]2.2304,[34]2.2706,[35]2.3192,[36]2.3717,[37]2.4071,[38]2.4526,[39]2.4940,[40]2.5527,[41]2.5950,[42]2.6072,[43]2.6559,[44]2.6723,[45]2.7517,[46]2.8023,[47]2.7573,[48]2.7107,[49]2.6842,[50]2.7039,[51]2.7504,[52]2.7650,[53]2.8143,[54]2.8275,[55]2.8585,[56]2.8898,[57]2.9036,[58]2.9402,[59]2.9512,[60]2.9968,[61]3.0366,[62]3.0894,[63]3.1213,[64]3.1652,[65]3.1751,[66]3.1579,[67]3.1353,[68]3.1665,[69]3.1618,[70]3.1771,[71]3.1956,[72]3.2115,[73]3.2259,[74]3.2494,[75]3.2284,[76]3.1816,[77]3.1389,[78]3.1344,[79]3.1122,[80]3.0929,[81]3.0561,[82]3.0596,[83]3.0282,[84]2.9923,[85]2.9572,[86]2.9321,[87]2.9257,[88]2.8971,[89]2.8805,[90]2.8542,[91]2.8245,[92]2.7997,[93]2.7731,[94]2.7463,[95]2.7224,[96]2.7210,[97]2.7283,[98]2.7132,[99]2.6960,[100]2.6985,[101]2.6899,[102]2.7065,[103]2.7327,[104]2.7513,[105]2.7482,[106]2.7706,[107]2.7948,[108]2.8154,[109]2.8493,[110]2.8832,[111]2.9028,[112]2.8771,[113]2.8641,[114]2.8419,[115]2.8266,[116]2.8114,[117]2.7885,[118]2.7677,[119]2.7465,[120]2.7277,[121]2.7122,[122]2.6947,[123]2.6785,[124]2.6597,[125]2.6422,[126]2.6257,[127]2.6117,[128]2.6027,[129]2.5920,[130]2.5797,[131]2.5724,[132]2.5798,[133]2.5894,[134]2.5959,[135]2.6064,[136]2.6225,[137]2.6379,[138]2.6461,[139]2.6576,[140]2.6586,[141]2.6603,[142]2.6594,[143]2.6599,[144]2.6569,[145]2.6481,[146]2.6467,[147]2.6512,[148]2.6510,[149]2.6527,[150]2.6476,[151]2.6458,[152]2.6429,[153]2.6392,[154]2.6399,[155]2.6443,[156]2.6465,[157]2.6527,[158]2.6615,[159]2.6634,[160]2.6723,[161]2.6806,[162]2.6900,[163]2.6941,[164]2.7141,[165]2.7378,[166]2.7551,[167]2.7673,[168]2.7915,[169]2.8139,[170]2.8354,[171]2.8586,[172]2.8427,[173]2.8264,[174]2.8128,[175]2.7995,[176]2.7872,[177]2.7756,[178]2.7630,[179]2.7493,[180]2.7532,[181]2.7671,[182]2.7822,[183]2.7970,[184]2.8112,[185]2.8216,[186]2.8381,[187]2.8534,[188]2.8675,[189]2.8782,[190]2.8785,[191]2.8859,[192]2.8899,[193]2.8950,[194]2.9146,[195]2.9234,[196]2.9368,[197]2.9468,[198]2.9513,[199]2.9570,[200]2.9566,[201]2.9717,[202]2.9671,[203]2.9724,[204]2.9760,[205]2.9759,[206]2.9785,[207]2.9874,[208]2.9970,[209]3.0063,[210]3.0069,[211]3.0022,[212]3.0021,[213]3.0097,[214]3.0116,[215]3.0174,[216]3.0180,[217]3.0140,[218]3.0142,[219]3.0152,[220]3.0146,[221]3.0148,[222]3.0149,[223]3.0155,[224]3.0205,[225]3.0224,[226]3.0144,[227]3.0122,[228]3.0145,[229]3.0191,[230]3.0256,[231]3.0318,[232]3.0236,[233]3.0158,[234]3.0158,[235]3.0142,[236]3.0230,[237]3.0315,[238]3.0410,[239]3.0508,[240]3.0601,[241]3.0713,[242]3.0857,[243]3.0992,[244]3.1073,[245]3.1183,[246]3.1288,[247]3.1276,[248]3.1235,[249]3.1216,[250]3.1154,[251]3.1133,[252]3.1158,[253]3.1196,[254]3.1267,[255]3.1331,[256]3.1369,[257]3.1393,[258]3.1405,[259]3.1438,[260]3.1459,[261]3.1473,[262]3.1465,[263]3.1522,[264]3.1545,[265]3.1550,[266]3.1568,[267]3.1597,[268]3.1634,[269]3.1665,[270]3.1659,[271]3.1644,[272]3.1577,[273]3.1576,[274]3.1507,[275]3.1399,[276]3.1291,[277]3.1308,[278]3.1410,[279]3.1472,[280]3.1551,[281]3.1625,[282]3.1687,[283]3.1751,[284]3.1818,[285]3.1954,[286]3.1979,[287]3.2013,[288]3.2060,[289]3.2087,[290]3.2005,[291]3.1911,[292]3.1892,[293]3.1883,[294]3.1855,[295]3.1829,[296]3.1848,[297]3.1853,[298]3.1902,[299]3.1961,[300]3.1992,[301]3.2030,[302]3.2052,[303]3.2072,[304]3.2067,[305]3.2186,[306]3.2261,[307]3.2370,[308]3.2258,[309]3.2204,[310]3.2109,[311]3.2145,[312]3.2167,[313]3.2230,[314]3.2251,[315]3.2283,[316]3.2297,[317]3.2315,[318]3.2321,[319]3.2324,[320]3.2367,[321]3.2370,[322]3.2390,[323]3.2454,[324]3.2463,[325]3.2516,[326]3.2563,[327]3.2604,[328]3.2634,[329]3.2652,[330]3.2715,[331]3.2752,[332]3.2800,[333]3.2786,[334]3.2787,[335]3.2792,[336]3.2794,[337]3.2805,[338]3.2808,[339]3.2835,[340]3.2871,[341]3.2925,[342]3.3015,[343]3.3108,[344]3.3161,[345]3.3074,[346]3.2997,[347]3.2945,[348]3.2872,[349]3.2835,[350]3.2817,[351]3.2864,[352]3.3013,[353]3.3104,[354]3.3232,[355]3.3318,[356]3.3371,[357]3.3487,[358]3.3583,[359]3.3615,[360]3.3680,[361]3.3772,[362]3.3858,[363]3.3915,[364]3.3981,[365]3.4044,[366]3.4148,[367]3.4234,[368]3.4301,[369]3.4380,[370]3.4465,[371]3.4602,[372]3.4689,[373]3.4722,[374]3.4758,[375]3.4808,[376]3.4936,[377]3.5048,[378]3.5075,[379]3.5069,[380]3.5037,[381]3.5083,[382]3.5139,[383]3.5175,[384]3.5218,[385]3.5257,[386]3.5319,[387]3.5377,[388]3.5411,[389]3.5308,[390]3.5213,[391]3.5107,[392]3.5051,[393]3.4955,[394]3.4865,[395]3.4772,[396]3.4672,[397]3.4584,[398]3.4488,[399]3.4385,[400]3.4296,[401]3.4196,[402]3.4093,[403]3.4007,[404]3.3905,[405]3.3811,[406]3.3711,[407]3.3619,[408]3.3531,[409]3.3446,[410]3.3386,[411]3.3392,[412]3.3345,[413]3.3363,[414]3.3385,[415]3.3353,[416]3.3351,[417]3.3375,[418]3.3317,[419]3.3332,[420]3.3308,[421]3.3298,[422]3.3312,[423]3.3304,[424]3.3346,[425]3.3341,[426]3.3346,[427]3.3335,[428]3.3360,[429]3.3378,[430]3.3406,[431]3.3413,[432]3.3403,[433]3.3366,[434]3.3366,[435]3.3289,[436]3.3226,[437]3.3185,[438]3.3167,[439]3.3134,[440]3.3183,[441]3.3237,[442]3.3311,[443]3.3293,[444]3.3302,[445]3.3315,[446]3.3363,[447]3.3396,[448]3.3421,[449]3.3452,[450]3.3490,[451]3.3520,[452]3.3540,[453]3.3557,[454]3.3543,[455]3.3564,[456]3.3567,[457]3.3594,[458]3.3646,[459]3.3653,[460]3.3654,[461]3.3622,[462]3.3659,[463]3.3732,[464]3.3785,[465]3.3714,[466]3.3696,[467]3.3677,[468]3.3688,[469]3.3658,[470]3.3631,[471]3.3634,[472]3.3640,[473]3.3632,[474]3.3624,[475]3.3635,[476]3.3619,[477]3.3610,[478]3.3617,[479]3.3633,[480]3.3660,[481]3.3620,[482]3.3654,[483]3.3646,[484]3.3682,[485]3.3746,[486]3.3775,[487]3.3812,[488]3.3864,[489]3.3889,[490]3.3935,[491]3.3997,[492]3.4042,[493]3.4040,[494]3.4052,[495]3.4076,[496]3.4095,[497]3.4124,[498]3.4127,[499]3.4122,[500]3.4163,[501]3.4209,[502]3.4200,[503]3.4185,[504]3.4205,[505]3.4239,[506]3.4323,[507]3.4350,[508]3.4385,[509]3.4312,[510]3.4254,[511]3.4188,[512]3.4142,[513]3.4080,[514]3.4065,[515]3.4084,[516]3.4033,[517]3.4032,[518]3.4024,[519]3.4029,[520]3.4073,[521]3.4062,[522]3.4047,[523]3.4105,[524]3.4092,[525]3.4076,[526]3.4028,[527]3.3979,[528]3.3942,[529]3.3913,[530]3.3883,[531]3.3852,[532]3.3797,[533]3.3735,[534]3.3692,[535]3.3700,[536]3.3728,[537]3.3759,[538]3.3785,[539]3.3812,[540]3.3865,[541]3.3898,[542]3.3922,[543]3.3865,[544]3.3822,[545]3.3819,[546]3.3753,[547]3.3688,[548]3.3624,[549]3.3557,[550]3.3497,[551]3.3436,[552]3.3378,[553]3.3319,[554]3.3298,[555]3.3283,[556]3.3311,[557]3.3351,[558]3.3410,[559]3.3455,[560]3.3508,[561]3.3490,

Final estimate: PPL = 3.3490 +/- 0.01849

llama_perf_context_print: load time = 226439.86 ms

llama_perf_context_print: prompt eval time = 8320298.42 ms / 287232 tokens ( 28.97 ms per token, 34.52 tokens per second)

llama_perf_context_print: eval time = 0.00 ms / 1 runs ( 0.00 ms per token, inf tokens per second)

llama_perf_context_print: total time = 8511632.28 ms / 287233 tokens

ubergarm Q2_K_R4

This is a custom quant I rolled with q8_0 for all attention/shared experts/embeddings loaded on GPU. The rest of the MoE down exps are q3_k_r4 and gate/up exps are q2_k_r4 which gives fast speed quant that fits nicely into under 256GB RAM and 24GB VRAM with about 32k context without sacrificing much perplexity.

This was run on ik_llama.cpp@127c6ee6

CUDA_VISIBLE_DEVICES="0," \

./build/bin/llama-perplexity \

--model /mnt/raid/models/ubergarm/DeepSeek-R1-GGUF/DeepSeek-R1-Q2_K_R4.gguf \

-ctk q8_0 \

-mla 2 -fa \

-amb 512 \

-fmoe \

--ctx-size 512 \

--ubatch-size 512 \

-f wiki.test.raw \

--seed 1337 \

--n-gpu-layers 63 \

--override-tensor exps=CPU \

--threads 24

main: build = 3597 (127c6ee6)

main: built with cc (Ubuntu 13.3.0-6ubuntu2~24.04) 13.3.0 for x86_64-linux-gnu

main: seed = 1337

llama_model_loader: - type f32: 361 tensors

llama_model_loader: - type q8_0: 612 tensors

llama_model_loader: - type q2_k_r4: 116 tensors

llama_model_loader: - type q3_k_r4: 58 tensors

llm_load_tensors: CPU buffer size = 241396.85 MiB

llm_load_tensors: CPU buffer size = 938.98 MiB

llm_load_tensors: CUDA0 buffer size = 17744.02 MiB

llama_kv_cache_init: CUDA0 KV buffer size = 72.94 MiB

llama_new_context_with_model: KV self size = 72.91 MiB, c^KV (q8_0): 72.91 MiB, kv^T: not used

llama_new_context_with_model: CUDA_Host output buffer size = 1.97 MiB

llama_new_context_with_model: CUDA0 compute buffer size = 503.00 MiB

llama_new_context_with_model: CUDA_Host compute buffer size = 162.01 MiB

llama_new_context_with_model: graph nodes = 3548

llama_new_context_with_model: graph splits = 118

system_info: n_threads = 24 / 48 | AVX = 1 | AVX_VNNI = 0 | AVX2 = 1 | AVX512 = 1 | AVX512_VBMI = 1 | AVX512_VNNI = 1 | AVX512_BF16 = 1 | FMA = 1 | NE

ON = 0 | SVE = 0 | ARM_FMA = 0 | F16C = 1 | FP16_VA = 0 | WASM_SIMD = 0 | BLAS = 1 | SSE3 = 1 | SSSE3 = 1 | VSX = 0 | MATMUL_INT8 = 0 | LLAMAFILE = 1

|

perplexity: tokenizing the input ..

perplexity: tokenization took 622.117 ms

perplexity: calculating perplexity over 561 chunks, n_ctx=512, batch_size=2048, n_seq=4

perplexity: 22.17 seconds per pass - ETA 51.82 minutes

[1]2.6638,[2]3.4777,[3]2.4750,[4]2.0889,[5]1.9114,[6]1.7840,[7]1.6778,[8]1.6280,[9]1.5861,[10]1.5368,[11]1.5350,[12]1.6021,[13]1.6219,[14]1.7566,[15]1.8981,[16]1.9568,[17]2.1267,[18]2.2596,[19]2.2162,[20]2.2076,[21]2.3177,[22]2.2827,[23]2.2506,[24]2.2664,[25]2.2356,[26]2.2031,[27]2.2509,[28]2.2621,[29]2.3150,[30]2.3456,[31]2.3842,[32]2.4047,[33]2.4491,[34]2.4968,[35]2.5548,[36]2.6101,[37]2.6450,[38]2.6943,[39]2.7349,[40]2.7982,[41]2.8432,[42]2.8527,[43]2.9058,[44]2.9198,[45]3.0016,[46]3.0547,[47]3.0161,[48]2.9682,[49]2.9447,[50]2.9692,[51]3.0185,[52]3.0358,[53]3.0904,[54]3.1052,[55]3.1362,[56]3.1730,[57]3.1878,[58]3.2298,[59]3.2355,[60]3.2852,[61]3.3261,[62]3.3815,[63]3.4167,[64]3.4623,[65]3.4705,[66]3.4568,[67]3.4360,[68]3.4732,[69]3.4763,[70]3.4917,[71]3.5079,[72]3.5222,[73]3.5335,[74]3.5558,[75]3.5337,[76]3.4827,[77]3.4411,[78]3.4385,[79]3.4195,[80]3.4069,[81]3.3681,[82]3.3782,[83]3.3509,[84]3.3178,[85]3.2861,[86]3.2623,[87]3.2651,[88]3.2385,[89]3.2313,[90]3.2041,[91]3.1805,[92]3.1557,[93]3.1293,[94]3.1076,[95]3.0903,[96]3.0928,[97]3.1020,[98]3.0908,[99]3.0718,[100]3.0734,[101]3.0656,[102]3.0834,[103]3.1118,[104]3.1334,[105]3.1289,[106]3.1553,[107]3.1798,[108]3.2007,[109]3.2368,[110]3.2717,[111]3.2932,[112]3.2641,[113]3.2514,[114]3.2308,[115]3.2142,[116]3.2089,[117]3.1865,[118]3.1646,[119]3.1440,[120]3.1220,[121]3.1077,[122]3.0867,[123]3.0684,[124]3.0491,[125]3.0306,[126]3.0122,[127]2.9989,[128]2.9941,[129]2.9858,[130]2.9752,[131]2.9681,[132]2.9766,[133]2.9844,[134]2.9892,[135]3.0006,[136]3.0188,[137]3.0355,[138]3.0423,[139]3.0529,[140]3.0518,[141]3.0514,[142]3.0485,[143]3.0472,[144]3.0406,[145]3.0305,[146]3.0274,[147]3.0301,[148]3.0286,[149]3.0286,[150]3.0209,[151]3.0173,[152]3.0128,[153]3.0070,[154]3.0063,[155]3.0096,[156]3.0102,[157]3.0149,[158]3.0234,[159]3.0244,[160]3.0334,[161]3.0417,[162]3.0509,[163]3.0566,[164]3.0781,[165]3.1021,[166]3.1200,[167]3.1341,[168]3.1601,[169]3.1830,[170]3.2043,[171]3.2285,[172]3.2094,[173]3.1897,[174]3.1763,[175]3.1635,[176]3.1512,[177]3.1393,[178]3.1260,[179]3.1114,[180]3.1151,[181]3.1294,[182]3.1451,[183]3.1596,[184]3.1737,[185]3.1836,[186]3.2002,[187]3.2150,[188]3.2297,[189]3.2397,[190]3.2401,[191]3.2467,[192]3.2485,[193]3.2522,[194]3.2726,[195]3.2824,[196]3.2955,[197]3.3053,[198]3.3084,[199]3.3139,[200]3.3115,[201]3.3268,[202]3.3208,[203]3.3263,[204]3.3285,[205]3.3289,[206]3.3309,[207]3.3401,[208]3.3495,[209]3.3596,[210]3.3591,[211]3.3530,[212]3.3525,[213]3.3601,[214]3.3613,[215]3.3673,[216]3.3670,[217]3.3614,[218]3.3608,[219]3.3607,[220]3.3586,[221]3.3583,[222]3.3578,[223]3.3582,[224]3.3630,[225]3.3651,[226]3.3555,[227]3.3541,[228]3.3557,[229]3.3600,[230]3.3664,[231]3.3725,[232]3.3629,[233]3.3560,[234]3.3588,[235]3.3588,[236]3.3679,[237]3.3768,[238]3.3863,[239]3.3968,[240]3.4056,[241]3.4171,[242]3.4330,[243]3.4464,[244]3.4550,[245]3.4673,[246]3.4779,[247]3.4755,[248]3.4711,[249]3.4687,[250]3.4611,[251]3.4578,[252]3.4592,[253]3.4623,[254]3.4688,[255]3.4747,[256]3.4776,[257]3.4796,[258]3.4799,[259]3.4823,[260]3.4840,[261]3.4844,[262]3.4823,[263]3.4878,[264]3.4897,[265]3.4893,[266]3.4911,[267]3.4934,[268]3.4977,[269]3.5007,[270]3.4989,[271]3.4964,[272]3.4887,[273]3.4893,[274]3.4830,[275]3.4721,[276]3.4619,[277]3.4634,[278]3.4747,[279]3.4802,[280]3.4880,[281]3.4954,[282]3.5012,[283]3.5084,[284]3.5151,[285]3.5294,[286]3.5318,[287]3.5344,[288]3.5386,[289]3.5405,[290]3.5319,[291]3.5245,[292]3.5265,[293]3.5266,[294]3.5257,[295]3.5240,[296]3.5264,[297]3.5278,[298]3.5327,[299]3.5397,[300]3.5427,[301]3.5466,[302]3.5492,[303]3.5500,[304]3.5482,[305]3.5604,[306]3.5677,[307]3.5791,[308]3.5665,[309]3.5614,[310]3.5521,[311]3.5569,[312]3.5602,[313]3.5680,[314]3.5700,[315]3.5730,[316]3.5737,[317]3.5747,[318]3.5748,[319]3.5752,[320]3.5794,[321]3.5793,[322]3.5807,[323]3.5867,[324]3.5868,[325]3.5913,[326]3.5962,[327]3.5998,[328]3.6018,[329]3.6030,[330]3.6091,[331]3.6139,[332]3.6182,[333]3.6161,[334]3.6152,[335]3.6149,[336]3.6146,[337]3.6152,[338]3.6152,[339]3.6172,[340]3.6206,[341]3.6262,[342]3.6355,[343]3.6454,[344]3.6503,[345]3.6426,[346]3.6354,[347]3.6331,[348]3.6250,[349]3.6211,[350]3.6196,[351]3.6242,[352]3.6400,[353]3.6490,[354]3.6624,[355]3.6718,[356]3.6773,[357]3.6895,[358]3.7002,[359]3.7034,[360]3.7098,[361]3.7190,[362]3.7284,[363]3.7341,[364]3.7405,[365]3.7472,[366]3.7586,[367]3.7673,[368]3.7743,[369]3.7824,[370]3.7911,[371]3.8057,[372]3.8153,[373]3.8182,[374]3.8215,[375]3.8263,[376]3.8395,[377]3.8505,[378]3.8528,[379]3.8518,[380]3.8480,[381]3.8524,[382]3.8581,[383]3.8616,[384]3.8662,[385]3.8700,[386]3.8763,[387]3.8823,[388]3.8854,[389]3.8739,[390]3.8638,[391]3.8534,[392]3.8475,[393]3.8382,[394]3.8292,[395]3.8196,[396]3.8089,[397]3.7993,[398]3.7888,[399]3.7777,[400]3.7692,[401]3.7583,[402]3.7471,[403]3.7373,[404]3.7257,[405]3.7151,[406]3.7038,[407]3.6937,[408]3.6845,[409]3.6753,[410]3.6691,[411]3.6709,[412]3.6663,[413]3.6695,[414]3.6725,[415]3.6698,[416]3.6700,[417]3.6722,[418]3.6661,[419]3.6677,[420]3.6650,[421]3.6640,[422]3.6657,[423]3.6652,[424]3.6696,[425]3.6691,[426]3.6693,[427]3.6687,[428]3.6715,[429]3.6729,[430]3.6760,[431]3.6769,[432]3.6759,[433]3.6722,[434]3.6730,[435]3.6667,[436]3.6610,[437]3.6572,[438]3.6553,[439]3.6538,[440]3.6589,[441]3.6640,[442]3.6715,[443]3.6693,[444]3.6698,[445]3.6710,[446]3.6763,[447]3.6788,[448]3.6813,[449]3.6840,[450]3.6879,[451]3.6915,[452]3.6939,[453]3.6952,[454]3.6932,[455]3.6955,[456]3.6953,[457]3.6978,[458]3.7028,[459]3.7032,[460]3.7027,[461]3.6988,[462]3.7024,[463]3.7098,[464]3.7157,[465]3.7091,[466]3.7079,[467]3.7076,[468]3.7093,[469]3.7067,[470]3.7041,[471]3.7044,[472]3.7055,[473]3.7047,[474]3.7034,[475]3.7047,[476]3.7031,[477]3.7023,[478]3.7030,[479]3.7053,[480]3.7078,[481]3.7041,[482]3.7078,[483]3.7063,[484]3.7096,[485]3.7163,[486]3.7190,[487]3.7225,[488]3.7279,[489]3.7299,[490]3.7346,[491]3.7405,[492]3.7450,[493]3.7447,[494]3.7457,[495]3.7479,[496]3.7495,[497]3.7526,[498]3.7526,[499]3.7518,[500]3.7555,[501]3.7599,[502]3.7587,[503]3.7567,[504]3.7593,[505]3.7622,[506]3.7705,[507]3.7730,[508]3.7763,[509]3.7681,[510]3.7634,[511]3.7571,[512]3.7529,[513]3.7470,[514]3.7466,[515]3.7497,[516]3.7454,[517]3.7459,[518]3.7450,[519]3.7460,[520]3.7510,[521]3.7495,[522]3.7477,[523]3.7541,[524]3.7529,[525]3.7515,[526]3.7476,[527]3.7418,[528]3.7389,[529]3.7353,[530]3.7325,[531]3.7289,[532]3.7221,[533]3.7155,[534]3.7116,[535]3.7130,[536]3.7160,[537]3.7199,[538]3.7231,[539]3.7259,[540]3.7314,[541]3.7352,[542]3.7375,[543]3.7323,[544]3.7285,[545]3.7281,[546]3.7207,[547]3.7147,[548]3.7080,[549]3.7014,[550]3.6956,[551]3.6899,[552]3.6844,[553]3.6791,[554]3.6786,[555]3.6772,[556]3.6796,[557]3.6838,[558]3.6899,[559]3.6946,[560]3.7001,[561]3.6975,

Final estimate: PPL = 3.6975 +/- 0.02115

llama_print_timings: load time = 14720.43 ms

llama_print_timings: sample time = 0.00 ms / 1 runs ( 0.00 ms per token, inf tokens per second)

llama_print_timings: prompt eval time = 2646411.18 ms / 287232 tokens ( 9.21 ms per token, 108.54 tokens per second)

llama_print_timings: eval time = 0.00 ms / 1 runs ( 0.00 ms per token, inf tokens per second)

llama_print_timings: total time = 2649939.46 ms / 287233 tokens

ubergarm Q2_K_R4 with various -ser N,1

Testing same quant and config as above but with -ser 4,1 etc to get a feel for quality vs speed tradeoffs.

These were run on ik_llama.cpp@127c6ee6

CUDA_VISIBLE_DEVICES="0," \

./build/bin/llama-perplexity \

--model /mnt/raid/models/ubergarm/DeepSeek-R1-GGUF/DeepSeek-R1-Q2_K_R4.gguf \

-ctk q8_0 \

-mla 2 -fa \

-amb 512 \

-fmoe \

-ser 4,1 \

--ctx-size 512 \

--ubatch-size 512 \

-f wiki.test.raw \

--seed 1337 \

--n-gpu-layers 63 \

--override-tensor exps=CPU \

--threads 24

Device 0: NVIDIA RTX A6000, compute capability 8.6, VMM: yes

main: build = 3597 (127c6ee6)

main: built with cc (Ubuntu 13.3.0-6ubuntu2~24.04) 13.3.0 for x86_64-linux-gnu

main: seed = 1337

llama_model_loader: loaded meta data with 48 key-value pairs and 1147 tensors from /mnt/raid/models/ubergarm/DeepSeek-R1-GGUF/DeepSeek-R1-Q2_K_R4.gguf

(version GGUF V3 (latest))

llama_model_loader: - type f32: 361 tensors

llama_model_loader: - type q8_0: 612 tensors

llama_model_loader: - type q2_k_r4: 116 tensors

llama_model_loader: - type q3_k_r4: 58 tensors

llm_load_tensors: CPU buffer size = 241396.85 MiB

llm_load_tensors: CPU buffer size = 938.98 MiB

llm_load_tensors: CUDA0 buffer size = 17744.02 MiB

llama_kv_cache_init: CUDA0 KV buffer size = 72.94 MiB

llama_new_context_with_model: KV self size = 72.91 MiB, c^KV (q8_0): 72.91 MiB, kv^T: not used

llama_new_context_with_model: CUDA_Host output buffer size = 1.97 MiB

llama_new_context_with_model: CUDA0 compute buffer size = 503.00 MiB

llama_new_context_with_model: CUDA_Host compute buffer size = 162.01 MiB

llama_new_context_with_model: graph nodes = 3548

llama_new_context_with_model: graph splits = 118

system_info: n_threads = 24 / 48 | AVX = 1 | AVX_VNNI = 0 | AVX2 = 1 | AVX512 = 1 | AVX512_VBMI = 1 | AVX512_VNNI = 1 | AVX512_BF16 = 1 | FMA = 1 | NE

ON = 0 | SVE = 0 | ARM_FMA = 0 | F16C = 1 | FP16_VA = 0 | WASM_SIMD = 0 | BLAS = 1 | SSE3 = 1 | SSSE3 = 1 | VSX = 0 | MATMUL_INT8 = 0 | LLAMAFILE = 1

|

# with -ser 4,1

perplexity: tokenizing the input ..

perplexity: tokenization took 604.75 ms

perplexity: calculating perplexity over 561 chunks, n_ctx=512, batch_size=2048, n_seq=4

perplexity: 13.04 seconds per pass - ETA 30.48 minutes

[1]2.7566,[2]3.5635,[3]2.5376,[4]2.2133,[5]2.0562,[6]1.9544,[7]1.8575,[8]1.8206,[9]1.7899,[10]1.7276,[11]1.7315,[12]1.8148,[13]1.8621,[14]1.9970,[15]2.1476,[16]2.2009,[17]2.3909,[18]2.5311,[19]2.4924,[20]2.4660,[21]2.5846,[22]2.5381,[23]2.4909,[24]2.5169,[25]2.4747,[26]2.4415,[27]2.4895,[28]2.4900,[29]2.5527,[30]2.5844,[31]2.6249,[32]2.6419,[33]2.6900,[34]2.7411,[35]2.8049,[36]2.8666,[37]2.9000,[38]2.9508,[39]2.9934,[40]3.0584,[41]3.0966,[42]3.1029,[43]3.1541,[44]3.1631,[45]3.2510,[46]3.3056,[47]3.2714,[48]3.2337,[49]3.2203,[50]3.2441,[51]3.2937,[52]3.3088,[53]3.3648,[54]3.3842,[55]3.4177,[56]3.4566,[57]3.4802,[58]3.5231,[59]3.5286,[60]3.5828,[61]3.6248,[62]3.6818,[63]3.7188,[64]3.7669,[65]3.7770,[66]3.7741,[67]3.7554,[68]3.7894,[69]3.7957,[70]3.8155,[71]3.8336,[72]3.8482,[73]3.8581,[74]3.8803,[75]3.8576,[76]3.8006,[77]3.7567,[78]3.7570,[79]3.7380,[80]3.7306,[81]3.6892,[82]3.6976,[83]3.6788,[84]3.6468,[85]3.6175,[86]3.5977,[87]3.6166,[88]3.5909,[89]3.5849,[90]3.5628,[91]3.5419,[92]3.5188,[93]3.4947,[94]3.4766,[95]3.4582,[96]3.4635,[97]3.4770,[98]3.4648,[99]3.4479,[100]3.4481,[101]3.4369,[102]3.4545,[103]3.4847,[104]3.5091,[105]3.5066,[106]3.5396,[107]3.5644,[108]3.5854,[109]3.6243,[110]3.6607,[111]3.6853,[112]3.6525,[113]3.6384,[114]3.6172,[115]3.5987,[116]3.5923,[117]3.5714,[118]3.5475,[119]3.5258,[120]3.5023,[121]3.4869,[122]3.4619,[123]3.4426,[124]3.4229,[125]3.4047,[126]3.3876,[127]3.3766,[128]3.3707,[129]3.3639,[130]3.3555,[131]3.3492,[132]3.3556,[133]3.3630,[134]3.3679,[135]3.3806,[136]3.3993,[137]3.4173,[138]3.4236,[139]3.4345,[140]3.4313,[141]3.4291,[142]3.4229,[143]3.4184,[144]3.4084,[145]3.3970,[146]3.3921,[147]3.3929,[148]3.3895,[149]3.3881,[150]3.3773,[151]3.3724,[152]3.3654,[153]3.3570,[154]3.3543,[155]3.3575,[156]3.3558,[157]3.3599,[158]3.3687,[159]3.3700,[160]3.3792,[161]3.3861,[162]3.3940,[163]3.4013,[164]3.4242,[165]3.4507,[166]3.4707,[167]3.4853,[168]3.5134,[169]3.5376,[170]3.5636,[171]3.5889,[172]3.5672,[173]3.5461,[174]3.5336,[175]3.5224,[176]3.5099,[177]3.4987,[178]3.4862,[179]3.4722,[180]3.4760,[181]3.4907,[182]3.5072,[183]3.5225,[184]3.5380,[185]3.5492,[186]3.5669,[187]3.5825,[188]3.5986,[189]3.6102,[190]3.6092,[191]3.6161,[192]3.6179,[193]3.6219,[194]3.6438,[195]3.6527,[196]3.6656,[197]3.6750,[198]3.6773,[199]3.6828,[200]3.6787,[201]3.6945,[202]3.6859,[203]3.6899,[204]3.6913,[205]3.6913,[206]3.6915,[207]3.7009,[208]3.7091,[209]3.7186,[210]3.7168,[211]3.7094,[212]3.7082,[213]3.7154,[214]3.7162,[215]3.7221,[216]3.7205,[217]3.7133,[218]3.7120,[219]3.7115,[220]3.7083,[221]3.7062,[222]3.7049,[223]3.7052,[224]3.7097,[225]3.7106,[226]3.7010,[227]3.6990,[228]3.7001,[229]3.7028,[230]3.7086,[231]3.7142,[232]3.7035,[233]3.6969,[234]3.7003,[235]3.7000,[236]3.7105,[237]3.7196,[238]3.7296,[239]3.7397,[240]3.7490,[241]3.7612,[242]3.7780,[243]3.7920,[244]3.8010,[245]3.8136,[246]3.8253,[247]3.8218,[248]3.8166,[249]3.8127,[250]3.8035,[251]3.7989,[252]3.7990,[253]3.8014,[254]3.8078,[255]3.8131,[256]3.8157,[257]3.8173,[258]3.8165,[259]3.8192,[260]3.8210,[261]3.8216,[262]3.8184,[263]3.8242,[264]3.8259,[265]3.8253,[266]3.8270,[267]3.8292,[268]3.8335,[269]3.8366,[270]3.8339,[271]3.8310,[272]3.8212,[273]3.8237,[274]3.8171,[275]3.8064,[276]3.7978,[277]3.8000,[278]3.8117,[279]3.8180,[280]3.8261,[281]3.8342,[282]3.8406,[283]3.8481,[284]3.8552,[285]3.8705,[286]3.8717,[287]3.8735,[288]3.8772,[289]3.8784,[290]3.8700,[291]3.8628,[292]3.8670,[293]3.8667,[294]3.8666,[295]3.8643,[296]3.8674,[297]3.8695,[298]3.8749,[299]3.8810,[300]3.8834,[301]3.8873,[302]3.8905,[303]3.8920,[304]3.8897,[305]3.9028,[306]3.9107,[307]3.9233,[308]3.9105,[309]3.9049,[310]3.8953,[311]3.9003,[312]3.9029,[313]3.9102,[314]3.9117,[315]3.9139,[316]3.9146,[317]3.9158,[318]3.9153,[319]3.9149,[320]3.9197,[321]3.9192,[322]3.9198,[323]3.9267,[324]3.9268,[325]3.9321,[326]3.9366,[327]3.9413,[328]3.9428,[329]3.9432,[330]3.9494,[331]3.9548,[332]3.9594,[333]3.9565,[334]3.9546,[335]3.9540,[336]3.9526,[337]3.9527,[338]3.9517,[339]3.9532,[340]3.9559,[341]3.9612,[342]3.9708,[343]3.9821,[344]3.9881,[345]3.9815,[346]3.9747,[347]3.9737,[348]3.9658,[349]3.9626,[350]3.9605,[351]3.9653,[352]3.9825,[353]3.9922,[354]4.0070,[355]4.0165,[356]4.0224,[357]4.0353,[358]4.0467,[359]4.0498,[360]4.0566,[361]4.0663,[362]4.0752,[363]4.0821,[364]4.0883,[365]4.0951,[366]4.1072,[367]4.1167,[368]4.1239,[369]4.1321,[370]4.1405,[371]4.1558,[372]4.1662,[373]4.1686,[374]4.1717,[375]4.1765,[376]4.1906,[377]4.2018,[378]4.2036,[379]4.2020,[380]4.1979,[381]4.2015,[382]4.2068,[383]4.2105,[384]4.2151,[385]4.2190,[386]4.2261,[387]4.2320,[388]4.2353,[389]4.2226,[390]4.2128,[391]4.2012,[392]4.1953,[393]4.1874,[394]4.1781,[395]4.1686,[396]4.1579,[397]4.1479,[398]4.1364,[399]4.1252,[400]4.1158,[401]4.1039,[402]4.0928,[403]4.0826,[404]4.0696,[405]4.0578,[406]4.0457,[407]4.0346,[408]4.0253,[409]4.0163,[410]4.0103,[411]4.0126,[412]4.0087,[413]4.0125,[414]4.0162,[415]4.0133,[416]4.0137,[417]4.0178,[418]4.0120,[419]4.0138,[420]4.0103,[421]4.0092,[422]4.0116,[423]4.0108,[424]4.0153,[425]4.0150,[426]4.0145,[427]4.0133,[428]4.0172,[429]4.0179,[430]4.0210,[431]4.0221,[432]4.0206,[433]4.0161,[434]4.0172,[435]4.0101,[436]4.0042,[437]3.9999,[438]3.9976,[439]3.9962,[440]4.0016,[441]4.0068,[442]4.0145,[443]4.0118,[444]4.0119,[445]4.0124,[446]4.0178,[447]4.0204,[448]4.0229,[449]4.0258,[450]4.0300,[451]4.0332,[452]4.0355,[453]4.0372,[454]4.0350,[455]4.0366,[456]4.0358,[457]4.0386,[458]4.0437,[459]4.0436,[460]4.0429,[461]4.0385,[462]4.0420,[463]4.0498,[464]4.0555,[465]4.0492,[466]4.0484,[467]4.0478,[468]4.0507,[469]4.0484,[470]4.0456,[471]4.0462,[472]4.0475,[473]4.0461,[474]4.0448,[475]4.0461,[476]4.0445,[477]4.0431,[478]4.0452,[479]4.0474,[480]4.0498,[481]4.0451,[482]4.0485,[483]4.0468,[484]4.0501,[485]4.0570,[486]4.0598,[487]4.0636,[488]4.0693,[489]4.0709,[490]4.0753,[491]4.0819,[492]4.0865,[493]4.0859,[494]4.0871,[495]4.0892,[496]4.0911,[497]4.0942,[498]4.0940,[499]4.0930,[500]4.0963,[501]4.1008,[502]4.0998,[503]4.0970,[504]4.0993,[505]4.1025,[506]4.1110,[507]4.1133,[508]4.1169,[509]4.1081,[510]4.1046,[511]4.0984,[512]4.0942,[513]4.0882,[514]4.0876,[515]4.0906,[516]4.0874,[517]4.0874,[518]4.0871,[519]4.0877,[520]4.0927,[521]4.0910,[522]4.0893,[523]4.0966,[524]4.0959,[525]4.0942,[526]4.0906,[527]4.0840,[528]4.0809,[529]4.0771,[530]4.0736,[531]4.0699,[532]4.0620,[533]4.0548,[534]4.0513,[535]4.0528,[536]4.0558,[537]4.0596,[538]4.0640,[539]4.0670,[540]4.0730,[541]4.0768,[542]4.0797,[543]4.0759,[544]4.0717,[545]4.0708,[546]4.0626,[547]4.0565,[548]4.0490,[549]4.0425,[550]4.0367,[551]4.0308,[552]4.0249,[553]4.0194,[554]4.0198,[555]4.0182,[556]4.0211,[557]4.0259,[558]4.0322,[559]4.0371,[560]4.0430,[561]4.0400,

Final estimate: PPL = 4.0400 +/- 0.02311

llama_print_timings: load time = 36413.72 ms

llama_print_timings: sample time = 0.00 ms / 1 runs ( 0.00 ms per token, inf tokens per second)

llama_print_timings: prompt eval time = 1702951.63 ms / 287232 tokens ( 5.93 ms per token, 168.67 tokens per second)

llama_print_timings: eval time = 0.00 ms / 1 runs ( 0.00 ms per token, inf tokens per second)

llama_print_timings: total time = 1706441.65 ms / 287233 tokens

## again with -ser 6,1

llama_kv_cache_init: CUDA0 KV buffer size = 72.94 MiB

llama_new_context_with_model: KV self size = 72.91 MiB, c^KV (q8_0): 72.91 MiB, kv^T: not used

llama_new_context_with_model: CUDA_Host output buffer size = 1.97 MiB

llama_new_context_with_model: CUDA0 compute buffer size = 503.00 MiB

llama_new_context_with_model: CUDA_Host compute buffer size = 162.01 MiB

llama_new_context_with_model: graph nodes = 3548

llama_new_context_with_model: graph splits = 118

system_info: n_threads = 24 / 48 | AVX = 1 | AVX_VNNI = 0 | AVX2 = 1 | AVX512 = 1 | AVX512_VBMI = 1 | AVX512_VNNI = 1 | AVX512_BF16 = 1 | FMA = 1 | NEON = 0 | SVE = 0 | ARM_FMA = 0 | F16C = 1 | FP16_VA = 0 | WASM_SIMD = 0 | BLAS = 1 | SSE3 = 1 | SSSE3 = 1 | VSX = 0 | MATMUL_INT8 = 0 | LLAMAFILE = 1 |

perplexity: tokenizing the input ..

perplexity: tokenization took 608.059 ms

perplexity: calculating perplexity over 561 chunks, n_ctx=512, batch_size=2048, n_seq=4

perplexity: 15.81 seconds per pass - ETA 36.93 minutes

[1]2.6383,[2]3.4392,[3]2.4566,[4]2.0850,[5]1.9090,[6]1.7848,[7]1.6805,[8]1.6308,[9]1.5919,[10]1.5463,[11]1.5494,[12]1.6200,[13]1.6404,[14]1.7746,[15]1.9251,[16]1.9812,[17]2.1567,[18]2.2874,[19]2.2496,[20]2.2360,[21]2.3495,[22]2.3124,[23]2.2781,[24]2.2966,[25]2.2613,[26]2.2293,[27]2.2764,[28]2.2883,[29]2.3441,[30]2.3747,[31]2.4141,[32]2.4356,[33]2.4773,[34]2.5225,[35]2.5798,[36]2.6357,[37]2.6692,[38]2.7190,[39]2.7605,[40]2.8239,[41]2.8673,[42]2.8753,[43]2.9274,[44]2.9418,[45]3.0241,[46]3.0761,[47]3.0411,[48]2.9954,[49]2.9720,[50]2.9965,[51]3.0450,[52]3.0606,[53]3.1138,[54]3.1304,[55]3.1600,[56]3.1970,[57]3.2131,[58]3.2561,[59]3.2645,[60]3.3166,[61]3.3573,[62]3.4157,[63]3.4524,[64]3.4987,[65]3.5063,[66]3.4949,[67]3.4740,[68]3.5101,[69]3.5120,[70]3.5317,[71]3.5477,[72]3.5616,[73]3.5728,[74]3.5932,[75]3.5705,[76]3.5180,[77]3.4777,[78]3.4751,[79]3.4568,[80]3.4439,[81]3.4042,[82]3.4112,[83]3.3874,[84]3.3539,[85]3.3213,[86]3.2985,[87]3.3058,[88]3.2793,[89]3.2703,[90]3.2456,[91]3.2217,[92]3.1996,[93]3.1747,[94]3.1517,[95]3.1352,[96]3.1383,[97]3.1483,[98]3.1361,[99]3.1177,[100]3.1197,[101]3.1118,[102]3.1278,[103]3.1563,[104]3.1767,[105]3.1733,[106]3.2008,[107]3.2254,[108]3.2456,[109]3.2812,[110]3.3161,[111]3.3382,[112]3.3082,[113]3.2952,[114]3.2755,[115]3.2586,[116]3.2518,[117]3.2286,[118]3.2061,[119]3.1864,[120]3.1644,[121]3.1488,[122]3.1277,[123]3.1089,[124]3.0897,[125]3.0718,[126]3.0538,[127]3.0404,[128]3.0348,[129]3.0265,[130]3.0165,[131]3.0092,[132]3.0150,[133]3.0224,[134]3.0265,[135]3.0378,[136]3.0561,[137]3.0727,[138]3.0800,[139]3.0907,[140]3.0892,[141]3.0880,[142]3.0845,[143]3.0826,[144]3.0758,[145]3.0663,[146]3.0631,[147]3.0662,[148]3.0649,[149]3.0643,[150]3.0564,[151]3.0524,[152]3.0471,[153]3.0411,[154]3.0400,[155]3.0432,[156]3.0431,[157]3.0477,[158]3.0567,[159]3.0579,[160]3.0669,[161]3.0749,[162]3.0838,[163]3.0901,[164]3.1119,[165]3.1367,[166]3.1548,[167]3.1696,[168]3.1962,[169]3.2196,[170]3.2420,[171]3.2661,[172]3.2467,[173]3.2266,[174]3.2125,[175]3.1996,[176]3.1862,[177]3.1753,[178]3.1621,[179]3.1475,[180]3.1508,[181]3.1650,[182]3.1807,[183]3.1952,[184]3.2096,[185]3.2197,[186]3.2367,[187]3.2520,[188]3.2670,[189]3.2774,[190]3.2771,[191]3.2836,[192]3.2861,[193]3.2902,[194]3.3108,[195]3.3200,[196]3.3329,[197]3.3423,[198]3.3456,[199]3.3513,[200]3.3487,[201]3.3644,[202]3.3578,[203]3.3627,[204]3.3650,[205]3.3660,[206]3.3680,[207]3.3772,[208]3.3868,[209]3.3968,[210]3.3965,[211]3.3901,[212]3.3888,[213]3.3963,[214]3.3974,[215]3.4026,[216]3.4023,[217]3.3963,[218]3.3952,[219]3.3949,[220]3.3928,[221]3.3922,[222]3.3914,[223]3.3920,[224]3.3971,[225]3.3990,[226]3.3893,[227]3.3880,[228]3.3893,[229]3.3934,[230]3.3995,[231]3.4054,[232]3.3962,[233]3.3892,[234]3.3920,[235]3.3917,[236]3.4013,[237]3.4105,[238]3.4201,[239]3.4303,[240]3.4394,[241]3.4509,[242]3.4661,[243]3.4791,[244]3.4880,[245]3.5000,[246]3.5109,[247]3.5084,[248]3.5043,[249]3.5017,[250]3.4936,[251]3.4902,[252]3.4911,[253]3.4942,[254]3.5007,[255]3.5065,[256]3.5093,[257]3.5113,[258]3.5115,[259]3.5142,[260]3.5159,[261]3.5164,[262]3.5145,[263]3.5205,[264]3.5225,[265]3.5218,[266]3.5235,[267]3.5258,[268]3.5298,[269]3.5330,[270]3.5310,[271]3.5287,[272]3.5208,[273]3.5217,[274]3.5154,[275]3.5044,[276]3.4937,[277]3.4956,[278]3.5066,[279]3.5124,[280]3.5204,[281]3.5275,[282]3.5336,[283]3.5407,[284]3.5479,[285]3.5618,[286]3.5638,[287]3.5661,[288]3.5702,[289]3.5723,[290]3.5640,[291]3.5573,[292]3.5601,[293]3.5595,[294]3.5590,[295]3.5572,[296]3.5593,[297]3.5607,[298]3.5658,[299]3.5727,[300]3.5756,[301]3.5796,[302]3.5822,[303]3.5835,[304]3.5817,[305]3.5937,[306]3.6013,[307]3.6130,[308]3.6006,[309]3.5950,[310]3.5858,[311]3.5906,[312]3.5932,[313]3.6006,[314]3.6025,[315]3.6052,[316]3.6060,[317]3.6070,[318]3.6071,[319]3.6076,[320]3.6119,[321]3.6119,[322]3.6134,[323]3.6199,[324]3.6201,[325]3.6247,[326]3.6300,[327]3.6338,[328]3.6362,[329]3.6374,[330]3.6436,[331]3.6484,[332]3.6528,[333]3.6507,[334]3.6496,[335]3.6493,[336]3.6487,[337]3.6491,[338]3.6492,[339]3.6512,[340]3.6547,[341]3.6600,[342]3.6695,[343]3.6796,[344]3.6848,[345]3.6765,[346]3.6696,[347]3.6677,[348]3.6601,[349]3.6564,[350]3.6545,[351]3.6590,[352]3.6751,[353]3.6840,[354]3.6979,[355]3.7068,[356]3.7124,[357]3.7248,[358]3.7354,[359]3.7387,[360]3.7452,[361]3.7545,[362]3.7639,[363]3.7694,[364]3.7756,[365]3.7823,[366]3.7938,[367]3.8022,[368]3.8094,[369]3.8175,[370]3.8264,[371]3.8411,[372]3.8507,[373]3.8534,[374]3.8566,[375]3.8612,[376]3.8748,[377]3.8859,[378]3.8879,[379]3.8866,[380]3.8829,[381]3.8870,[382]3.8927,[383]3.8964,[384]3.9009,[385]3.9048,[386]3.9115,[387]3.9175,[388]3.9207,[389]3.9090,[390]3.8992,[391]3.8885,[392]3.8827,[393]3.8740,[394]3.8651,[395]3.8553,[396]3.8447,[397]3.8354,[398]3.8246,[399]3.8137,[400]3.8050,[401]3.7938,[402]3.7825,[403]3.7724,[404]3.7607,[405]3.7501,[406]3.7389,[407]3.7288,[408]3.7196,[409]3.7106,[410]3.7044,[411]3.7062,[412]3.7017,[413]3.7045,[414]3.7075,[415]3.7046,[416]3.7048,[417]3.7074,[418]3.7013,[419]3.7033,[420]3.7006,[421]3.6995,[422]3.7013,[423]3.7008,[424]3.7054,[425]3.7051,[426]3.7051,[427]3.7042,[428]3.7072,[429]3.7086,[430]3.7119,[431]3.7130,[432]3.7119,[433]3.7080,[434]3.7090,[435]3.7024,[436]3.6967,[437]3.6930,[438]3.6911,[439]3.6894,[440]3.6946,[441]3.6996,[442]3.7070,[443]3.7049,[444]3.7051,[445]3.7062,[446]3.7114,[447]3.7139,[448]3.7160,[449]3.7188,[450]3.7230,[451]3.7264,[452]3.7286,[453]3.7301,[454]3.7282,[455]3.7304,[456]3.7301,[457]3.7328,[458]3.7378,[459]3.7382,[460]3.7377,[461]3.7339,[462]3.7376,[463]3.7451,[464]3.7509,[465]3.7444,[466]3.7430,[467]3.7421,[468]3.7442,[469]3.7417,[470]3.7389,[471]3.7392,[472]3.7403,[473]3.7394,[474]3.7383,[475]3.7398,[476]3.7378,[477]3.7367,[478]3.7376,[479]3.7398,[480]3.7420,[481]3.7381,[482]3.7415,[483]3.7402,[484]3.7436,[485]3.7502,[486]3.7532,[487]3.7565,[488]3.7623,[489]3.7642,[490]3.7687,[491]3.7748,[492]3.7793,[493]3.7789,[494]3.7798,[495]3.7820,[496]3.7838,[497]3.7869,[498]3.7871,[499]3.7865,[500]3.7901,[501]3.7947,[502]3.7934,[503]3.7912,[504]3.7933,[505]3.7963,[506]3.8046,[507]3.8071,[508]3.8105,[509]3.8022,[510]3.7980,[511]3.7914,[512]3.7870,[513]3.7813,[514]3.7809,[515]3.7836,[516]3.7793,[517]3.7794,[518]3.7790,[519]3.7798,[520]3.7846,[521]3.7831,[522]3.7814,[523]3.7880,[524]3.7868,[525]3.7852,[526]3.7814,[527]3.7752,[528]3.7717,[529]3.7682,[530]3.7650,[531]3.7615,[532]3.7545,[533]3.7481,[534]3.7443,[535]3.7456,[536]3.7485,[537]3.7524,[538]3.7561,[539]3.7590,[540]3.7645,[541]3.7680,[542]3.7704,[543]3.7656,[544]3.7619,[545]3.7613,[546]3.7538,[547]3.7477,[548]3.7409,[549]3.7342,[550]3.7282,[551]3.7222,[552]3.7165,[553]3.7113,[554]3.7108,[555]3.7094,[556]3.7121,[557]3.7164,[558]3.7226,[559]3.7273,[560]3.7330,[561]3.7305,

Final estimate: PPL = 3.7305 +/- 0.02118

llama_print_timings: load time = 9810.20 ms

llama_print_timings: sample time = 0.00 ms / 1 runs ( 0.00 ms per token, inf tokens per second)

llama_print_timings: prompt eval time = 2166647.49 ms / 287232 tokens ( 7.54 ms per token, 132.57 tokens per second)

llama_print_timings: eval time = 0.00 ms / 1 runs ( 0.00 ms per token, inf tokens per second)

llama_print_timings: total time = 2170176.48 ms / 287233 tokens

## again with -ser 5,1

perplexity: tokenizing the input ..

perplexity: tokenization took 607.579 ms

perplexity: calculating perplexity over 561 chunks, n_ctx=512, batch_size=2048, n_seq=4

perplexity: 14.10 seconds per pass - ETA 32.95 minutes

[1]2.6830,[2]3.4757,[3]2.4956,[4]2.1153,[5]1.9387,[6]1.8172,[7]1.7104,[8]1.6689,[9]1.6385,[10]1.5935,[11]1.5975,[12]1.6683,[13]1.6956,[14]1.8311,[15]1.9839,[16]2.0386,[17]2.2173,[18]2.3501,[19]2.3057,[20]2.2880,[21]2.4071,[22]2.3703,[23]2.3309,[24]2.3495,[25]2.3106,[26]2.2796,[27]2.3271,[28]2.3352,[29]2.3927,[30]2.4247,[31]2.4685,[32]2.4886,[33]2.5350,[34]2.5831,[35]2.6447,[36]2.7047,[37]2.7373,[38]2.7885,[39]2.8292,[40]2.8929,[41]2.9324,[42]2.9404,[43]2.9917,[44]3.0038,[45]3.0875,[46]3.1397,[47]3.1067,[48]3.0629,[49]3.0412,[50]3.0654,[51]3.1151,[52]3.1300,[53]3.1847,[54]3.2018,[55]3.2332,[56]3.2701,[57]3.2880,[58]3.3306,[59]3.3381,[60]3.3905,[61]3.4318,[62]3.4917,[63]3.5281,[64]3.5750,[65]3.5844,[66]3.5767,[67]3.5584,[68]3.5947,[69]3.5962,[70]3.6180,[71]3.6343,[72]3.6481,[73]3.6594,[74]3.6812,[75]3.6589,[76]3.6034,[77]3.5623,[78]3.5605,[79]3.5415,[80]3.5290,[81]3.4895,[82]3.4956,[83]3.4736,[84]3.4393,[85]3.4083,[86]3.3866,[87]3.3964,[88]3.3691,[89]3.3597,[90]3.3349,[91]3.3109,[92]3.2869,[93]3.2642,[94]3.2418,[95]3.2256,[96]3.2276,[97]3.2380,[98]3.2244,[99]3.2081,[100]3.2099,[101]3.2009,[102]3.2179,[103]3.2462,[104]3.2681,[105]3.2647,[106]3.2941,[107]3.3188,[108]3.3387,[109]3.3750,[110]3.4104,[111]3.4332,[112]3.4029,[113]3.3898,[114]3.3697,[115]3.3519,[116]3.3468,[117]3.3244,[118]3.3007,[119]3.2811,[120]3.2597,[121]3.2429,[122]3.2212,[123]3.2017,[124]3.1820,[125]3.1638,[126]3.1461,[127]3.1339,[128]3.1291,[129]3.1218,[130]3.1121,[131]3.1057,[132]3.1129,[133]3.1208,[134]3.1263,[135]3.1380,[136]3.1564,[137]3.1732,[138]3.1802,[139]3.1912,[140]3.1892,[141]3.1872,[142]3.1827,[143]3.1799,[144]3.1723,[145]3.1624,[146]3.1591,[147]3.1619,[148]3.1597,[149]3.1587,[150]3.1496,[151]3.1454,[152]3.1394,[153]3.1328,[154]3.1312,[155]3.1343,[156]3.1337,[157]3.1383,[158]3.1474,[159]3.1488,[160]3.1576,[161]3.1651,[162]3.1739,[163]3.1800,[164]3.2023,[165]3.2275,[166]3.2462,[167]3.2601,[168]3.2868,[169]3.3099,[170]3.3323,[171]3.3567,[172]3.3367,[173]3.3164,[174]3.3017,[175]3.2902,[176]3.2771,[177]3.2670,[178]3.2535,[179]3.2393,[180]3.2429,[181]3.2571,[182]3.2732,[183]3.2874,[184]3.3014,[185]3.3122,[186]3.3295,[187]3.3446,[188]3.3599,[189]3.3705,[190]3.3696,[191]3.3765,[192]3.3786,[193]3.3824,[194]3.4032,[195]3.4122,[196]3.4251,[197]3.4347,[198]3.4375,[199]3.4438,[200]3.4407,[201]3.4567,[202]3.4494,[203]3.4545,[204]3.4569,[205]3.4574,[206]3.4587,[207]3.4683,[208]3.4772,[209]3.4874,[210]3.4869,[211]3.4797,[212]3.4785,[213]3.4861,[214]3.4870,[215]3.4923,[216]3.4914,[217]3.4849,[218]3.4840,[219]3.4835,[220]3.4817,[221]3.4806,[222]3.4792,[223]3.4798,[224]3.4851,[225]3.4867,[226]3.4768,[227]3.4749,[228]3.4761,[229]3.4794,[230]3.4856,[231]3.4916,[232]3.4821,[233]3.4752,[234]3.4783,[235]3.4784,[236]3.4883,[237]3.4971,[238]3.5062,[239]3.5170,[240]3.5263,[241]3.5383,[242]3.5543,[243]3.5684,[244]3.5778,[245]3.5897,[246]3.6008,[247]3.5980,[248]3.5934,[249]3.5902,[250]3.5814,[251]3.5777,[252]3.5788,[253]3.5821,[254]3.5884,[255]3.5943,[256]3.5970,[257]3.5990,[258]3.5989,[259]3.6015,[260]3.6031,[261]3.6035,[262]3.6012,[263]3.6072,[264]3.6090,[265]3.6087,[266]3.6106,[267]3.6128,[268]3.6166,[269]3.6194,[270]3.6171,[271]3.6147,[272]3.6056,[273]3.6071,[274]3.6006,[275]3.5897,[276]3.5795,[277]3.5817,[278]3.5930,[279]3.5989,[280]3.6071,[281]3.6147,[282]3.6212,[283]3.6288,[284]3.6360,[285]3.6504,[286]3.6522,[287]3.6548,[288]3.6587,[289]3.6605,[290]3.6523,[291]3.6454,[292]3.6481,[293]3.6476,[294]3.6476,[295]3.6457,[296]3.6483,[297]3.6491,[298]3.6545,[299]3.6611,[300]3.6639,[301]3.6679,[302]3.6708,[303]3.6722,[304]3.6700,[305]3.6824,[306]3.6901,[307]3.7024,[308]3.6897,[309]3.6841,[310]3.6748,[311]3.6796,[312]3.6824,[313]3.6903,[314]3.6917,[315]3.6941,[316]3.6951,[317]3.6964,[318]3.6963,[319]3.6963,[320]3.7011,[321]3.7008,[322]3.7018,[323]3.7083,[324]3.7083,[325]3.7132,[326]3.7180,[327]3.7228,[328]3.7249,[329]3.7262,[330]3.7325,[331]3.7374,[332]3.7421,[333]3.7397,[334]3.7384,[335]3.7381,[336]3.7373,[337]3.7375,[338]3.7375,[339]3.7392,[340]3.7424,[341]3.7477,[342]3.7571,[343]3.7674,[344]3.7732,[345]3.7656,[346]3.7595,[347]3.7577,[348]3.7500,[349]3.7461,[350]3.7443,[351]3.7491,[352]3.7655,[353]3.7748,[354]3.7888,[355]3.7978,[356]3.8035,[357]3.8162,[358]3.8266,[359]3.8295,[360]3.8362,[361]3.8455,[362]3.8548,[363]3.8607,[364]3.8666,[365]3.8735,[366]3.8853,[367]3.8941,[368]3.9014,[369]3.9097,[370]3.9182,[371]3.9331,[372]3.9430,[373]3.9457,[374]3.9491,[375]3.9535,[376]3.9673,[377]3.9784,[378]3.9803,[379]3.9791,[380]3.9754,[381]3.9794,[382]3.9849,[383]3.9887,[384]3.9933,[385]3.9970,[386]4.0037,[387]4.0098,[388]4.0131,[389]4.0013,[390]3.9915,[391]3.9804,[392]3.9748,[393]3.9663,[394]3.9575,[395]3.9481,[396]3.9370,[397]3.9280,[398]3.9172,[399]3.9061,[400]3.8974,[401]3.8860,[402]3.8745,[403]3.8643,[404]3.8524,[405]3.8414,[406]3.8296,[407]3.8191,[408]3.8097,[409]3.8006,[410]3.7943,[411]3.7961,[412]3.7921,[413]3.7947,[414]3.7976,[415]3.7945,[416]3.7950,[417]3.7982,[418]3.7921,[419]3.7941,[420]3.7913,[421]3.7901,[422]3.7917,[423]3.7912,[424]3.7959,[425]3.7956,[426]3.7953,[427]3.7944,[428]3.7974,[429]3.7985,[430]3.8016,[431]3.8023,[432]3.8011,[433]3.7969,[434]3.7978,[435]3.7909,[436]3.7853,[437]3.7815,[438]3.7793,[439]3.7775,[440]3.7831,[441]3.7881,[442]3.7956,[443]3.7933,[444]3.7935,[445]3.7942,[446]3.7993,[447]3.8019,[448]3.8041,[449]3.8064,[450]3.8106,[451]3.8140,[452]3.8162,[453]3.8180,[454]3.8158,[455]3.8178,[456]3.8176,[457]3.8202,[458]3.8254,[459]3.8258,[460]3.8249,[461]3.8211,[462]3.8246,[463]3.8320,[464]3.8378,[465]3.8311,[466]3.8299,[467]3.8291,[468]3.8314,[469]3.8288,[470]3.8260,[471]3.8262,[472]3.8274,[473]3.8264,[474]3.8252,[475]3.8266,[476]3.8244,[477]3.8232,[478]3.8247,[479]3.8268,[480]3.8294,[481]3.8253,[482]3.8287,[483]3.8271,[484]3.8303,[485]3.8367,[486]3.8398,[487]3.8433,[488]3.8490,[489]3.8508,[490]3.8555,[491]3.8619,[492]3.8663,[493]3.8663,[494]3.8674,[495]3.8694,[496]3.8712,[497]3.8744,[498]3.8743,[499]3.8737,[500]3.8771,[501]3.8816,[502]3.8804,[503]3.8779,[504]3.8800,[505]3.8830,[506]3.8912,[507]3.8936,[508]3.8972,[509]3.8887,[510]3.8849,[511]3.8786,[512]3.8741,[513]3.8681,[514]3.8672,[515]3.8700,[516]3.8659,[517]3.8660,[518]3.8658,[519]3.8667,[520]3.8716,[521]3.8700,[522]3.8683,[523]3.8753,[524]3.8744,[525]3.8725,[526]3.8689,[527]3.8627,[528]3.8593,[529]3.8558,[530]3.8524,[531]3.8487,[532]3.8416,[533]3.8349,[534]3.8315,[535]3.8326,[536]3.8353,[537]3.8394,[538]3.8435,[539]3.8464,[540]3.8524,[541]3.8558,[542]3.8583,[543]3.8540,[544]3.8505,[545]3.8501,[546]3.8424,[547]3.8364,[548]3.8295,[549]3.8224,[550]3.8164,[551]3.8104,[552]3.8046,[553]3.7992,[554]3.7993,[555]3.7979,[556]3.8006,[557]3.8049,[558]3.8112,[559]3.8159,[560]3.8216,[561]3.8189,

Final estimate: PPL = 3.8189 +/- 0.02171

llama_print_timings: load time = 9779.02 ms

llama_print_timings: sample time = 0.00 ms / 1 runs ( 0.00 ms per token, inf tokens per second)

llama_print_timings: prompt eval time = 1940210.95 ms / 287232 tokens ( 6.75 ms per token, 148.04 tokens per second)

llama_print_timings: eval time = 0.00 ms / 1 runs ( 0.00 ms per token, inf tokens per second)

llama_print_timings: total time = 1943740.46 ms / 287233 tokens

## again with -ser 7,1

perplexity: tokenizing the input ..

perplexity: tokenization took 643.261 ms

perplexity: calculating perplexity over 561 chunks, n_ctx=512, batch_size=2048, n_seq=4

perplexity: 17.39 seconds per pass - ETA 40.65 minutes

[1]2.6392,[2]3.4663,[3]2.4744,[4]2.0865,[5]1.9050,[6]1.7817,[7]1.6767,[8]1.6264,[9]1.5874,[10]1.5396,[11]1.5359,[12]1.5994,[13]1.6198,[14]1.7544,[15]1.8973,[16]1.9543,[17]2.1251,[18]2.2555,[19]2.2165,[20]2.2059,[21]2.3162,[22]2.2807,[23]2.2487,[24]2.2607,[25]2.2276,[26]2.1968,[27]2.2454,[28]2.2572,[29]2.3090,[30]2.3405,[31]2.3812,[32]2.4012,[33]2.4438,[34]2.4915,[35]2.5495,[36]2.6048,[37]2.6393,[38]2.6890,[39]2.7297,[40]2.7933,[41]2.8382,[42]2.8479,[43]2.9002,[44]2.9142,[45]2.9968,[46]3.0486,[47]3.0113,[48]2.9637,[49]2.9420,[50]2.9654,[51]3.0145,[52]3.0313,[53]3.0853,[54]3.1012,[55]3.1321,[56]3.1682,[57]3.1823,[58]3.2248,[59]3.2321,[60]3.2823,[61]3.3229,[62]3.3765,[63]3.4111,[64]3.4569,[65]3.4644,[66]3.4514,[67]3.4316,[68]3.4678,[69]3.4693,[70]3.4852,[71]3.5018,[72]3.5164,[73]3.5284,[74]3.5502,[75]3.5286,[76]3.4770,[77]3.4378,[78]3.4341,[79]3.4135,[80]3.4004,[81]3.3619,[82]3.3706,[83]3.3457,[84]3.3122,[85]3.2805,[86]3.2571,[87]3.2615,[88]3.2350,[89]3.2276,[90]3.2025,[91]3.1788,[92]3.1552,[93]3.1294,[94]3.1079,[95]3.0899,[96]3.0916,[97]3.0997,[98]3.0887,[99]3.0710,[100]3.0725,[101]3.0650,[102]3.0820,[103]3.1103,[104]3.1317,[105]3.1281,[106]3.1544,[107]3.1789,[108]3.1998,[109]3.2355,[110]3.2700,[111]3.2921,[112]3.2632,[113]3.2498,[114]3.2292,[115]3.2128,[116]3.2061,[117]3.1829,[118]3.1616,[119]3.1423,[120]3.1206,[121]3.1059,[122]3.0852,[123]3.0665,[124]3.0471,[125]3.0289,[126]3.0109,[127]2.9971,[128]2.9924,[129]2.9836,[130]2.9734,[131]2.9656,[132]2.9724,[133]2.9806,[134]2.9854,[135]2.9966,[136]3.0146,[137]3.0308,[138]3.0382,[139]3.0493,[140]3.0483,[141]3.0475,[142]3.0444,[143]3.0431,[144]3.0362,[145]3.0261,[146]3.0228,[147]3.0255,[148]3.0242,[149]3.0242,[150]3.0166,[151]3.0126,[152]3.0077,[153]3.0019,[154]3.0012,[155]3.0044,[156]3.0049,[157]3.0096,[158]3.0182,[159]3.0192,[160]3.0282,[161]3.0365,[162]3.0456,[163]3.0515,[164]3.0728,[165]3.0971,[166]3.1149,[167]3.1290,[168]3.1550,[169]3.1779,[170]3.1994,[171]3.2232,[172]3.2041,[173]3.1846,[174]3.1711,[175]3.1587,[176]3.1460,[177]3.1348,[178]3.1216,[179]3.1073,[180]3.1105,[181]3.1247,[182]3.1406,[183]3.1551,[184]3.1695,[185]3.1793,[186]3.1961,[187]3.2114,[188]3.2263,[189]3.2365,[190]3.2364,[191]3.2432,[192]3.2455,[193]3.2494,[194]3.2696,[195]3.2793,[196]3.2925,[197]3.3020,[198]3.3051,[199]3.3105,[200]3.3081,[201]3.3239,[202]3.3179,[203]3.3232,[204]3.3256,[205]3.3260,[206]3.3277,[207]3.3366,[208]3.3465,[209]3.3566,[210]3.3560,[211]3.3497,[212]3.3488,[213]3.3563,[214]3.3575,[215]3.3631,[216]3.3630,[217]3.3574,[218]3.3565,[219]3.3566,[220]3.3549,[221]3.3545,[222]3.3541,[223]3.3547,[224]3.3597,[225]3.3615,[226]3.3520,[227]3.3506,[228]3.3522,[229]3.3562,[230]3.3624,[231]3.3685,[232]3.3590,[233]3.3520,[234]3.3549,[235]3.3552,[236]3.3645,[237]3.3734,[238]3.3831,[239]3.3936,[240]3.4023,[241]3.4141,[242]3.4296,[243]3.4427,[244]3.4513,[245]3.4632,[246]3.4738,[247]3.4713,[248]3.4672,[249]3.4649,[250]3.4570,[251]3.4537,[252]3.4549,[253]3.4578,[254]3.4644,[255]3.4702,[256]3.4732,[257]3.4754,[258]3.4757,[259]3.4781,[260]3.4798,[261]3.4804,[262]3.4783,[263]3.4841,[264]3.4862,[265]3.4857,[266]3.4876,[267]3.4900,[268]3.4939,[269]3.4968,[270]3.4949,[271]3.4925,[272]3.4846,[273]3.4856,[274]3.4794,[275]3.4687,[276]3.4590,[277]3.4607,[278]3.4718,[279]3.4774,[280]3.4855,[281]3.4927,[282]3.4986,[283]3.5056,[284]3.5126,[285]3.5268,[286]3.5292,[287]3.5318,[288]3.5360,[289]3.5381,[290]3.5297,[291]3.5227,[292]3.5246,[293]3.5242,[294]3.5236,[295]3.5216,[296]3.5240,[297]3.5254,[298]3.5305,[299]3.5374,[300]3.5404,[301]3.5446,[302]3.5470,[303]3.5480,[304]3.5461,[305]3.5583,[306]3.5655,[307]3.5769,[308]3.5646,[309]3.5591,[310]3.5501,[311]3.5548,[312]3.5580,[313]3.5652,[314]3.5670,[315]3.5698,[316]3.5707,[317]3.5720,[318]3.5722,[319]3.5725,[320]3.5769,[321]3.5770,[322]3.5785,[323]3.5849,[324]3.5853,[325]3.5900,[326]3.5948,[327]3.5986,[328]3.6009,[329]3.6023,[330]3.6085,[331]3.6134,[332]3.6180,[333]3.6159,[334]3.6149,[335]3.6146,[336]3.6140,[337]3.6145,[338]3.6145,[339]3.6167,[340]3.6202,[341]3.6257,[342]3.6349,[343]3.6448,[344]3.6498,[345]3.6419,[346]3.6350,[347]3.6328,[348]3.6249,[349]3.6209,[350]3.6193,[351]3.6241,[352]3.6398,[353]3.6486,[354]3.6622,[355]3.6711,[356]3.6768,[357]3.6890,[358]3.6995,[359]3.7026,[360]3.7092,[361]3.7183,[362]3.7276,[363]3.7332,[364]3.7395,[365]3.7463,[366]3.7577,[367]3.7663,[368]3.7733,[369]3.7814,[370]3.7902,[371]3.8046,[372]3.8141,[373]3.8168,[374]3.8200,[375]3.8245,[376]3.8377,[377]3.8488,[378]3.8510,[379]3.8499,[380]3.8463,[381]3.8505,[382]3.8562,[383]3.8599,[384]3.8644,[385]3.8682,[386]3.8749,[387]3.8807,[388]3.8838,[389]3.8723,[390]3.8624,[391]3.8519,[392]3.8461,[393]3.8373,[394]3.8284,[395]3.8192,[396]3.8083,[397]3.7990,[398]3.7885,[399]3.7776,[400]3.7689,[401]3.7578,[402]3.7465,[403]3.7367,[404]3.7251,[405]3.7145,[406]3.7033,[407]3.6934,[408]3.6843,[409]3.6751,[410]3.6690,[411]3.6709,[412]3.6667,[413]3.6695,[414]3.6725,[415]3.6699,[416]3.6702,[417]3.6727,[418]3.6666,[419]3.6682,[420]3.6656,[421]3.6645,[422]3.6661,[423]3.6656,[424]3.6699,[425]3.6693,[426]3.6695,[427]3.6686,[428]3.6715,[429]3.6730,[430]3.6760,[431]3.6770,[432]3.6760,[433]3.6721,[434]3.6730,[435]3.6666,[436]3.6609,[437]3.6574,[438]3.6554,[439]3.6539,[440]3.6591,[441]3.6641,[442]3.6716,[443]3.6695,[444]3.6698,[445]3.6709,[446]3.6760,[447]3.6784,[448]3.6809,[449]3.6835,[450]3.6875,[451]3.6911,[452]3.6935,[453]3.6950,[454]3.6930,[455]3.6951,[456]3.6949,[457]3.6973,[458]3.7023,[459]3.7026,[460]3.7022,[461]3.6982,[462]3.7019,[463]3.7091,[464]3.7150,[465]3.7085,[466]3.7072,[467]3.7065,[468]3.7085,[469]3.7060,[470]3.7033,[471]3.7035,[472]3.7045,[473]3.7038,[474]3.7026,[475]3.7040,[476]3.7021,[477]3.7011,[478]3.7019,[479]3.7039,[480]3.7062,[481]3.7024,[482]3.7060,[483]3.7046,[484]3.7080,[485]3.7146,[486]3.7175,[487]3.7210,[488]3.7266,[489]3.7286,[490]3.7332,[491]3.7393,[492]3.7437,[493]3.7435,[494]3.7445,[495]3.7468,[496]3.7485,[497]3.7517,[498]3.7516,[499]3.7509,[500]3.7546,[501]3.7590,[502]3.7577,[503]3.7556,[504]3.7581,[505]3.7609,[506]3.7694,[507]3.7719,[508]3.7754,[509]3.7672,[510]3.7628,[511]3.7567,[512]3.7522,[513]3.7464,[514]3.7458,[515]3.7487,[516]3.7445,[517]3.7447,[518]3.7440,[519]3.7449,[520]3.7497,[521]3.7481,[522]3.7462,[523]3.7527,[524]3.7515,[525]3.7499,[526]3.7462,[527]3.7402,[528]3.7371,[529]3.7336,[530]3.7307,[531]3.7272,[532]3.7204,[533]3.7139,[534]3.7102,[535]3.7115,[536]3.7145,[537]3.7184,[538]3.7216,[539]3.7244,[540]3.7301,[541]3.7338,[542]3.7364,[543]3.7313,[544]3.7275,[545]3.7269,[546]3.7196,[547]3.7134,[548]3.7066,[549]3.7000,[550]3.6942,[551]3.6884,[552]3.6828,[553]3.6777,[554]3.6773,[555]3.6761,[556]3.6787,[557]3.6829,[558]3.6891,[559]3.6938,[560]3.6994,[561]3.6968,

Final estimate: PPL = 3.6968 +/- 0.02105

llama_print_timings: load time = 10199.69 ms

llama_print_timings: sample time = 0.00 ms / 1 runs ( 0.00 ms per token, inf tokens per second)

llama_print_timings: prompt eval time = 2403207.35 ms / 287232 tokens ( 8.37 ms per token, 119.52 tokens per second)

llama_print_timings: eval time = 0.00 ms / 1 runs ( 0.00 ms per token, inf tokens per second)

llama_print_timings: total time = 2406766.55 ms / 287233 tokens

ubergarm IQ2_BN_R4