* Convolution bwd weight device implementation

* Merge branch 'grouped_conv_bwd_weight_device_impl_wmma' into 'feature/conv_bwd_weight_wmma'

Convolution bwd weight device implementation

See merge request amd/ai/composable_kernel!38

* Fix bug and disable splitK=-1 tests for wmma

* Add generic instances for bf16 f32 bf16

* check gridwise level validity in device impl for 1 stage D0

* Fix bugs in device implementation:

- rdna3 compilation error

- gridwise layouts (need to be correct to ensure that CheckValidity() works correctly)

* Add padding in conv to gemm transformers for 1x1Stride1Pad0 specialization

* Remove workaround for 1x1Stride1Pad0 conv specialization

* Add instances for xdl parity (for pipeline v1)

* Add two stage instances (xdl parity)

* Add multiple Ds instances

* Add examples

* Uncomment scale instances

* Fix copyright

* Fix examples compilation

* Add atomic add float4

* Fix compilation error

* Fix instances

* Compute tolerances in examples instead of using default ones

* Compute tolerances instead of using default ones in bilinear and scale tests

* Merge branch 'grouped_conv_bwd_weight_instances_examples' into 'feature/conv_bwd_weight_wmma'

Grouped conv: Instances and example bwd weight

See merge request amd/ai/composable_kernel!47

* Device implementation of explicit gemm for grouped conv bwd weight

Based on batched gemm multiple D

* Add instances for pipeline v1 and v3

* Add support for occupancy-based splitk

* Fix ckProfiler dependencies

* Review fixes

* Merge branch 'explicit_bwd_weight' into 'feature/conv_bwd_weight_wmma'

Device implementation of explicit gemm for grouped conv bwd weight

See merge request amd/ai/composable_kernel!52

* Fix cmake file for tests

* fix clang format

* fix instance factory error

* Adapt all grouped conv bwd weight vanilla Xdl instances to 16x16. MRepeat doubled for all but 12 of them (some static assert failure). Also added custom reduced profiler target for building grouped conv bwd weight vanilla only profiler. Verified with gtest test.

* Revert "Adapt all grouped conv bwd weight vanilla Xdl instances to 16x16. MRepeat doubled for all but 12 of them (some static assert failure). Also added custom reduced profiler target for building grouped conv bwd weight vanilla only profiler. Verified with gtest test."

This reverts commit da8e4cfb7917d45d46339ec74eb72e2f585f14cf.

* Disable splitk for 2stage xdl on rdna (bug to be fixed)

* Fix add_test_executable

* Always ForceThreadTileTransfer for now, WaveTileTransfer does not work for convolution yet.

* Grab device and gridwise files from bkp branch, this should enable splitK support for convolution and also we no longer ForceThreadTileTransfer for explicit gemm. Also grab some updates from 7e7243783008b11e904f127ecf1df55ef95e9af2 to fix building on clang20.

* Fix bug in various bwd wei device implementations / profiler where the occupancy based split_k value could not be found because the Argument did not derive from ArgumentSplitK, leading to incorrect error tolerances.

* Actually print the reason when a device implementation is not supported.

* Print number of valid instances in profiler and tests.

* Fix clang format for Two Stage implementation

* Fix copyright

* Address review comments

* Fix explicit conv bwd weight struct

* Fix gridwise common

* Fix gridwise ab scale

* Remove autodeduce 1 stage

* Restore example tolerance calculation

* Fix compilation error

* Fix gridwise common

* Fix gridwise gemm

* Fix typo

* Fix splitk

* Fix splitk ab scale

* Adapt all grouped conv bwd weight vanilla Xdl instances to 16x16. MRepeat doubled for all but 12 of them (some static assert failure). Also added custom reduced profiler target for building grouped conv bwd weight vanilla only profiler. Verified with gtest test.

* Reduce instances to only the tuned wmma V3 ones for implicit v1 intra and explicit v1 intra pad/nopad.

* Add explicit oddMN support with custom tuned instances

* Add two stage instances based on the parameters from the tuned cshuffle V3 instances. CShuffleBlockTransferScalarPerVector adapted to 4, and mergegroups fixed to 1 for now. No more special instance lists.

* Replace cshuffle non-v3 lists with v3 lists, making sure to not have duplications. Also removing stride1pad0 support for NHWGC since we can use explicit for those cases.

* Remove some instances that give incorrect results (f16 NHWGC)

* Add bf16 f32 bf16 instances based on tuned bf16 NHWGC GKYXC instances.

* Add back some generic instances to make sure we have the same shape / layout / datatype support as before the instance selection process.

* Add instances for scale and bilinear based on the bf16 NHWGC GKYXC tuning. Keep generic instances for support.

* Disable two stage f16 instances which produce incorrect results.

* Remove more instances which fail verification, for bf16_f32_bf16 and for f16 scale / bilinear.

* Disable all non-generic two-stage instances in the instance lists for NHWGC. They are never faster and support is already carried by CShuffleV3 and Explicit.

* Remove unused instance lists and related add_x_instance() functions, fwd declarations, cmakelists entries. Also merge the "wmma" and "wmma v3" instance list files, which are both v3.

* Re-enable all xdl instances (un-16x16-adapted) and dl instances. Remove custom ckProfiler target.

* Remove straggler comments

* Remove [[maybe_unused]]

* Fix clang format

* Remove unwanted instances. This includes all instances which are not NHWGCxGKYXC and F16 or BF16 (no mixed in-out types).

* Add comment

---------

Co-authored-by: kiefer <kiefer.van.teutem@streamhpc.com>

Co-authored-by: Kiefer van Teutem <50830967+krithalith@users.noreply.github.com>

[ROCm/composable_kernel commit: 87dd073887]

Composable Kernel

Note

The published documentation is available at Composable Kernel in an organized, easy-to-read format, with search and a table of contents. The documentation source files reside in the docs folder of this repository. As with all ROCm projects, the documentation is open source. For more information on contributing to the documentation, see Contribute to ROCm documentation.

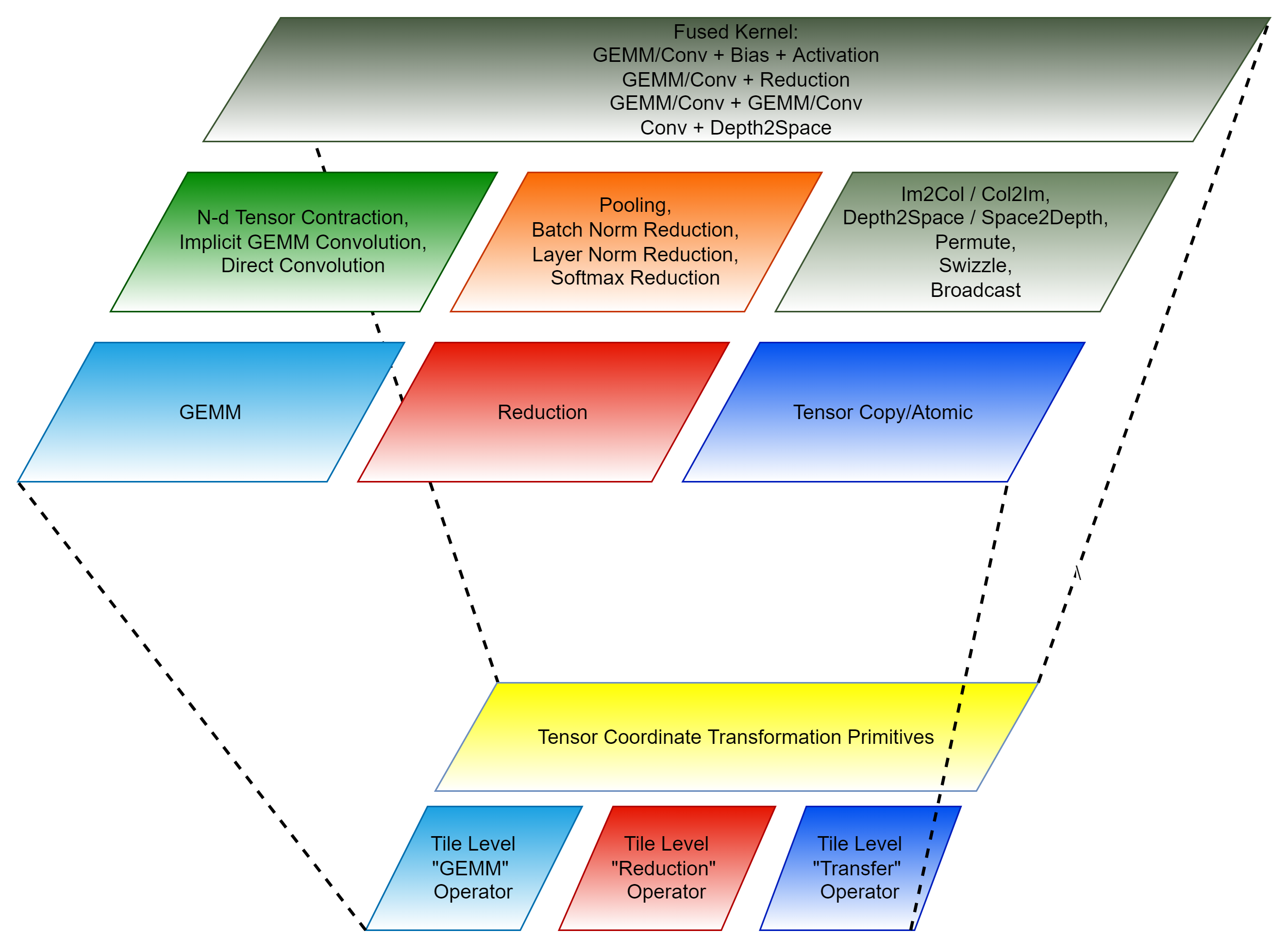

The Composable Kernel (CK) library provides a programming model for writing performance-critical kernels for machine learning workloads across multiple architectures (GPUs, CPUs, etc.). The CK library uses general purpose kernel languages, such as HIP C++.

CK uses two concepts to achieve performance portability and code maintainability:

- A tile-based programming model

- Algorithm complexity reduction for complex machine learning (ML) operators. This uses an innovative technique called Tensor Coordinate Transformation.

The current CK library is structured into four layers:

- Templated Tile Operators

- Templated Kernel and Invoker

- Instantiated Kernel and Invoker

- Client API

General information

- CK supported operations

- CK Tile supported operations

- CK wrapper

- CK codegen

- CK profiler

- Examples (Custom use of CK supported operations)

- Client examples (Use of CK supported operations with instance factory)

- Terminology

- Contributors

CK is released under the MIT license.

Building CK

We recommend building CK inside Docker containers, which include all necessary packages. Pre-built Docker images are available on DockerHub.

- To build a new Docker image, use the Dockerfile provided with the source code:

  DOCKER_BUILDKIT=1 docker build -t ck:latest -f Dockerfile .

- Launch the Docker container:

  docker run \
    -it \
    --privileged \
    --group-add sudo \
    -w /root/workspace \
    -v ${PATH_TO_LOCAL_WORKSPACE}:/root/workspace \
    ck:latest \
    /bin/bash

- Clone CK source code from the GitHub repository and start the build:

  git clone https://github.com/ROCm/composable_kernel.git && \
  cd composable_kernel && \
  mkdir build && \
  cd build

  You must set the GPU_TARGETS macro to specify the GPU target architecture(s) you want to run CK on. You can specify single or multiple architectures. If you specify multiple architectures, use a semicolon between each; for example, gfx908;gfx90a;gfx942.

  cmake \
    -D CMAKE_PREFIX_PATH=/opt/rocm \
    -D CMAKE_CXX_COMPILER=/opt/rocm/bin/hipcc \
    -D CMAKE_BUILD_TYPE=Release \
    -D GPU_TARGETS="gfx908;gfx90a" \
    ..

  If you don't set GPU_TARGETS on the cmake command line, CK is built for all GPU targets supported by the current compiler (this may take a long time). Tests and examples are only built if GPU_TARGETS is set on the cmake command line.

  NOTE: If you set GPU_TARGETS to a list of architectures, the build only works if the architectures are similar, e.g., gfx908;gfx90a, or gfx1100;gfx1101;gfx1102. Otherwise, to build the library for a list of different architectures, use the GPU_ARCHS build argument, for example GPU_ARCHS=gfx908;gfx1030;gfx1100;gfx942.

  Convenience script for development builds:

  Alternatively, you can use the provided convenience script script/cmake-ck-dev.sh, which automatically configures CK for development with sensible defaults. In the build directory:

  ../script/cmake-ck-dev.sh

  This script:

  - Cleans CMake cache files before configuring

  - Sets BUILD_DEV=ON for development mode

  - Defaults to GPU targets: gfx908;gfx90a;gfx942

  - Enables verbose makefile output

  - Sets additional compiler flags for better error messages

  By default, it considers the parent directory to be the project source directory.

  You can specify the source directory as the first argument. You can specify custom GPU targets (semicolon-separated) as the second argument:

  ../script/cmake-ck-dev.sh .. gfx1100

  Or pass additional cmake arguments:

  ../script/cmake-ck-dev.sh .. gfx90a -DCMAKE_BUILD_TYPE=Release

- Build the entire CK library:

  make -j"$(nproc)"

- Install CK:

  make -j install

Optional post-install steps

- Build examples and tests:

  make -j examples tests

- Build and run all examples and tests:

  make -j check

  You can find instructions for running each individual example in example.

- Build and run smoke/regression examples and tests:

  make -j smoke # tests and examples that run for < 30 seconds each
  make -j regression # tests and examples that run for >= 30 seconds each

- Build ckProfiler:

  make -j ckProfiler

  You can find instructions for running ckProfiler in profiler.

- Build our documentation locally:

  cd docs
  pip3 install -r sphinx/requirements.txt
  python3 -m sphinx -T -E -b html -d _build/doctrees -D language=en . _build/html

Notes

The -j option builds with multiple threads in parallel, which speeds up the build significantly. However, -j without a number launches an unlimited number of threads, which can cause the build to run out of memory and crash. On average, you should expect each thread to use ~2 GB of RAM. Depending on the number of CPU cores and the amount of RAM on your system, you may want to limit the number of threads. For example, if you have a 128-core CPU and 128 GB of RAM, it's advisable to use -j32.
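The sizing advice above can be turned into a small helper. The following is a minimal sketch, not something shipped with CK: the function name ck_jobs is hypothetical, and the budget of 4 GB per job (the ~2 GB average times a safety factor of 2) is an assumption chosen so that the 128-core, 128 GB example yields 32.

```shell
# Hypothetical helper (not part of CK): suggest a -j value from the core
# count and the installed RAM in GB.
# Assumption: budget 4 GB per job, i.e. the ~2 GB average with a safety
# factor of 2 as headroom for memory-heavy translation units.
# The body runs in a subshell so its variables do not leak to the caller.
ck_jobs() (
    cores=$1
    ram_gb=$2
    gb_per_job=4
    jobs=$(( ram_gb / gb_per_job ))
    # Never use more jobs than there are cores.
    if [ "$jobs" -gt "$cores" ]; then jobs=$cores; fi
    # Always allow at least one job.
    if [ "$jobs" -lt 1 ]; then jobs=1; fi
    echo "$jobs"
)

# Example: 128 cores and 128 GB of RAM
ck_jobs 128 128   # prints 32
```

The result can then be passed to make, for example make -j"$(ck_jobs "$(nproc)" 128)" on a machine with 128 GB of RAM.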

Additional cmake flags can be used to significantly speed up the build:

- DTYPES (default is not set) can be set to any subset of "fp64;fp32;tf32;fp16;fp8;bf16;int8" to build instances of select data types only. The main default data types are fp32 and fp16; you can safely skip other data types.

- DISABLE_DL_KERNELS (default is OFF) must be set to ON in order not to build instances such as gemm_dl or batched_gemm_multi_d_dl. These instances are useful on architectures like the NAVI2x, as most other platforms have faster instances, such as xdl or wmma, available.

- DISABLE_DPP_KERNELS (default is OFF) must be set to ON in order not to build instances such as gemm_dpp. These instances offer slightly better performance for fp16 gemms on NAVI2x, but on other architectures faster alternatives are available.

- CK_USE_FP8_ON_UNSUPPORTED_ARCH (default is OFF) must be set to ON in order to build instances such as gemm_universal, gemm_universal_streamk and gemm_multiply_multiply for the fp8 data type on GPU targets that do not have native fp8 support, such as gfx908 or gfx90a. These instances are useful on architectures like the MI100/MI200 for functional support only.
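As an illustration, several of the flags above can be combined on one cmake command line. The sketch below only assembles and prints the command rather than running it, so it works without a ROCm installation. The flag names come from the list above; the values (fp32 and fp16 only, DL and DPP kernels disabled, a single gfx90a target) are an example configuration, not a recommendation from the CK authors, and the function name ck_cmake_cmd is hypothetical.

```shell
# Hypothetical helper: print a cmake command that builds fewer instances.
# The values below are example choices; adjust them to your hardware.
ck_cmake_cmd() {
    printf '%s ' cmake \
        -D CMAKE_PREFIX_PATH=/opt/rocm \
        -D CMAKE_CXX_COMPILER=/opt/rocm/bin/hipcc \
        -D CMAKE_BUILD_TYPE=Release \
        -D 'GPU_TARGETS="gfx90a"' \
        -D 'DTYPES="fp32;fp16"' \
        -D DISABLE_DL_KERNELS=ON \
        -D DISABLE_DPP_KERNELS=ON \
        ..
    printf '\n'
}

# Print the command so it can be reviewed before running it in the build directory.
ck_cmake_cmd
```

The quotes around the semicolon-separated values are kept in the printed command because an unquoted semicolon would otherwise end the command in the shell.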

Using sccache for building

The default CK Docker images come with a pre-installed version of sccache, which supports clang being used as hip-compiler (" -x hip"). Using sccache can help reduce the time to re-build code from hours to 1-2 minutes. In order to invoke sccache, you need to run:

sccache --start-server

then add the following flags to the cmake command line:

-DCMAKE_HIP_COMPILER_LAUNCHER=sccache -DCMAKE_CXX_COMPILER_LAUNCHER=sccache -DCMAKE_C_COMPILER_LAUNCHER=sccache

You may need to clean up the build folder and repeat the cmake and make steps in order to take advantage of the sccache during subsequent builds.
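Put together, the sccache setup might look like the sketch below. It assumes sccache is on the PATH (as in the default CK Docker images) and skips the server start otherwise. The helper name launcher_flags is hypothetical; it only prints the three launcher flags listed above so they can be appended to the cmake command line.

```shell
# Hypothetical helper: print the compiler-launcher flags for a given
# launcher (sccache here), one flag per language: HIP, C++ and C.
launcher_flags() {
    for lang in HIP CXX C; do
        printf '%sCMAKE_%s_COMPILER_LAUNCHER=%s ' -D "$lang" "$1"
    done
    printf '\n'
}

# Start the server only if sccache is installed; ignore the error that is
# reported when a server is already running.
if command -v sccache >/dev/null 2>&1; then
    sccache --start-server || true
fi

# Print the flags to append to the cmake command line.
launcher_flags sccache
```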

Using CK as pre-built kernel library

You can find instructions for using CK as a pre-built kernel library in client_example.

Contributing to CK

When you contribute to CK, make sure you run clang-format on all changed files. We highly recommend using git hooks that are managed by the pre-commit framework. To install hooks, run:

sudo script/install_precommit.sh

With this approach, pre-commit adds the appropriate hooks to your local repository and automatically runs clang-format (and possibly additional checks) before any commit is created.

If you need to uninstall hooks from the repository, you can do so by running the following command:

script/uninstall_precommit.sh

If you need to temporarily disable pre-commit hooks, you can add the --no-verify option to the git commit command.