## Motivation

Profiling with `-ftime-trace` on representative translation units (e.g., `device_grouped_conv2d_fwd_xdl_nhwgc_gkyxc_nhwgk_f16_comp_instance.cpp`) revealed that **92% of frontend time was spent in template instantiation**. The primary bottleneck was redundant instantiation of identical helper logic across multiple threadwise transfer class variants.

Each `ThreadwiseTensorSliceTransfer_v*` class independently contained its own copy of the same helper computations (serpentine traversal, coordinate stepping, thread scratch descriptors, lambda-like functors, and compile-time constants), duplicated across 13 header files. When a typical GEMM or convolution kernel TU includes blockwise operations (e.g., `blockwise_gemm_xdlops.hpp`), it pulls in multiple transfer variants simultaneously, causing the compiler to instantiate the same helper logic multiple times with the same template arguments.

This was compounded by the helpers being defined as members of the outer `ThreadwiseTensorSliceTransfer_v*` classes, which carry 14+ template parameters. Functions like `ComputeForwardSweep` depend only on their two argument types, but as inline members of the outer class, the compiler was forced to create separate instantiations for every unique combination of all outer parameters (data types, descriptors, vector widths, etc.), even when most of those parameters had no effect on the helper's output.

## Technical Details

### The Fix: Shared Helper Struct Hierarchy

Duplicated logic was extracted into a standalone helper hierarchy in `threadwise_tensor_slice_transfer_util.hpp`:

```
ThreadwiseTransferHelper_Base           (I0..I16, MoveSliceWindow, ComputeThreadScratchDescriptor,
|                                        ComputeForwardSteps, ComputeBackwardSteps, MakeVectorContainerTuple)
+-- ThreadwiseTransferHelper_Serpentine (ComputeForwardSweep, ComputeMoveOnDim, ComputeDataIndex,
|                                        ComputeCoordinateResetStep, VectorSizeLookupTable, VectorOffsetsLookupTable)
+-- ThreadwiseTransferHelper_SFC        (ComputeSFCCoordinateResetStep)
```

Each helper method is now parameterized **only by what it actually uses**:

- `ComputeForwardSweep(idx, lengths)`: parameterized only by the two argument types, not by `SrcData`, `DstData`, `SrcDesc`, etc.
- `ComputeForwardSteps(desc, scalar_per_access)`: parameterized only by the descriptor and access sequence types.
- `ComputeCoordinateResetStep<SliceLengths, VectorDim, ScalarPerVector, DimAccessOrder>()`: parameterized only by the four values it actually needs.

This reduces template instantiation work in two ways:

1. **Across different transfer variants** (v3r1 vs v3r2 vs v3r1_gather): the compiler reuses a single instantiation instead of creating one per variant.
2. **Across different outer class instantiations** (fp16 vs bf16 vs int8): the compiler reuses the helper instantiation because the helper doesn't depend on the data type at all.

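The reuse across outer class instantiations can be observed in a small standalone experiment. The sketch below is not CK code: `SharedHelper`, `TransferBefore`, `TransferAfter`, and `instantiation_tag` are hypothetical names, and the three outer template parameters stand in for the 14+ parameters of the real classes. Each instantiation of `instantiation_tag` owns one function-local static object, so comparing addresses shows whether two call sites share an instantiation.

```cpp
#include <cassert>

// Hypothetical helper (not CK's actual code): a non-template struct whose
// member template depends only on its two argument types.
struct SharedHelper
{
    template <typename Idx, typename Lengths>
    static const void* instantiation_tag(const Idx&, const Lengths&)
    {
        static char tag; // exactly one object per (Idx, Lengths) instantiation
        return &tag;
    }
};

// "Before": the helper is a member of the outer class template, so every
// distinct combination of outer parameters gets its own copy, even though
// the body never uses SrcData, DstData, or ScalarPerVector.
template <typename SrcData, typename DstData, int ScalarPerVector>
struct TransferBefore
{
    template <typename Idx, typename Lengths>
    static const void* instantiation_tag(const Idx&, const Lengths&)
    {
        static char tag; // one object per (outer parameters, Idx, Lengths)
        return &tag;
    }
};

// "After": the outer class only forwards to the shared helper.
template <typename SrcData, typename DstData, int ScalarPerVector>
struct TransferAfter
{
    template <typename Idx, typename Lengths>
    static const void* instantiation_tag(const Idx& idx, const Lengths& lengths)
    {
        return SharedHelper::instantiation_tag(idx, lengths);
    }
};

// Two outer instantiations that differ only in parameters the helper ignores.
using BeforeA = TransferBefore<float, float, 4>;
using BeforeB = TransferBefore<double, double, 8>;
using AfterA  = TransferAfter<float, float, 4>;
using AfterB  = TransferAfter<double, double, 8>;

// True when the member-style helper was instantiated once per outer class.
inline bool member_helpers_are_duplicated()
{
    return BeforeA::instantiation_tag(0, 0L) != BeforeB::instantiation_tag(0, 0L);
}

// True when both outer classes end up in the same helper instantiation.
inline bool shared_helper_is_reused()
{
    return AfterA::instantiation_tag(0, 0L) == AfterB::instantiation_tag(0, 0L);
}
```

Both `member_helpers_are_duplicated()` and `shared_helper_is_reused()` return `true` here. The experiment only counts distinct instantiations; it says nothing about how much compile time each one costs, which is what the `-ftime-trace` profile measures.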
### Refactored Headers

**13 headers** now delegate to the shared helpers instead of duplicating logic:

- Serpentine family: v3r1, v3r2, v3r1_gather, v3r1_dequant
- SFC family: v6r1, v6r1r2, v6r2, v6r3, v7r2, v7r3, v7r3_scatter
- Dead code removed: v4r1, v5r1

### Additional Fixes Found During Refactoring

- Two latent bugs in v3r2 (`forward_sweep` indexing, `GetDstCoordinateResetStep` extraction)
- Dead `SrcCoordStep` variables in v4r1 and v5r1
- Unused `scale_element_op_` member in v3r1_dequant (restored with note)

### Net Code Change

+1,428 / -2,297 lines (~870 lines removed).

## Test Plan

### Unit Tests

28 host-side gtests in `test/threadwise_transfer_helper/test_threadwise_transfer_helper.cpp` covering the full helper hierarchy:

| Suite | Tests | What is verified |
|-------|-------|------------------|
| ThreadwiseTransferHelperBase | 6 | Compile-time constants, inheritance, `MoveSliceWindow` with `ResetCoordinateAfterRun` true/false in 2D and 3D |
| ThreadwiseTransferHelperSerpentine | 9 | `ComputeForwardSweep` (even/odd row, 1D), `ComputeMoveOnDim` (inner complete/incomplete), `ComputeDataIndex`, `ComputeCoordinateResetStep`, `VectorSizeLookupTable`, `VectorOffsetsLookupTable` |
| ThreadwiseTransferHelperSFC | 6 | `ComputeSFCCoordinateResetStep`: single access, 2D row-major, 2D column-major, 3D batch, even/odd inner access counts |
| ThreadwiseTransferHelperInheritance | 3 | Serpentine and SFC derive from Base and are not related to each other |
| DetailFunctors | 4 | `lambda_scalar_per_access`, `lambda_scalar_step_in_vector`, `lambda_scalar_per_access_for_src_and_dst` (same dim, different dims) |

### Semantic Equivalence

GPU ISA comparison using `--cuda-device-only -S` confirmed identical assembly output (modulo `__hip_cuid_*` metadata) between baseline and refactored code.

## Test Results

All measurements on a 384-core machine, `-j64`, freshly rebooted, near-idle.

### Targeted Builds (affected targets only)

| Target | Baseline | Refactored | Wall-clock Delta | CPU Delta |
|--------|----------|------------|------------------|-----------|
| `device_grouped_conv2d_fwd_instance` (160 TUs) | 7m 37s / 189m CPU | 6m 53s / 161m CPU | **-9.7%** | **-14.9%** |
| `device_grouped_conv3d_fwd_instance` (185 TUs) | 9m 49s / 202m CPU | 6m 42s / 182m CPU | **-31.8%** | **-10.0%** |
| **Combined** | **17m 27s / 392m CPU** | **13m 35s / 344m CPU** | **-22.2%** | **-12.4%** |

### Full Project Build (8,243 targets)

| Metric | Baseline | Refactored | Delta |
|--------|----------|------------|-------|
| Wall-clock | 103m 38s | 111m 56s | +8.0%* |
| CPU time | 4705m 7s | 4648m 17s | **-1.2%** |

\*Wall-clock inflated by external load spike during refactored build (load 90 vs 66). CPU time is the reliable metric.

### Context

~15% of all build targets (1,262 / 8,243) transitively include the modified headers. These are primarily GEMM and convolution kernel instantiations, the core compute workloads. The 12-15% CPU savings on affected targets is diluted to 1.2% across the full project because 85% of targets are unaffected.

## Submission Checklist

- [ ] Look over the contributing guidelines at https://github.com/ROCm/ROCm/blob/develop/CONTRIBUTING.md#pull-requests.

---------

Co-authored-by: Claude Opus 4.6 <noreply@anthropic.com>

Composable Kernel

Note

The published documentation is available at Composable Kernel in an organized, easy-to-read format, with search and a table of contents. The documentation source files reside in the docs folder of this repository. As with all ROCm projects, the documentation is open source. For more information on contributing to the documentation, see Contribute to ROCm documentation.

The Composable Kernel (CK) library provides a programming model for writing performance-critical kernels for machine learning workloads across multiple architectures (GPUs, CPUs, etc.). The CK library uses general purpose kernel languages, such as HIP C++.

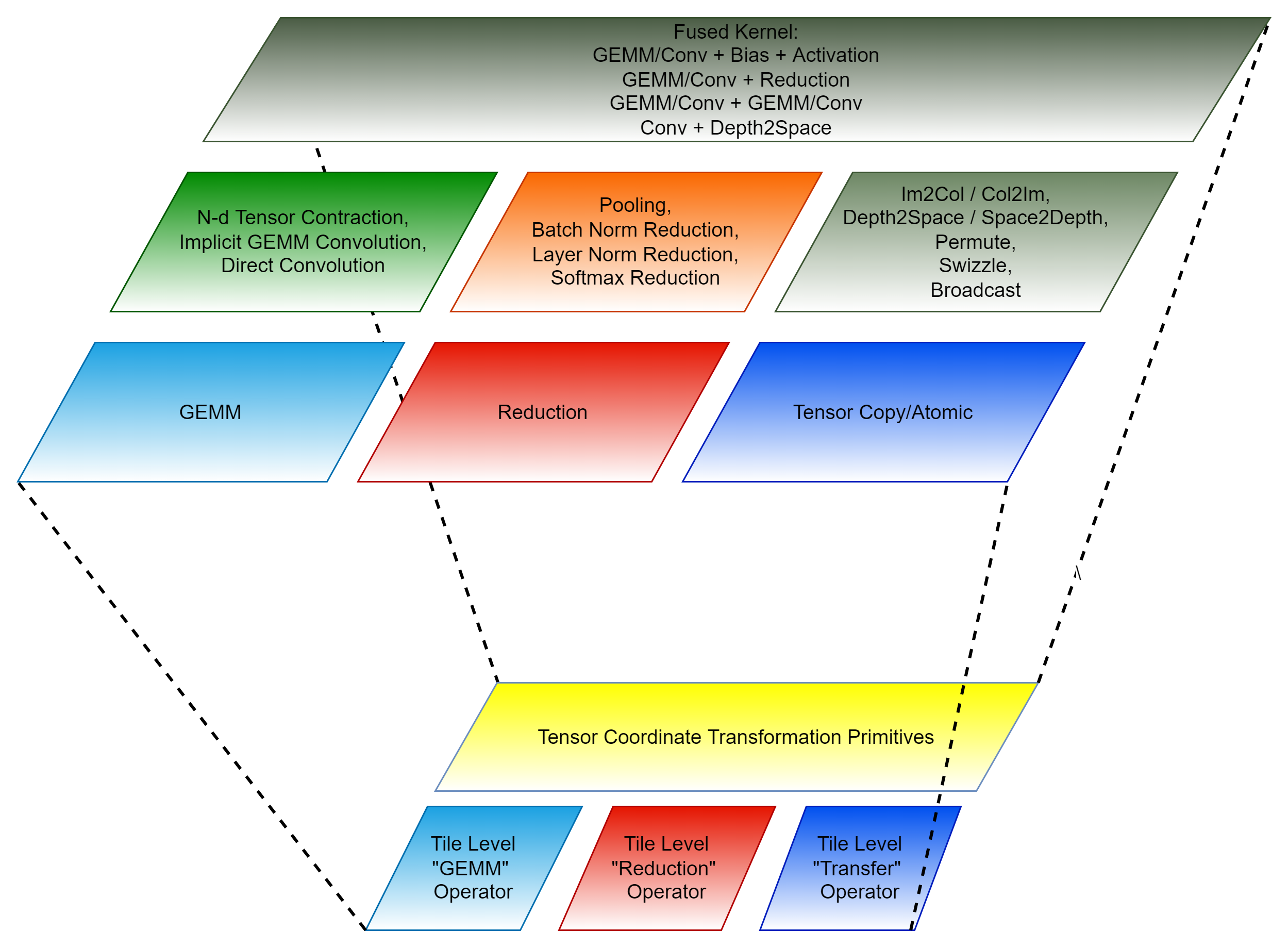

CK uses two concepts to achieve performance portability and code maintainability:

- A tile-based programming model

- Algorithm complexity reduction for complex machine learning (ML) operators. This uses an innovative technique called Tensor Coordinate Transformation.

The current CK library is structured into four layers:

- Templated Tile Operators

- Templated Kernel and Invoker

- Instantiated Kernel and Invoker

- Client API

General information

- CK supported operations

- CK Tile supported operations

- CK wrapper

- CK codegen

- CK profiler

- Examples (Custom use of CK supported operations)

- Client examples (Use of CK supported operations with instance factory)

- Terminology

- Contributors

CK is released under the MIT license.

Building CK

We recommend building CK inside Docker containers, which include all necessary packages. Pre-built Docker images are available on DockerHub.

- To build a new Docker image, use the Dockerfile provided with the source code:

      DOCKER_BUILDKIT=1 docker build -t ck:latest -f Dockerfile .

- Launch the Docker container:

      docker run \
          -it \
          --privileged \
          --group-add sudo \
          -w /root/workspace \
          -v ${PATH_TO_LOCAL_WORKSPACE}:/root/workspace \
          ck:latest \
          /bin/bash

- Clone CK source code from the GitHub repository and start the build:

      git clone https://github.com/ROCm/composable_kernel.git && \
          cd composable_kernel && \
          mkdir build && \
          cd build

  You must set the GPU_TARGETS macro to specify the GPU target architecture(s) you want to run CK on. You can specify single or multiple architectures. If you specify multiple architectures, use a semicolon between each; for example, gfx908;gfx90a;gfx942.

      cmake \
          -D CMAKE_PREFIX_PATH=/opt/rocm \
          -D CMAKE_CXX_COMPILER=/opt/rocm/bin/hipcc \
          -D CMAKE_BUILD_TYPE=Release \
          -D GPU_TARGETS="gfx908;gfx90a" \
          ..

  If you don't set GPU_TARGETS on the cmake command line, CK is built for all GPU targets supported by the current compiler (this may take a long time). Tests and examples are only built if GPU_TARGETS is set on the cmake command line.

  NOTE: If you set GPU_TARGETS to a list of architectures, the build only works if the architectures are similar, e.g., gfx908;gfx90a or gfx1100;gfx1101;gfx1102. To build the library for a list of different architectures, use the GPU_ARCHS build argument instead, for example GPU_ARCHS=gfx908;gfx1030;gfx1100;gfx942.

  Convenience script for development builds:

  Alternatively, you can use the provided convenience script script/cmake-ck-dev.sh, which automatically configures CK for development with sensible defaults. In the build directory:

      ../script/cmake-ck-dev.sh

  This script:

  - Cleans CMake cache files before configuring
  - Sets BUILD_DEV=ON for development mode
  - Defaults to GPU targets: gfx908;gfx90a;gfx942
  - Enables verbose makefile output
  - Sets additional compiler flags for better error messages

  By default, it considers the parent directory to be the project source directory.

  You can specify the source directory as the first argument. You can specify custom GPU targets (semicolon-separated) as the second argument:

      ../script/cmake-ck-dev.sh .. gfx1100

  Or pass additional cmake arguments:

      ../script/cmake-ck-dev.sh .. gfx90a -DCMAKE_BUILD_TYPE=Release

- Build the entire CK library:

      make -j"$(nproc)"

- Install CK:

      make -j install

Building for Windows

Install TheRock and run CMake configure as

    cmake \
        -D CMAKE_PREFIX_PATH="C:/dist/TheRock" \
        -D CMAKE_CXX_COMPILER="C:/dist/TheRock/bin/hipcc.exe" \
        -D CMAKE_BUILD_TYPE=Release \
        -D GPU_TARGETS="gfx1151" \
        -G Ninja \
        ..

Use Ninja to build either the whole library or individual targets.

Optional post-install steps

- Build examples and tests:

      make -j examples tests

- Build and run all examples and tests:

      make -j check

  You can find instructions for running each individual example in example.

- Build and run smoke/regression examples and tests:

      make -j smoke # tests and examples that run for < 30 seconds each
      make -j regression # tests and examples that run for >= 30 seconds each

- Build ckProfiler:

      make -j ckProfiler

  You can find instructions for running ckProfiler in profiler.

- Build our documentation locally:

      cd docs
      pip3 install -r sphinx/requirements.txt
      python3 -m sphinx -T -E -b html -d _build/doctrees -D language=en . _build/html

Notes

The -j option builds with multiple threads in parallel, which speeds up the build significantly. However, -j without a number launches an unlimited number of threads, which can cause the build to run out of memory and crash. On average, you should expect each thread to use ~2 GB of RAM. Depending on the number of CPU cores and the amount of RAM on your system, you may want to limit the number of threads. For example, if you have a 128-core CPU and 128 GB of RAM, it's advisable to use -j32.

Additional cmake flags can be used to significantly speed up the build:

- DTYPES (default is not set) can be set to any subset of "fp64;fp32;tf32;fp16;fp8;bf16;int8" to build instances of select data types only. The main default data types are fp32 and fp16; you can safely skip other data types.

- DISABLE_DL_KERNELS (default is OFF) must be set to ON in order not to build instances, such as gemm_dl or batched_gemm_multi_d_dl. These instances are useful on architectures like the NAVI2x, as most other platforms have faster instances, such as xdl or wmma, available.

- DISABLE_DPP_KERNELS (default is OFF) must be set to ON in order not to build instances, such as gemm_dpp. These instances offer a slightly better performance of fp16 gemms on NAVI2x. But on other architectures faster alternatives are available.

- CK_USE_FP8_ON_UNSUPPORTED_ARCH (default is OFF) must be set to ON in order to build instances, such as gemm_universal, gemm_universal_streamk and gemm_multiply_multiply for fp8 data type for GPU targets which do not have native support for fp8 data type, such as gfx908 or gfx90a. These instances are useful on architectures like the MI100/MI200 for the functional support only.

Using sccache for building

The default CK Docker images come with a pre-installed version of sccache, which supports clang being used as hip-compiler (" -x hip"). Using sccache can help reduce the time to re-build code from hours to 1-2 minutes. In order to invoke sccache, you need to run:

sccache --start-server

then add the following flags to the cmake command line:

-DCMAKE_HIP_COMPILER_LAUNCHER=sccache -DCMAKE_CXX_COMPILER_LAUNCHER=sccache -DCMAKE_C_COMPILER_LAUNCHER=sccache

You may need to clean up the build folder and repeat the cmake and make steps in order to take advantage of the sccache during subsequent builds.

Using CK as pre-built kernel library

You can find instructions for using CK as a pre-built kernel library in client_example.

Contributing to CK

When you contribute to CK, make sure you run clang-format on all changed files. We highly recommend using git hooks that are managed by the pre-commit framework. To install hooks, run:

sudo script/install_precommit.sh

With this approach, pre-commit adds the appropriate hooks to your local repository and automatically runs clang-format (and possibly additional checks) before any commit is created.

If you need to uninstall hooks from the repository, you can do so by running the following command:

script/uninstall_precommit.sh

If you need to temporarily disable pre-commit hooks, you can add the --no-verify option to the git commit command.