[CK] Optimize multi-dimensional static for loop decomposition (#4447)

## Motivation

Recursive template implementations might initially seem attractive because they minimize the amount of code to write. Unfortunately, this style often hurts readability and requires significant compiler resources to generate instantiation chains. In "high-traffic" code (e.g., code used in many places and compilation units), this generally does not scale well and can inflate overall compile times unnecessarily.

The aim of this PR is to take some of the most high-traffic utility code and eliminate recursive templates where possible in favor of fold expansions and constexpr function helpers.

In local tests with Clang Build Analyzer, device_grouped_conv2d_fwd_xdl_ngchw_gkcyx_ngkhw_f16_16x16_instance.cpp showed high hit rates on slow template instantiations in static_for and dimensional static_for (static_ford), which are in turn affected by the implementation of the Sequence class and its associated transforms.

Example:

**** Templates that took longest to instantiate:
70111 ms: ck::detail::applier<int, 0, 1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 1... (372 times, avg 188 ms) // **70 seconds!**

The above is part of the implementation of static_for, which uses Sequence classes.
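To make the contrast in the motivation concrete, the following is a minimal sketch of a compile-time loop written first recursively and then with a fold expression. All names (`fold_sketch`, `recursive_for`, `flat_for`) are hypothetical and chosen for illustration; this is not CK's actual `static_for` implementation, and it assumes a C++17 compiler.

```cpp
#include <cassert>
#include <cstddef>
#include <type_traits>
#include <utility>

// Hypothetical names for illustration only; not CK's static_for.
namespace fold_sketch {

// Recursive style: each iteration instantiates the next specialization,
// so the instantiation chain grows with N.
template <std::size_t I, std::size_t N>
struct recursive_for
{
    template <typename F>
    constexpr void operator()(F&& f) const
    {
        if constexpr(I < N)
        {
            f(std::integral_constant<std::size_t, I>{});
            recursive_for<I + 1, N>{}(f);
        }
    }
};

// Fold-expression style: one pack expansion over an index sequence,
// so the instantiation depth does not grow with N.
template <typename F, std::size_t... Is>
constexpr void flat_for_impl(F&& f, std::index_sequence<Is...>)
{
    (f(std::integral_constant<std::size_t, Is>{}), ...);
}

template <std::size_t N, typename F>
constexpr void flat_for(F&& f)
{
    flat_for_impl(f, std::make_index_sequence<N>{});
}

// Both variants visit 0..N-1 in order and pass the index as a compile-time constant.
constexpr std::size_t sum_recursive()
{
    std::size_t total = 0;
    recursive_for<0, 5>{}([&](auto i) { total += decltype(i)::value; });
    return total;
}

constexpr std::size_t sum_flat()
{
    std::size_t total = 0;
    flat_for<5>([&](auto i) { total += decltype(i)::value; });
    return total;
}

static_assert(sum_recursive() == 10);
static_assert(sum_flat() == 10);

// Runtime use works the same way.
inline void run_checks() { assert(sum_recursive() == sum_flat()); }

} // namespace fold_sketch
```

The two loops are equivalent from the caller's point of view; the difference is only in how many template specializations the compiler has to create and how deeply they nest.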
## Technical Details

### Summary of Optimization Techniques

| Technique | Used In | Benefit |
|-----------|---------|---------|
| __Constexpr for-loop computation__ | sequence_reverse_inclusive_scan, sequence_map_inverse | Moves O(N) work from template instantiation to constexpr evaluation |
| __Pack expansion with indexing__ | sequence_reverse, Sequence::Modify | Single template instantiation instead of recursive |
| __Flat iteration + decomposition__ | ford, static_ford | O(1) template depth instead of O(N^D) |
| __Pre-computed strides__ | index_decomposer | Enables O(1) linear-to-multi-index conversion |

### Impact on Compile Time

These optimizations reduce template instantiation depth from O(N) or O(N^D) to O(1), which:

1. Reduces compiler memory usage
2. Reduces compile time, most of all for deep instantiation chains
3. Enables larger iteration spaces without hitting template depth limits

## Test Plan

* Existing tests for Sequence are re-used to affirm correctness
* Unit tests for ford and static_ford are added (dimensional looping)
* 8 new regression tests specifically verify the fixes for the PR feedback:
  - `NonTrivialOrder3D_201` - Tests Orders<2,0,1> for static_ford
  - `NonTrivialOrder3D_201_Runtime` - Tests Orders<2,0,1> for ford
  - `ConsistencyWithNonTrivialOrder_201` - Verifies static_ford and ford consistency
  - `NonTrivialOrder3D_120` - Tests Orders<1,2,0> for static_ford
  - `NonTrivialOrder3D_120_Runtime` - Tests Orders<1,2,0> for ford
  - `NonTrivialOrder4D` - Tests 4D with Orders<3,1,0,2> for static_ford
  - `NonTrivialOrder4D_Runtime` - Tests 4D with Orders<3,1,0,2> for ford
  - `AsymmetricDimensionsWithOrder` - Tests asymmetric dimensions with non-trivial ordering

## Test Result

### Compile Time Comparison: `8b72bc8` (base) → `477e0686` (optimized)

#### Commits in Range (8 commits)

1. `fd4ca17f48` - Optimize sequence_reverse_inclusive_scan and sequence_reverse
2. `7a7e3fdeef` - Optimize sequence_map_inverse
3. `92855c9913` - Optimize ford and static_ford calls to eliminate nested template recursion
4. `88a564032b` - Add unit tests for ford and static_ford
5. `1a0fb22217` - Fix clang-format
6. `8a0d26bddf` - Increase template recursion depth to 1024
7. `dc53bb6e20` - Address copilot feedback and add regression tests
8. `477e06861d` - Increase bracket depth to 1024

#### Build Timing Results

| File | Base (8b72bc8759d9) | HEAD (a0438bd398) | Improvement |
|------|------|------|-------------|
| grouped_conv2d_fwd (f16) -j1 | 313.31s | 272.93s | __12.9% faster__ |
| grouped_conv1d_fwd (bf16) -j1 | 79.33s | 68.61s | __13.5% faster__ |
| grouped_conv1d_bwd_weight (f16) -j1 | 15.77s | 14.31s | __9.2% faster__ |
| device_grouped_conv2d_fwd_instance -j64 | s | s | __% faster__ |

#### Key Optimizations

1. __sequence_reverse_inclusive_scan/sequence_reverse__: O(N) → O(1) template depth
2. __sequence_map_inverse__: O(N) → O(1) template depth
3. __ford/static_ford__: O(N^D) → O(1) template depth using flat iteration with index decomposition
4. __Copilot feedback fixes__: Corrected New2Old mapping for non-trivial orderings

## Submission Checklist

- [ ] Look over the contributing guidelines at https://github.com/ROCm/ROCm/blob/develop/CONTRIBUTING.md#pull-requests.
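The "flat iteration + decomposition" and "pre-computed strides" techniques named in the PR description can be illustrated with a small sketch. The names below (`ford_sketch`, `make_strides`, `decompose`, `flat_ford`) are hypothetical, the sketch assumes C++17 and a row-major iteration order, and it does not model the `Orders<...>` permutation that the real ford and static_ford support.

```cpp
#include <array>
#include <cassert>
#include <cstddef>

// Hypothetical names for illustration only; not CK's ford or index_decomposer.
namespace ford_sketch {

// Row-major strides computed by a plain constexpr loop
// (a reverse exclusive product scan over the lengths).
template <std::size_t D>
constexpr std::array<std::size_t, D> make_strides(const std::array<std::size_t, D>& lengths)
{
    std::array<std::size_t, D> strides{};
    std::size_t running = 1;
    for(std::size_t d = D; d-- > 0;)
    {
        strides[d] = running;
        running *= lengths[d];
    }
    return strides;
}

// Convert a linear index into a multi-index using the pre-computed strides.
template <std::size_t D>
constexpr std::array<std::size_t, D> decompose(std::size_t linear,
                                               const std::array<std::size_t, D>& lengths,
                                               const std::array<std::size_t, D>& strides)
{
    std::array<std::size_t, D> idx{};
    for(std::size_t d = 0; d < D; ++d)
        idx[d] = (linear / strides[d]) % lengths[d];
    return idx;
}

// One flat loop over the whole iteration space instead of D nested or recursive loops.
template <std::size_t... Lengths, typename F>
constexpr void flat_ford(F&& f)
{
    constexpr std::array<std::size_t, sizeof...(Lengths)> lengths{Lengths...};
    constexpr auto strides      = make_strides(lengths);
    constexpr std::size_t total = (Lengths * ... * 1);
    for(std::size_t i = 0; i < total; ++i)
        f(decompose(i, lengths, strides));
}

// Record the visiting order of a 2x3 space, encoding (i, j) as i * 10 + j.
constexpr std::array<std::size_t, 6> visit_order()
{
    std::array<std::size_t, 6> seen{};
    std::size_t n = 0;
    flat_ford<2, 3>([&](const auto& idx) { seen[n++] = idx[0] * 10 + idx[1]; });
    return seen;
}

static_assert(visit_order()[0] == 0);
static_assert(visit_order()[3] == 10);
static_assert(visit_order()[5] == 12);

// Runtime use works the same way.
inline void run_checks() { assert(visit_order()[4] == 11); }

} // namespace ford_sketch
```

Because the strides are computed once, each linear-to-multi-index conversion costs a fixed number of divisions per dimension, and the number of template instantiations no longer depends on the size of the iteration space.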

Composable Kernel

Note

The published documentation is available at Composable Kernel in an organized, easy-to-read format, with search and a table of contents. The documentation source files reside in the `docs` folder of this repository. As with all ROCm projects, the documentation is open source. For more information on contributing to the documentation, see Contribute to ROCm documentation.

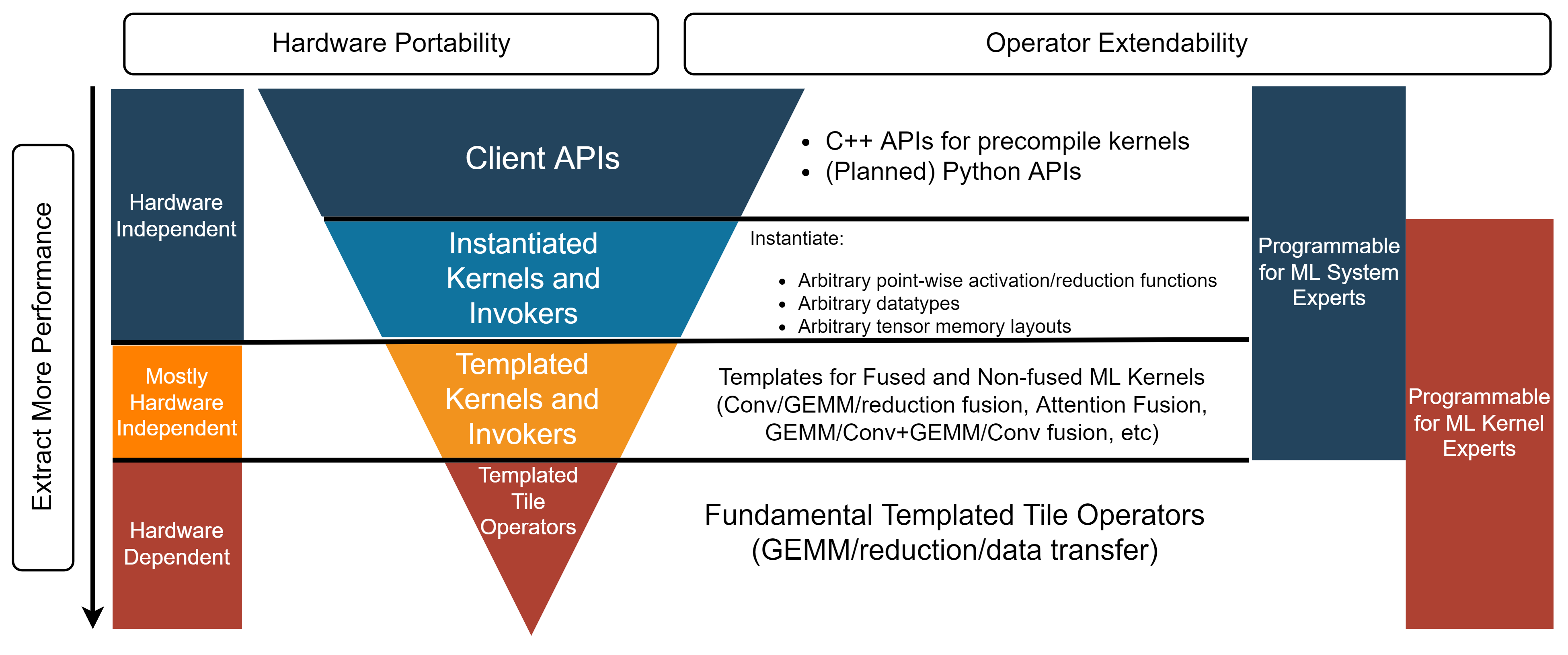

The Composable Kernel (CK) library provides a programming model for writing performance-critical kernels for machine learning workloads across multiple architectures (GPUs, CPUs, etc.). The CK library uses general purpose kernel languages, such as HIP C++.

CK uses two concepts to achieve performance portability and code maintainability:

- A tile-based programming model

- Algorithm complexity reduction for complex machine learning (ML) operators. This uses an innovative technique called Tensor Coordinate Transformation.

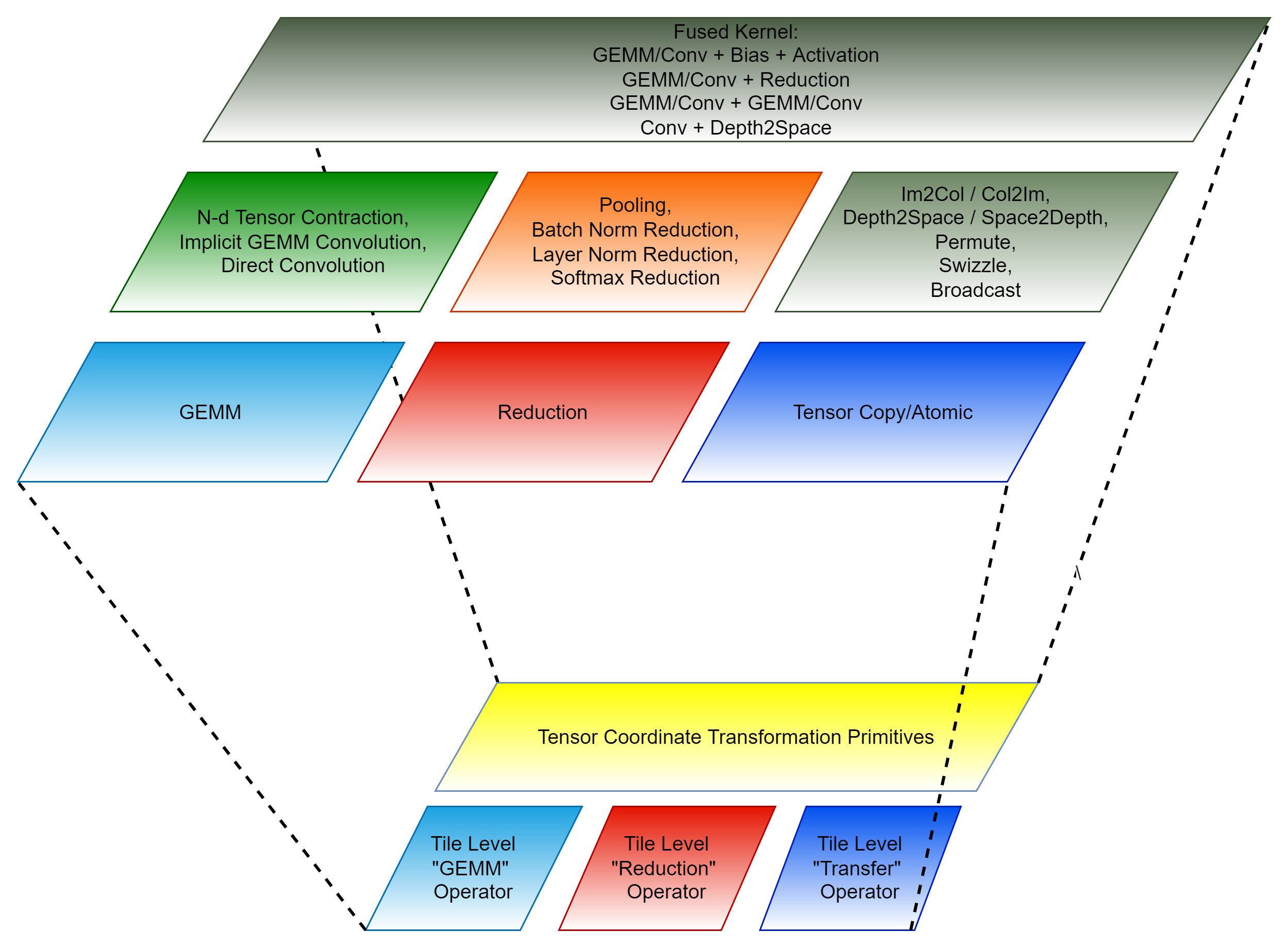

The current CK library is structured into four layers:

- Templated Tile Operators

- Templated Kernel and Invoker

- Instantiated Kernel and Invoker

- Client API

General information

- CK supported operations

- CK Tile supported operations

- CK wrapper

- CK codegen

- CK profiler

- Examples (Custom use of CK supported operations)

- Client examples (Use of CK supported operations with instance factory)

- Terminology

- Contributors

CK is released under the MIT license.

Building CK

We recommend building CK inside Docker containers, which include all necessary packages. Pre-built Docker images are available on DockerHub.

- To build a new Docker image, use the Dockerfile provided with the source code:

      DOCKER_BUILDKIT=1 docker build -t ck:latest -f Dockerfile .

- Launch the Docker container:

      docker run \
          -it \
          --privileged \
          --group-add sudo \
          -w /root/workspace \
          -v ${PATH_TO_LOCAL_WORKSPACE}:/root/workspace \
          ck:latest \
          /bin/bash

- Clone CK source code from the GitHub repository and start the build:

      git clone https://github.com/ROCm/composable_kernel.git && \
      cd composable_kernel && \
      mkdir build && \
      cd build

  You must set the `GPU_TARGETS` macro to specify the GPU target architecture(s) you want to run CK on. You can specify single or multiple architectures. If you specify multiple architectures, use a semicolon between each; for example, `gfx908;gfx90a;gfx942`.

      cmake \
          -D CMAKE_PREFIX_PATH=/opt/rocm \
          -D CMAKE_CXX_COMPILER=/opt/rocm/bin/hipcc \
          -D CMAKE_BUILD_TYPE=Release \
          -D GPU_TARGETS="gfx908;gfx90a" \
          ..

  If you don't set `GPU_TARGETS` on the cmake command line, CK is built for all GPU targets supported by the current compiler (this may take a long time). Tests and examples are only built if `GPU_TARGETS` is set by the user on the cmake command line.

  NOTE: If you try setting `GPU_TARGETS` to a list of architectures, the build will only work if the architectures are similar, e.g., `gfx908;gfx90a`, or `gfx1100;gfx1101;gfx1102`. Otherwise, if you want to build the library for a list of different architectures, you should use the `GPU_ARCHS` build argument, for example `GPU_ARCHS=gfx908;gfx1030;gfx1100;gfx942`.

  Convenience script for development builds:

  Alternatively, you can use the provided convenience script `script/cmake-ck-dev.sh`, which automatically configures CK for development with sensible defaults. In the build directory:

      ../script/cmake-ck-dev.sh

  This script:

  - Cleans CMake cache files before configuring
  - Sets `BUILD_DEV=ON` for development mode
  - Defaults to GPU targets: `gfx908;gfx90a;gfx942`
  - Enables verbose makefile output
  - Sets additional compiler flags for better error messages

  By default, it considers the parent directory to be the project source directory.

  You can specify the source directory as the first argument. You can specify custom GPU targets (semicolon-separated) as the second argument:

      ../script/cmake-ck-dev.sh .. gfx1100

  Or pass additional cmake arguments:

      ../script/cmake-ck-dev.sh .. gfx90a -DCMAKE_BUILD_TYPE=Release

- Build the entire CK library:

      make -j"$(nproc)"

- Install CK:

      make -j install

Building for Windows

Install TheRock and run CMake configure as:

    cmake \
        -D CMAKE_PREFIX_PATH="C:/dist/TheRock" \
        -D CMAKE_CXX_COMPILER="C:/dist/TheRock/bin/hipcc.exe" \
        -D CMAKE_BUILD_TYPE=Release \
        -D GPU_TARGETS="gfx1151" \
        -G Ninja \
        ..

Use Ninja to build either the whole library or individual targets.

Optional post-install steps

- Build examples and tests:

      make -j examples tests

- Build and run all examples and tests:

      make -j check

  You can find instructions for running each individual example in example.

- Build and run smoke/regression examples and tests:

      make -j smoke       # tests and examples that run for < 30 seconds each
      make -j regression  # tests and examples that run for >= 30 seconds each

- Build ckProfiler:

      make -j ckProfiler

  You can find instructions for running ckProfiler in profiler.

- Build our documentation locally:

      cd docs
      pip3 install -r sphinx/requirements.txt
      python3 -m sphinx -T -E -b html -d _build/doctrees -D language=en . _build/html

Notes

The -j option builds with multiple threads in parallel, which speeds up the build significantly. However, -j without a number launches an unlimited number of threads, which can cause the build to run out of memory and crash. On average, you should expect each thread to use ~2 GB of RAM. Depending on the number of CPU cores and the amount of RAM on your system, you may want to limit the number of threads. For example, if you have a 128-core CPU and 128 GB of RAM, it's advisable to use -j32.

Additional cmake flags can be used to significantly speed up the build:

- `DTYPES` (default is not set) can be set to any subset of "fp64;fp32;tf32;fp16;fp8;bf16;int8" to build instances of select data types only. The main default data types are fp32 and fp16; you can safely skip other data types.

- `DISABLE_DL_KERNELS` (default is OFF) must be set to ON in order not to build instances such as `gemm_dl` or `batched_gemm_multi_d_dl`. These instances are useful on architectures like the NAVI2x, as most other platforms have faster instances, such as `xdl` or `wmma`, available.

- `DISABLE_DPP_KERNELS` (default is OFF) must be set to ON in order not to build instances such as `gemm_dpp`. These instances offer slightly better performance for fp16 gemms on NAVI2x, but on other architectures faster alternatives are available.

- `CK_USE_FP8_ON_UNSUPPORTED_ARCH` (default is OFF) must be set to ON in order to build instances such as `gemm_universal`, `gemm_universal_streamk` and `gemm_multiply_multiply` for the fp8 data type on GPU targets that do not have native fp8 support, such as gfx908 or gfx90a. These instances are useful on architectures like the MI100/MI200 for functional support only.

Using sccache for building

The default CK Docker images come with a pre-installed version of sccache, which supports clang being used as the HIP compiler ("-x hip"). Using sccache can help reduce the time to rebuild code from hours to 1-2 minutes. To invoke sccache, you need to run:

sccache --start-server

then add the following flags to the cmake command line:

-DCMAKE_HIP_COMPILER_LAUNCHER=sccache -DCMAKE_CXX_COMPILER_LAUNCHER=sccache -DCMAKE_C_COMPILER_LAUNCHER=sccache

You may need to clean up the build folder and repeat the cmake and make steps in order to take advantage of the sccache during subsequent builds.

Using CK as pre-built kernel library

You can find instructions for using CK as a pre-built kernel library in client_example.

Contributing to CK

When you contribute to CK, make sure you run clang-format on all changed files. We highly recommend using git hooks that are managed by the pre-commit framework. To install hooks, run:

sudo script/install_precommit.sh

With this approach, pre-commit adds the appropriate hooks to your local repository and automatically runs clang-format (and possibly additional checks) before any commit is created.

If you need to uninstall hooks from the repository, you can do so by running the following command:

script/uninstall_precommit.sh

If you need to temporarily disable pre-commit hooks, you can add the --no-verify option to the git commit command.