mirror of

https://github.com/lllyasviel/stable-diffusion-webui-forge.git

synced 2026-02-26 01:33:56 +00:00

remove cn first

185

extensions-builtin/sd_forge_controlnet/.gitignore

vendored

@@ -1,185 +0,0 @@
# Byte-compiled / optimized / DLL files
__pycache__/
*.py[cod]
*$py.class

# C extensions
*.so

# Distribution / packaging
.Python
build/
develop-eggs/
dist/
downloads/
eggs/
.eggs/
lib/
lib64/
parts/
sdist/
var/
wheels/
share/python-wheels/
*.egg-info/
.installed.cfg
*.egg
MANIFEST

# PyInstaller
# Usually these files are written by a python script from a template
# before PyInstaller builds the exe, so as to inject date/other infos into it.
*.manifest
*.spec

# Installer logs
pip-log.txt
pip-delete-this-directory.txt

# Unit test / coverage reports
htmlcov/
.tox/
.nox/
.coverage
.coverage.*
.cache
nosetests.xml
coverage.xml
*.cover
*.py,cover
.hypothesis/
.pytest_cache/
cover/

# Translations
*.mo
*.pot

# Django stuff:
*.log
local_settings.py
db.sqlite3
db.sqlite3-journal

# Flask stuff:
instance/
.webassets-cache

# Scrapy stuff:
.scrapy

# Sphinx documentation
docs/_build/

# PyBuilder
.pybuilder/
target/

# Jupyter Notebook
.ipynb_checkpoints

# IPython
profile_default/
ipython_config.py

# pyenv
# For a library or package, you might want to ignore these files since the code is
# intended to run in multiple environments; otherwise, check them in:
# .python-version

# pipenv
# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.
# However, in case of collaboration, if having platform-specific dependencies or dependencies
# having no cross-platform support, pipenv may install dependencies that don't work, or not
# install all needed dependencies.
#Pipfile.lock

# poetry
# Similar to Pipfile.lock, it is generally recommended to include poetry.lock in version control.
# This is especially recommended for binary packages to ensure reproducibility, and is more
# commonly ignored for libraries.
# https://python-poetry.org/docs/basic-usage/#commit-your-poetrylock-file-to-version-control
#poetry.lock

# pdm
# Similar to Pipfile.lock, it is generally recommended to include pdm.lock in version control.
#pdm.lock
# pdm stores project-wide configurations in .pdm.toml, but it is recommended to not include it
# in version control.
# https://pdm.fming.dev/#use-with-ide
.pdm.toml

# PEP 582; used by e.g. github.com/David-OConnor/pyflow and github.com/pdm-project/pdm
__pypackages__/

# Celery stuff
celerybeat-schedule
celerybeat.pid

# SageMath parsed files
*.sage.py

# Environments
.env
.venv
env/
venv/
ENV/
env.bak/
venv.bak/

# Spyder project settings
.spyderproject
.spyproject

# Rope project settings
.ropeproject

# mkdocs documentation
/site

# mypy
.mypy_cache/
.dmypy.json
dmypy.json

# Pyre type checker
.pyre/

# pytype static type analyzer
.pytype/

# Cython debug symbols
cython_debug/

# PyCharm
# JetBrains specific template is maintained in a separate JetBrains.gitignore that can
# be found at https://github.com/github/gitignore/blob/main/Global/JetBrains.gitignore
# and can be added to the global gitignore or merged into this file. For a more nuclear
# option (not recommended) you can uncomment the following to ignore the entire idea folder.
#.idea
*.pt
*.pth
*.ckpt
*.bin
*.safetensors

# Editor setting metadata
.idea/
.vscode/
detected_maps/
annotator/downloads/

# test results and expectations
web_tests/results/
web_tests/expectations/
tests/web_api/full_coverage/results/
tests/web_api/full_coverage/expectations/

*_diff.png

# Presets
presets/

# Ignore existing dir of hand refiner if exists.
annotator/hand_refiner_portable

@@ -1,243 +0,0 @@

# ControlNet for Stable Diffusion WebUI

The WebUI extension for ControlNet and other injection-based SD controls.

This extension for AUTOMATIC1111's [Stable Diffusion web UI](https://github.com/AUTOMATIC1111/stable-diffusion-webui) allows the Web UI to add [ControlNet](https://github.com/lllyasviel/ControlNet) to the original Stable Diffusion model when generating images. The addition happens on the fly; no model merging is required.

# Installation

1. Open the "Extensions" tab.
2. Open the "Install from URL" tab inside it.
3. Enter `https://github.com/Mikubill/sd-webui-controlnet.git` in "URL for extension's git repository".
4. Press the "Install" button.
5. Wait about 5 seconds until you see the message "Installed into stable-diffusion-webui\extensions\sd-webui-controlnet. Use Installed tab to restart".
6. Go to the "Installed" tab, click "Check for updates", and then click "Apply and restart UI". (You can use the same buttons to update ControlNet later.)
7. Completely restart the A1111 webui, including your terminal. (If you do not know what a "terminal" is, rebooting your computer achieves the same effect.)
8. Download models (see below).
9. After you put the models in the correct folder, you may need to refresh to see them. The refresh button is to the right of the "Model" dropdown.

# Download Models

All 14 models of ControlNet 1.1 are currently in beta testing.

Download the models from ControlNet 1.1: https://huggingface.co/lllyasviel/ControlNet-v1-1/tree/main

You only need to download the model files ending with ".pth".

Put the models in "stable-diffusion-webui\extensions\sd-webui-controlnet\models".

Do not right-click the filenames on the HuggingFace website to download. Some users did this and saved HuggingFace HTML pages as PTH/YAML files, which are not the correct files. Instead, click the small download arrow “↓” icon on HuggingFace to download.

# Download Models for SDXL

See instructions [here](https://github.com/Mikubill/sd-webui-controlnet/discussions/2039).

|

||||

|

||||

# Features in ControlNet 1.1

|

||||

|

||||

### Perfect Support for All ControlNet 1.0/1.1 and T2I Adapter Models.

|

||||

|

||||

Now we have perfect support all available models and preprocessors, including perfect support for T2I style adapter and ControlNet 1.1 Shuffle. (Make sure that your YAML file names and model file names are same, see also YAML files in "stable-diffusion-webui\extensions\sd-webui-controlnet\models".)

|

||||

|

||||

### Perfect Support for A1111 High-Res. Fix

|

||||

|

||||

Now if you turn on High-Res Fix in A1111, each controlnet will output two different control images: a small one and a large one. The small one is for your basic generating, and the big one is for your High-Res Fix generating. The two control images are computed by a smart algorithm called "super high-quality control image resampling". This is turned on by default, and you do not need to change any setting.

|

||||

|

||||

### Perfect Support for All A1111 Img2Img or Inpaint Settings and All Mask Types

|

||||

|

||||

Now ControlNet is extensively tested with A1111's different types of masks, including "Inpaint masked"/"Inpaint not masked", and "Whole picture"/"Only masked", and "Only masked padding"&"Mask blur". The resizing perfectly matches A1111's "Just resize"/"Crop and resize"/"Resize and fill". This means you can use ControlNet in nearly everywhere in your A1111 UI without difficulty!

|

||||

|

||||

### The New "Pixel-Perfect" Mode

|

||||

|

||||

Now if you turn on pixel-perfect mode, you do not need to set preprocessor (annotator) resolutions manually. The ControlNet will automatically compute the best annotator resolution for you so that each pixel perfectly matches Stable Diffusion.

|

||||

|

||||

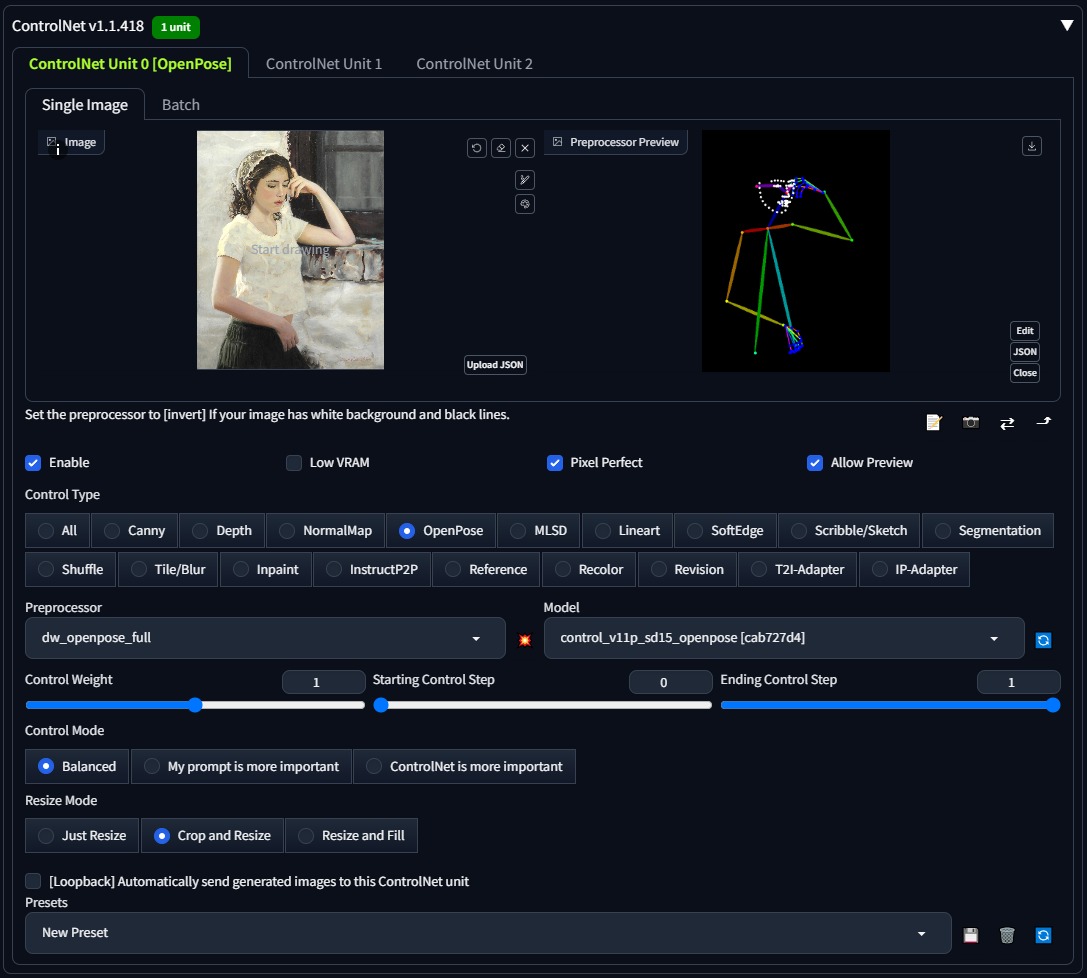

### User-Friendly GUI and Preprocessor Preview

We reorganized some previously confusing UI, such as "canvas width/height for new canvas", which now lives behind the 📝 button. The preview GUI is now controlled by the "allow preview" option and the trigger button 💥. The preview image size is better than before, and you no longer need to scroll up and down, so your A1111 GUI will not be messed up anymore!

### Support for Almost All Upscaling Scripts

ControlNet 1.1 supports almost all upscaling/tile methods, including the script "Ultimate SD upscale" and almost all other tile-based extensions. Please do not confuse ["Ultimate SD upscale"](https://github.com/Coyote-A/ultimate-upscale-for-automatic1111) with "SD upscale"; they are different scripts. The most recommended upscaling method is ["Tiled VAE/Diffusion"](https://github.com/pkuliyi2015/multidiffusion-upscaler-for-automatic1111), but we test as many methods/extensions as possible. "SD upscale" is supported since 1.1.117; if you use it, you need to leave all ControlNet images blank. (We do not recommend "SD upscale" since it is somewhat buggy and cannot be maintained; use "Ultimate SD upscale" instead.)

### More Control Modes (previously called Guess Mode)

We have fixed many bugs in the previous 1.0 Guess Mode, and it is now called Control Mode.

Now you can control which aspect is more important (your prompt or your ControlNet):

* "Balanced": ControlNet on both sides of CFG scale, same as turning off "Guess Mode" in ControlNet 1.0

* "My prompt is more important": ControlNet on both sides of CFG scale, with progressively reduced SD U-Net injections (layer_weight*=0.825**I, where 0<=I<13, and the 13 means ControlNet injects into SD 13 times). This helps your prompt come through clearly in the generated images.

* "ControlNet is more important": ControlNet only on the conditional side of CFG scale (the cond in A1111's batch-cond-uncond). This means the ControlNet will be X times stronger if your cfg-scale is X. For example, if your cfg-scale is 7, then ControlNet is 7 times stronger. Note that this "X times stronger" is different from "Control Weights", since your weights are not modified. This "stronger" effect usually has fewer artifacts and gives ControlNet more room to guess what is missing from your prompts (in the previous 1.0, this was called "Guess Mode").
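
The "My prompt is more important" schedule described above can be sketched in a few lines of Python. This is only an illustration of the formula `layer_weight*=0.825**I`; the function name `soft_injection_weights` and its parameters are hypothetical, and which end of the U-Net corresponds to `I = 0` is not stated in the text, so the ordering here is an assumption.

```python
def soft_injection_weights(base_weight=1.0, decay=0.825, num_injections=13):
    """Return one weight per injection point: base_weight * decay**I for I in 0..12.

    Hypothetical helper for illustration; not the extension's own code.
    """
    return [base_weight * decay ** i for i in range(num_injections)]


weights = soft_injection_weights()
print(len(weights))          # one weight per injection point
print(round(weights[0], 4))  # strongest injection (I = 0)
print(round(weights[-1], 4)) # weakest injection (I = 12)
```

With the stated decay, the weakest injection ends up at roughly a tenth of the strongest one, which is why the prompt gains influence in this mode.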

<table width="100%">
<tr>
<td width="25%" style="text-align: center">Input (depth+canny+hed)</td>
<td width="25%" style="text-align: center">"Balanced"</td>
<td width="25%" style="text-align: center">"My prompt is more important"</td>
<td width="25%" style="text-align: center">"ControlNet is more important"</td>
</tr>
<tr>
<td width="25%" style="text-align: center"><img src="samples/cm1.png"></td>
<td width="25%" style="text-align: center"><img src="samples/cm2.png"></td>
<td width="25%" style="text-align: center"><img src="samples/cm3.png"></td>
<td width="25%" style="text-align: center"><img src="samples/cm4.png"></td>
</tr>
</table>

### Reference-Only Control

Now we have a `reference-only` preprocessor that does not require any control models. It can guide the diffusion directly using images as references.

(Prompt "a dog running on grassland, best quality, ...")

This method is similar to inpaint-based reference but it does not make your image disordered.

Many professional A1111 users know a trick to diffuse an image with references via inpainting. For example, if you have a 512x512 image of a dog and want to generate another 512x512 image with the same dog, some users will join the 512x512 dog image and a 512x512 blank image into a 1024x512 image, send it to inpaint, and mask out the blank 512x512 part to diffuse a dog with a similar appearance. However, that method is usually not very satisfying, since the images are joined and many distortions appear.

This `reference-only` ControlNet can directly link the attention layers of your SD to any independent images, so that your SD will read arbitrary images for reference. You need at least ControlNet 1.1.153 to use it.

To use it, just select `reference-only` as the preprocessor and provide an image. Your SD will use the image as a reference.

*Note that this method is as "non-opinionated" as possible. It contains only very basic connection code, without any personal preferences, to connect the attention layers with your reference images. However, even though we tried our best not to include any opinionated code, we still needed to write some subjective implementations to deal with weighting, cfg-scale, etc. A tech report is on the way.*

More examples [here](https://github.com/Mikubill/sd-webui-controlnet/discussions/1236).

# Technical Documents

See also the documents of ControlNet 1.1:

https://github.com/lllyasviel/ControlNet-v1-1-nightly#model-specification

# Default Setting

This is my setting. If you run into any problem, you can use this setting as a sanity check.

# Use Previous Models

### Use ControlNet 1.0 Models

https://huggingface.co/lllyasviel/ControlNet/tree/main/models

You can still use all models from ControlNet 1.0. The previous "depth" is now called "depth_midas", the previous "normal" is called "normal_midas", and the previous "hed" is called "softedge_hed". Starting from 1.1, all line maps, edge maps, lineart maps, and boundary maps have a black background and white lines.

### Use T2I-Adapter Models

(From TencentARC/T2I-Adapter)

To use T2I-Adapter models:

1. Download files from https://huggingface.co/TencentARC/T2I-Adapter/tree/main/models
2. Put them in "stable-diffusion-webui\extensions\sd-webui-controlnet\models".
3. Make sure that the file names of the pth files and yaml files are consistent.

*Note that "CoAdapter" is not implemented yet.*
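
The file-name consistency check from step 3 can be done by hand, but a short script makes mismatches easy to spot. The sketch below is only an illustration: `find_unpaired_models` is a hypothetical helper, and it assumes every `.pth` file in the folder is expected to have a `.yaml` file with the same stem, which may not hold for every model you keep there.

```python
import tempfile
from pathlib import Path


def find_unpaired_models(models_dir):
    """Return (pth_without_yaml, yaml_without_pth) as sorted lists of file stems."""
    folder = Path(models_dir)
    pth_stems = {p.stem for p in folder.glob("*.pth")}
    yaml_stems = {p.stem for p in folder.glob("*.yaml")}
    return sorted(pth_stems - yaml_stems), sorted(yaml_stems - pth_stems)


# Demo on a throwaway folder with one unpaired file of each kind.
with tempfile.TemporaryDirectory() as tmp:
    for name in ("a.pth", "a.yaml", "b.pth", "c.yaml"):
        (Path(tmp) / name).touch()
    missing_yaml, missing_pth = find_unpaired_models(tmp)

print("pth files without a yaml:", missing_yaml)
print("yaml files without a pth:", missing_pth)
```

To check a real installation, pass the path of your own models folder instead of the temporary directory.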

# Gallery

The below results are from ControlNet 1.0.

| Source | Input | Output |
|:-------------------------:|:-------------------------:|:-------------------------:|
| (no preprocessor) | <img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/bal-source.png?raw=true"> | <img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/bal-gen.png?raw=true"> |
| (no preprocessor) | <img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/dog_rel.jpg?raw=true"> | <img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/dog_rel.png?raw=true"> |
|<img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/mahiro_input.png?raw=true"> | <img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/mahiro_canny.png?raw=true"> | <img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/mahiro-out.png?raw=true"> |
|<img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/evt_source.jpg?raw=true"> | <img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/evt_hed.png?raw=true"> | <img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/evt_gen.png?raw=true"> |
|<img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/an-source.jpg?raw=true"> | <img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/an-pose.png?raw=true"> | <img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/an-gen.png?raw=true"> |
|<img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/sk-b-src.png?raw=true"> | <img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/sk-b-dep.png?raw=true"> | <img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/sk-b-out.png?raw=true"> |

The below examples are from T2I-Adapter.

From `t2iadapter_color_sd14v1.pth` :

| Source | Input | Output |
|:-------------------------:|:-------------------------:|:-------------------------:|
| <img width="256" alt="" src="https://user-images.githubusercontent.com/31246794/222947416-ec9e52a4-a1d0-48d8-bb81-736bf636145e.jpeg"> | <img width="256" alt="" src="https://user-images.githubusercontent.com/31246794/222947435-1164e7d8-d857-42f9-ab10-2d4a4b25f33a.png"> | <img width="256" alt="" src="https://user-images.githubusercontent.com/31246794/222947557-5520d5f8-88b4-474d-a576-5c9cd3acac3a.png"> |

From `t2iadapter_style_sd14v1.pth` :

| Source | Input | Output |
|:-------------------------:|:-------------------------:|:-------------------------:|
| <img width="256" alt="" src="https://user-images.githubusercontent.com/31246794/222947416-ec9e52a4-a1d0-48d8-bb81-736bf636145e.jpeg"> | (clip, non-image) | <img width="256" alt="" src="https://user-images.githubusercontent.com/31246794/222965711-7b884c9e-7095-45cb-a91c-e50d296ba3a2.png"> |

|

||||

|

||||

# Minimum Requirements

|

||||

|

||||

* (Windows) (NVIDIA: Ampere) 4gb - with `--xformers` enabled, and `Low VRAM` mode ticked in the UI, goes up to 768x832

|

||||

|

||||

# Multi-ControlNet

|

||||

|

||||

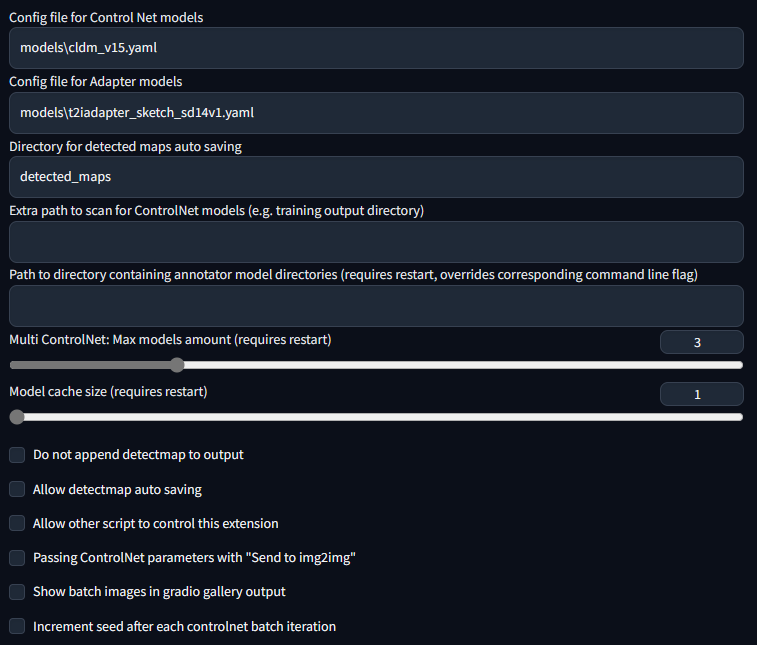

This option allows multiple ControlNet inputs for a single generation. To enable this option, change `Multi ControlNet: Max models amount (requires restart)` in the settings. Note that you will need to restart the WebUI for changes to take effect.

<table width="100%">
<tr>
<td width="25%" style="text-align: center">Source A</td>
<td width="25%" style="text-align: center">Source B</td>
<td width="25%" style="text-align: center">Output</td>
</tr>
<tr>
<td width="25%" style="text-align: center"><img src="https://user-images.githubusercontent.com/31246794/220448620-cd3ede92-8d3f-43d5-b771-32dd8417618f.png"></td>
<td width="25%" style="text-align: center"><img src="https://user-images.githubusercontent.com/31246794/220448619-beed9bdb-f6bb-41c2-a7df-aa3ef1f653c5.png"></td>
<td width="25%" style="text-align: center"><img src="https://user-images.githubusercontent.com/31246794/220448613-c99a9e04-0450-40fd-bc73-a9122cefaa2c.png"></td>
</tr>
</table>

# Control Weight/Start/End

Weight is the weight of the ControlNet "influence". It's analogous to prompt attention/emphasis, e.g. (myprompt: 1.2). Technically, it's the factor by which the ControlNet outputs are multiplied before they are merged with the original SD U-Net.

Guidance Start/End is the percentage of total steps over which the ControlNet applies (guidance strength = guidance end). It's analogous to prompt editing/shifting, e.g. \[myprompt::0.8\] (it applies from the beginning until 80% of total steps).
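
How weight and guidance start/end interact can be sketched as a per-step multiplier. This is a simplified illustration, not the extension's code: `controlnet_scale` is a hypothetical helper, and both the progress definition (`step / total_steps`) and the inclusive boundaries are assumptions.

```python
def controlnet_scale(step, total_steps, weight=1.0, guidance_start=0.0, guidance_end=1.0):
    """Return the multiplier applied to ControlNet outputs at a given sampling step.

    Hypothetical helper: `step` counts from 0 and progress is step / total_steps.
    """
    progress = step / total_steps
    if guidance_start <= progress <= guidance_end:
        return weight
    return 0.0


# Weight 1.2, active for roughly the first 80% of a 20-step run.
schedule = [controlnet_scale(s, 20, weight=1.2, guidance_end=0.8) for s in range(20)]
print(schedule)
```

Early steps get the full weight and late steps get zero, which mirrors the `[myprompt::0.8]` analogy in the text.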

# Batch Mode

Put any unit into batch mode to activate batch mode for all units. Specify a batch directory for each unit, or use the new textbox in the img2img batch tab as a fallback. Although the textbox is located in the img2img batch tab, you can use it to generate images in the txt2img tab as well.

Note that this feature is only available in the gradio user interface. Call the APIs as many times as you want for custom batch scheduling.

# API and Script Access

This extension can accept txt2img or img2img tasks via the API or an external extension call. Note that you may need to enable `Allow other scripts to control this extension` in settings for external calls.

To use the API: start WebUI with the argument `--api` and go to `http://webui-address/docs` for documentation, or check out the [examples](https://github.com/Mikubill/sd-webui-controlnet/blob/main/example/txt2img_example/api_txt2img.py).

To use external calls: check out the [Wiki](https://github.com/Mikubill/sd-webui-controlnet/wiki/API).
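
As a rough sketch of what an API request body might look like, the snippet below builds a txt2img payload with one ControlNet unit and serializes it to JSON. It makes no network call. The endpoint path `/sdapi/v1/txt2img`, the `alwayson_scripts` layout, and every field name inside the unit are assumptions to verify against `http://webui-address/docs` and the linked example; the model name is a placeholder.

```python
import base64
import json


def build_txt2img_payload(prompt, image_bytes, module="canny", model="control_v11p_sd15_canny"):
    """Build a txt2img request body with one ControlNet unit.

    Hypothetical helper: the field names are assumptions, not a confirmed schema.
    """
    encoded = base64.b64encode(image_bytes).decode("utf-8")
    unit = {
        "enabled": True,
        "image": encoded,        # control image, base64-encoded
        "module": module,        # preprocessor name
        "model": model,          # placeholder model name
        "weight": 1.0,
        "guidance_start": 0.0,
        "guidance_end": 1.0,
    }
    return {
        "prompt": prompt,
        "steps": 20,
        "alwayson_scripts": {"controlnet": {"args": [unit]}},
    }


payload = build_txt2img_payload("a dog running on grassland", b"placeholder image bytes")
body = json.dumps(payload)  # would be POSTed to http://webui-address/sdapi/v1/txt2img
```

Keeping payload construction separate from the HTTP call makes it easy to inspect or log the request before sending it with the client of your choice.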

# Command Line Arguments

This extension adds these command line arguments to the webui:

```
    --controlnet-dir <path to directory with controlnet models>    ADD a controlnet models directory
    --controlnet-annotator-models-path <path to directory with annotator model directories>    SET the directory for annotator models
    --no-half-controlnet    load controlnet models in full precision
    --controlnet-preprocessor-cache-size    Cache size for controlnet preprocessor results
    --controlnet-loglevel    Log level for the controlnet extension
    --controlnet-tracemalloc    Enable malloc memory tracing
```

# MacOS Support

Tested with pytorch nightly: https://github.com/Mikubill/sd-webui-controlnet/pull/143#issuecomment-1435058285

To use this extension with mps and normal pytorch, currently you may need to start WebUI with `--no-half`.

# Archive of Deprecated Versions

The previous version (sd-webui-controlnet 1.0) is archived in

https://github.com/lllyasviel/webui-controlnet-v1-archived

Using this version is not a temporary pause in updates: you will stop receiving all updates forever.

Please consider this version if you work with professional studios that require 100% pixel-by-pixel reproduction of all previous results.

# Thanks

This implementation is inspired by kohya-ss/sd-webui-additional-networks

@@ -1,21 +0,0 @@

MIT License

Copyright (c) 2021 Miaomiao Li

Permission is hereby granted, free of charge, to any person obtaining a copy
of this software and associated documentation files (the "Software"), to deal
in the Software without restriction, including without limitation the rights
to use, copy, modify, merge, publish, distribute, sublicense, and/or sell
copies of the Software, and to permit persons to whom the Software is
furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all
copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR
IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,
FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE
AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER
LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,
OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE
SOFTWARE.

@@ -1,172 +0,0 @@

import os
import torch
import torch.nn as nn
import torch.nn.functional as F
from PIL import Image
import fnmatch
import cv2

import sys

import numpy as np
from modules import devices
from einops import rearrange
from annotator.annotator_path import models_path

import torchvision
from torchvision.models import MobileNet_V2_Weights
from torchvision import transforms

COLOR_BACKGROUND = (255,255,0)
COLOR_HAIR = (0,0,255)
COLOR_EYE = (255,0,0)
COLOR_MOUTH = (255,255,255)
COLOR_FACE = (0,255,0)
COLOR_SKIN = (0,255,255)
COLOR_CLOTHES = (255,0,255)
PALETTE = [COLOR_BACKGROUND,COLOR_HAIR,COLOR_EYE,COLOR_MOUTH,COLOR_FACE,COLOR_SKIN,COLOR_CLOTHES]

class UNet(nn.Module):
    def __init__(self):
        super(UNet, self).__init__()
        self.NUM_SEG_CLASSES = 7 # Background, hair, face, eye, mouth, skin, clothes

        mobilenet_v2 = torchvision.models.mobilenet_v2(weights=MobileNet_V2_Weights.IMAGENET1K_V1)
        mob_blocks = mobilenet_v2.features

        # Encoder
        self.en_block0 = nn.Sequential( # in_ch=3 out_ch=16
            mob_blocks[0],
            mob_blocks[1]
        )
        self.en_block1 = nn.Sequential( # in_ch=16 out_ch=24
            mob_blocks[2],
            mob_blocks[3],
        )
        self.en_block2 = nn.Sequential( # in_ch=24 out_ch=32
            mob_blocks[4],
            mob_blocks[5],
            mob_blocks[6],
        )
        self.en_block3 = nn.Sequential( # in_ch=32 out_ch=96
            mob_blocks[7],
            mob_blocks[8],
            mob_blocks[9],
            mob_blocks[10],
            mob_blocks[11],
            mob_blocks[12],
            mob_blocks[13],
        )
        self.en_block4 = nn.Sequential( # in_ch=96 out_ch=160
            mob_blocks[14],
            mob_blocks[15],
            mob_blocks[16],
        )

        # Decoder
        self.de_block4 = nn.Sequential( # in_ch=160 out_ch=96
            nn.UpsamplingNearest2d(scale_factor=2),
            nn.Conv2d(160, 96, kernel_size=3, padding=1),
            nn.InstanceNorm2d(96),
            nn.LeakyReLU(0.1),
            nn.Dropout(p=0.2)
        )
        self.de_block3 = nn.Sequential( # in_ch=96x2 out_ch=32
            nn.UpsamplingNearest2d(scale_factor=2),
            nn.Conv2d(96*2, 32, kernel_size=3, padding=1),
            nn.InstanceNorm2d(32),
            nn.LeakyReLU(0.1),
            nn.Dropout(p=0.2)
        )
        self.de_block2 = nn.Sequential( # in_ch=32x2 out_ch=24
            nn.UpsamplingNearest2d(scale_factor=2),
            nn.Conv2d(32*2, 24, kernel_size=3, padding=1),
            nn.InstanceNorm2d(24),
            nn.LeakyReLU(0.1),
            nn.Dropout(p=0.2)
        )
        self.de_block1 = nn.Sequential( # in_ch=24x2 out_ch=16
            nn.UpsamplingNearest2d(scale_factor=2),
            nn.Conv2d(24*2, 16, kernel_size=3, padding=1),
            nn.InstanceNorm2d(16),
            nn.LeakyReLU(0.1),
            nn.Dropout(p=0.2)
        )

        self.de_block0 = nn.Sequential( # in_ch=16x2 out_ch=7
            nn.UpsamplingNearest2d(scale_factor=2),
            nn.Conv2d(16*2, self.NUM_SEG_CLASSES, kernel_size=3, padding=1),
            nn.Softmax2d()
        )

    def forward(self, x):
        e0 = self.en_block0(x)
        e1 = self.en_block1(e0)
        e2 = self.en_block2(e1)
        e3 = self.en_block3(e2)
        e4 = self.en_block4(e3)

        d4 = self.de_block4(e4)
        d4 = F.interpolate(d4, size=e3.size()[2:], mode='bilinear', align_corners=True)
        c4 = torch.cat((d4,e3),1)

        d3 = self.de_block3(c4)
        d3 = F.interpolate(d3, size=e2.size()[2:], mode='bilinear', align_corners=True)
        c3 = torch.cat((d3,e2),1)

        d2 = self.de_block2(c3)
        d2 = F.interpolate(d2, size=e1.size()[2:], mode='bilinear', align_corners=True)
        c2 = torch.cat((d2,e1),1)

        d1 = self.de_block1(c2)
        d1 = F.interpolate(d1, size=e0.size()[2:], mode='bilinear', align_corners=True)
        c1 = torch.cat((d1,e0),1)
        y = self.de_block0(c1)

        return y

|

||||

|

||||

|

||||

class AnimeFaceSegment:

|

||||

|

||||

model_dir = os.path.join(models_path, "anime_face_segment")

|

||||

|

||||

def __init__(self):

|

||||

self.model = None

|

||||

self.device = devices.get_device_for("controlnet")

|

||||

|

||||

def load_model(self):

|

||||

remote_model_path = "https://huggingface.co/bdsqlsz/qinglong_controlnet-lllite/resolve/main/Annotators/UNet.pth"

|

||||

modelpath = os.path.join(self.model_dir, "UNet.pth")

|

||||

if not os.path.exists(modelpath):

|

||||

from basicsr.utils.download_util import load_file_from_url

|

||||

load_file_from_url(remote_model_path, model_dir=self.model_dir)

|

||||

net = UNet()

|

||||

ckpt = torch.load(modelpath, map_location=self.device)

|

||||

for key in list(ckpt.keys()):

|

||||

if 'module.' in key:

|

||||

ckpt[key.replace('module.', '')] = ckpt[key]

|

||||

del ckpt[key]

|

||||

net.load_state_dict(ckpt)

|

||||

net.eval()

|

||||

self.model = net.to(self.device)

|

||||

|

||||

def unload_model(self):

|

||||

if self.model is not None:

|

||||

self.model.cpu()

|

||||

|

||||

def __call__(self, input_image):

|

||||

|

||||

if self.model is None:

|

||||

self.load_model()

|

||||

self.model.to(self.device)

|

||||

transform = transforms.Compose([

|

||||

transforms.Resize(512,interpolation=transforms.InterpolationMode.BICUBIC),

|

||||

transforms.ToTensor(),])

|

||||

img = Image.fromarray(input_image)

|

||||

with torch.no_grad():

|

||||

img = transform(img).unsqueeze(dim=0).to(self.device)

|

||||

seg = self.model(img).squeeze(dim=0)

|

||||

seg = seg.cpu().detach().numpy()

|

||||

img = rearrange(seg,'h w c -> w c h')

|

||||

img = [[PALETTE[np.argmax(val)] for val in buf]for buf in img]

|

||||

return np.array(img).astype(np.uint8)

|

||||
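Note on the key renaming in `load_model` above: checkpoints saved from a model wrapped in `torch.nn.DataParallel` carry a `module.` prefix on every key, which is presumably why the loader rewrites them. The following is a minimal pure-Python sketch of that step, not the removed code itself. `strip_module_prefix` is a hypothetical helper name, and unlike the original `key.replace('module.', '')` it only removes a leading prefix.

```python
def strip_module_prefix(state_dict, prefix="module."):
    """Return a new dict whose keys have a leading `prefix` removed."""
    cleaned = {}
    for key, value in state_dict.items():
        # Assumption: only a leading prefix should go. The removed loader used
        # key.replace('module.', ''), which rewrites every occurrence instead.
        new_key = key[len(prefix):] if key.startswith(prefix) else key
        cleaned[new_key] = value
    return cleaned


# Plain integers stand in for tensors in this illustration.
ckpt = {"module.conv1.weight": 1, "module.conv1.bias": 2, "fc.weight": 3}
cleaned = strip_module_prefix(ckpt)
print(sorted(cleaned))
```

Building a new dict avoids mutating the checkpoint while iterating over it, which the original works around with `list(ckpt.keys())`.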

@@ -1,22 +0,0 @@

|

||||

import os

|

||||

from modules import shared

|

||||

|

||||

models_path = shared.opts.data.get('control_net_modules_path', None)

|

||||

if not models_path:

|

||||

models_path = getattr(shared.cmd_opts, 'controlnet_annotator_models_path', None)

|

||||

if not models_path:

|

||||

models_path = os.path.join(os.path.dirname(os.path.abspath(__file__)), 'downloads')

|

||||

|

||||

if not os.path.isabs(models_path):

|

||||

models_path = os.path.join(shared.data_path, models_path)

|

||||

|

||||

clip_vision_path = os.path.join(os.path.dirname(os.path.abspath(__file__)), 'clip_vision')

|

||||

# clip vision is always inside the ControlNet folder "extensions\sd-webui-controlnet",
# so any problem can be solved by removing ControlNet and reinstalling it

|

||||

|

||||

models_path = os.path.realpath(models_path)

|

||||

os.makedirs(models_path, exist_ok=True)

|

||||

print(f'ControlNet preprocessor location: {models_path}')

|

||||

# Make sure that the default location is inside the ControlNet folder "extensions\sd-webui-controlnet",
# so that any problem can be solved by removing ControlNet and reinstalling it,
# as long as users do not change the configs on their own (otherwise they will know what is wrong)

|

||||
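Note on the path lookup in the removed `annotator_path` module: it tries the UI option, then the command-line option, then a `downloads` folder next to the module, and resolves relative results against the data directory. The sketch below restates that fallback chain as a pure function under stated assumptions. `resolve_models_path` and its parameters are hypothetical names, standing in for `shared.opts`, `shared.cmd_opts` and `shared.data_path`.

```python
import os


def resolve_models_path(opt_value, cmd_value, module_dir, data_path):
    """Pick the first non-empty candidate, then make it absolute."""
    # Fallback order mirrors the removed module: UI option, CLI option, default.
    path = opt_value or cmd_value or os.path.join(module_dir, "downloads")
    if not os.path.isabs(path):
        # Relative paths are interpreted against the web UI data directory.
        path = os.path.join(data_path, path)
    return os.path.realpath(path)


default_path = resolve_models_path(None, None, "/ext/annotator", "/data")
relative_path = resolve_models_path("models/cn", "/other", "/ext/annotator", "/data")
print(default_path, relative_path)
```

Keeping the lookup free of module-level side effects (no `os.makedirs`, no `print`) makes it easier to test. The removed module performs those steps at import time.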

@@ -1,14 +0,0 @@

import cv2


def apply_binary(img, bin_threshold):
    img_gray = cv2.cvtColor(img, cv2.COLOR_RGB2GRAY)

    if bin_threshold == 0 or bin_threshold == 255:
        # Otsu's threshold
        otsu_threshold, img_bin = cv2.threshold(img_gray, 0, 255, cv2.THRESH_BINARY_INV + cv2.THRESH_OTSU)
        print("Otsu threshold:", otsu_threshold)
    else:
        _, img_bin = cv2.threshold(img_gray, bin_threshold, 255, cv2.THRESH_BINARY_INV)

    return cv2.cvtColor(img_bin, cv2.COLOR_GRAY2RGB)

|

||||
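Note on `apply_binary` above: a threshold of 0 or 255 selects Otsu's method, and any other value is used as a fixed threshold. In both cases the output is inverted, so bright pixels become black. The sketch below illustrates that logic on a flat list of 8-bit values without OpenCV. `otsu_threshold` and `binarize_inv` are hypothetical helpers, and the Otsu search here is a textbook between-class-variance scan that is not guaranteed to match `cv2.threshold` tie-breaking exactly.

```python
def otsu_threshold(pixels):
    """Return the threshold t in [0, 255] that maximizes between-class variance."""
    hist = [0] * 256
    for p in pixels:
        hist[p] += 1
    total = len(pixels)
    total_sum = sum(i * n for i, n in enumerate(hist))
    best_t, best_var = 0, -1.0
    count0, sum0 = 0, 0
    for t in range(256):
        count0 += hist[t]        # pixels with value <= t
        sum0 += t * hist[t]
        count1 = total - count0  # pixels with value > t
        if count0 == 0 or count1 == 0:
            continue
        mean0 = sum0 / count0
        mean1 = (total_sum - sum0) / count1
        var_between = count0 * count1 * (mean0 - mean1) ** 2
        if var_between > best_var:
            best_t, best_var = t, var_between
    return best_t


def binarize_inv(pixels, bin_threshold):
    """Inverse binary threshold: values above the threshold become 0, others 255."""
    if bin_threshold in (0, 255):
        # Same convention as apply_binary: 0 or 255 means "choose automatically".
        bin_threshold = otsu_threshold(pixels)
    return [0 if p > bin_threshold else 255 for p in pixels]


pixels = [10] * 50 + [200] * 50   # a clearly bimodal toy "image"
auto = binarize_inv(pixels, 0)    # Otsu picks a threshold between the two modes
fixed = binarize_inv(pixels, 128)
```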

@@ -1,5 +0,0 @@

import cv2


def apply_canny(img, low_threshold, high_threshold):
    return cv2.Canny(img, low_threshold, high_threshold)

|

||||

@@ -1,133 +0,0 @@

|

||||

import os

|

||||

import cv2

|

||||

import torch

|

||||

|

||||

from modules import devices

|

||||

from modules.modelloader import load_file_from_url

|

||||

from annotator.annotator_path import models_path

|

||||

from transformers import CLIPVisionModelWithProjection, CLIPVisionConfig, CLIPImageProcessor

|

||||

|

||||

|

||||

config_clip_g = {

|

||||

"attention_dropout": 0.0,

|

||||

"dropout": 0.0,

|

||||

"hidden_act": "gelu",

|

||||

"hidden_size": 1664,

|

||||

"image_size": 224,

|

||||

"initializer_factor": 1.0,

|

||||

"initializer_range": 0.02,

|

||||

"intermediate_size": 8192,

|

||||

"layer_norm_eps": 1e-05,

|

||||

"model_type": "clip_vision_model",

|

||||

"num_attention_heads": 16,

|

||||

"num_channels": 3,

|

||||

"num_hidden_layers": 48,

|

||||

"patch_size": 14,

|

||||

"projection_dim": 1280,

|

||||

"torch_dtype": "float32"

|

||||

}

|

||||

|

||||

config_clip_h = {

|

||||

"attention_dropout": 0.0,

|

||||

"dropout": 0.0,

|

||||

"hidden_act": "gelu",

|

||||

"hidden_size": 1280,

|

||||

"image_size": 224,

|

||||

"initializer_factor": 1.0,

|

||||

"initializer_range": 0.02,

|

||||

"intermediate_size": 5120,

|

||||

"layer_norm_eps": 1e-05,

|

||||

"model_type": "clip_vision_model",

|

||||

"num_attention_heads": 16,

|

||||

"num_channels": 3,

|

||||

"num_hidden_layers": 32,

|

||||

"patch_size": 14,

|

||||

"projection_dim": 1024,

|

||||

"torch_dtype": "float32"

|

||||

}

|

||||

|

||||

config_clip_vitl = {

|

||||

"attention_dropout": 0.0,

|

||||

"dropout": 0.0,

|

||||

"hidden_act": "quick_gelu",

|

||||

"hidden_size": 1024,

|

||||

"image_size": 224,

|

||||

"initializer_factor": 1.0,

|

||||

"initializer_range": 0.02,

|

||||

"intermediate_size": 4096,

|

||||

"layer_norm_eps": 1e-05,

|

||||

"model_type": "clip_vision_model",

|

||||

"num_attention_heads": 16,

|

||||

"num_channels": 3,

|

||||

"num_hidden_layers": 24,

|

||||

"patch_size": 14,

|

||||

"projection_dim": 768,

|

||||

"torch_dtype": "float32"

|

||||

}

|

||||

|

||||

configs = {

|

||||

'clip_g': config_clip_g,

|

||||

'clip_h': config_clip_h,

|

||||

'clip_vitl': config_clip_vitl,

|

||||

}

|

||||

|

||||

downloads = {

|

||||

'clip_vitl': 'https://huggingface.co/openai/clip-vit-large-patch14/resolve/main/pytorch_model.bin',

|

||||

'clip_g': 'https://huggingface.co/lllyasviel/Annotators/resolve/main/clip_g.pth',

|

||||

'clip_h': 'https://huggingface.co/h94/IP-Adapter/resolve/main/models/image_encoder/pytorch_model.bin'

|

||||

}

|

||||

|

||||

|

||||

clip_vision_h_uc = os.path.join(os.path.dirname(os.path.abspath(__file__)), 'clip_vision_h_uc.data')

|

||||

clip_vision_h_uc = torch.load(clip_vision_h_uc, map_location=torch.device('cuda' if torch.cuda.is_available() else 'cpu'))['uc']

|

||||

|

||||

clip_vision_vith_uc = os.path.join(os.path.dirname(os.path.abspath(__file__)), 'clip_vision_vith_uc.data')

|

||||

clip_vision_vith_uc = torch.load(clip_vision_vith_uc, map_location=torch.device('cuda' if torch.cuda.is_available() else 'cpu'))['uc']

|

||||

|

||||

|

||||

class ClipVisionDetector:

|

||||

def __init__(self, config, low_vram: bool):

|

||||

assert config in downloads

|

||||

self.download_link = downloads[config]

|

||||

self.model_path = os.path.join(models_path, 'clip_vision')

|

||||

self.file_name = config + '.pth'

|

||||

self.config = configs[config]

|

||||

self.device = (

|

||||

torch.device("cpu") if low_vram else

|

||||

devices.get_device_for("controlnet")

|

||||

)

|

||||

os.makedirs(self.model_path, exist_ok=True)

|

||||

file_path = os.path.join(self.model_path, self.file_name)

|

||||

if not os.path.exists(file_path):

|

||||

load_file_from_url(url=self.download_link, model_dir=self.model_path, file_name=self.file_name)

|

||||

config = CLIPVisionConfig(**self.config)

|

||||

|

||||

self.model = CLIPVisionModelWithProjection(config)

|

||||

self.processor = CLIPImageProcessor(crop_size=224,

|

||||

do_center_crop=True,

|

||||

do_convert_rgb=True,

|

||||

do_normalize=True,

|

||||

do_resize=True,

|

||||

image_mean=[0.48145466, 0.4578275, 0.40821073],

|

||||

image_std=[0.26862954, 0.26130258, 0.27577711],

|

||||

resample=3,

|

||||

size=224)

|

||||

sd = torch.load(file_path, map_location=self.device)

|

||||

self.model.load_state_dict(sd, strict=False)

|

||||

del sd

|

||||

self.model.to(self.device)

|

||||

self.model.eval()

|

||||

|

||||

def unload_model(self):

|

||||

if self.model is not None:

|

||||

self.model.to('meta')

|

||||

|

||||

def __call__(self, input_image):

|

||||

with torch.no_grad():

|

||||

input_image = cv2.resize(input_image, (224, 224), interpolation=cv2.INTER_AREA)

|

||||

feat = self.processor(images=input_image, return_tensors="pt")

|

||||

feat['pixel_values'] = feat['pixel_values'].to(self.device)

|

||||

result = self.model(**feat, output_hidden_states=True)

|

||||

result['hidden_states'] = [v.to(self.device) for v in result['hidden_states']]

|

||||

result = {k: v.to(self.device) if isinstance(v, torch.Tensor) else v for k, v in result.items()}

|

||||

return result

|

||||

Binary file not shown.

Binary file not shown.

@@ -1,20 +0,0 @@

import cv2

def cv2_resize_shortest_edge(image, size):
    h, w = image.shape[:2]
    if h < w:
        new_h = size
        new_w = int(round(w / h * size))
    else:
        new_w = size
        new_h = int(round(h / w * size))
    resized_image = cv2.resize(image, (new_w, new_h), interpolation=cv2.INTER_AREA)
    return resized_image

def apply_color(img, res=512):
    img = cv2_resize_shortest_edge(img, res)
    h, w = img.shape[:2]

    input_img_color = cv2.resize(img, (w//64, h//64), interpolation=cv2.INTER_CUBIC)
    input_img_color = cv2.resize(input_img_color, (w, h), interpolation=cv2.INTER_NEAREST)
    return input_img_color

|

||||
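Note on the size arithmetic in `apply_color` above: the image is first scaled so that its shorter side equals `res`, then shrunk by a factor of 64 and blown back up with nearest-neighbour sampling, which yields a coarse grid of flat color blocks. The sketch below reproduces only the integer size calculations, without OpenCV. `shortest_edge_size` and `color_grid_size` are hypothetical helper names.

```python
def shortest_edge_size(h, w, size):
    """Scale (h, w) so the shorter side equals `size`, keeping the aspect ratio."""
    if h < w:
        return size, int(round(w / h * size))
    return int(round(h / w * size)), size


def color_grid_size(h, w, block=64):
    """Grid (columns, rows) used for the coarse color downsample."""
    return w // block, h // block


new_h, new_w = shortest_edge_size(480, 640, 512)
grid = color_grid_size(new_h, new_w)
print(new_h, new_w, grid)
```

For a 480x640 input this gives a 512x683 working image and a 10x8 color grid. Note that a working side shorter than 64 pixels would produce a zero-sized grid, which the removed code does not guard against.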

@@ -1,57 +0,0 @@

|

||||

import torchvision # Fix issue Unknown builtin op: torchvision::nms

|

||||

import cv2

|

||||

import numpy as np

|

||||

import torch

|

||||

from einops import rearrange

|

||||

from .densepose import DensePoseMaskedColormapResultsVisualizer, _extract_i_from_iuvarr, densepose_chart_predictor_output_to_result_with_confidences

|

||||

from modules import devices

|

||||

from annotator.annotator_path import models_path

|

||||

import os

|

||||

|

||||

N_PART_LABELS = 24

|

||||

result_visualizer = DensePoseMaskedColormapResultsVisualizer(

|

||||

alpha=1,

|

||||

data_extractor=_extract_i_from_iuvarr,

|

||||

segm_extractor=_extract_i_from_iuvarr,

|

||||

val_scale = 255.0 / N_PART_LABELS

|

||||

)

|

||||

remote_torchscript_path = "https://huggingface.co/LayerNorm/DensePose-TorchScript-with-hint-image/resolve/main/densepose_r50_fpn_dl.torchscript"

|

||||

torchscript_model = None

|

||||

model_dir = os.path.join(models_path, "densepose")

|

||||

|

||||

def apply_densepose(input_image, cmap="viridis"):

|

||||

global torchscript_model

|

||||

if torchscript_model is None:

|

||||

model_path = os.path.join(model_dir, "densepose_r50_fpn_dl.torchscript")

|

||||

if not os.path.exists(model_path):

|

||||

from basicsr.utils.download_util import load_file_from_url

|

||||

load_file_from_url(remote_torchscript_path, model_dir=model_dir)

|

||||

torchscript_model = torch.jit.load(model_path, map_location="cpu").to(devices.get_device_for("controlnet")).eval()

|

||||

H, W = input_image.shape[:2]

|

||||

|

||||

hint_image_canvas = np.zeros([H, W], dtype=np.uint8)

|

||||

hint_image_canvas = np.tile(hint_image_canvas[:, :, np.newaxis], [1, 1, 3])

|

||||

input_image = rearrange(torch.from_numpy(input_image).to(devices.get_device_for("controlnet")), 'h w c -> c h w')

|

||||

pred_boxes, coarse_segm, fine_segm, u, v = torchscript_model(input_image)

extractor = densepose_chart_predictor_output_to_result_with_confidences
densepose_results = [extractor(pred_boxes[i:i+1], coarse_segm[i:i+1], fine_segm[i:i+1], u[i:i+1], v[i:i+1]) for i in range(len(pred_boxes))]

|

||||

|

||||

if cmap=="viridis":

|

||||

result_visualizer.mask_visualizer.cmap = cv2.COLORMAP_VIRIDIS

|

||||

hint_image = result_visualizer.visualize(hint_image_canvas, densepose_results)

|

||||

hint_image = cv2.cvtColor(hint_image, cv2.COLOR_BGR2RGB)

|

||||

hint_image[:, :, 0][hint_image[:, :, 0] == 0] = 68

|

||||

hint_image[:, :, 1][hint_image[:, :, 1] == 0] = 1

|

||||

hint_image[:, :, 2][hint_image[:, :, 2] == 0] = 84

|

||||

else:

|

||||

result_visualizer.mask_visualizer.cmap = cv2.COLORMAP_PARULA

|

||||

hint_image = result_visualizer.visualize(hint_image_canvas, densepose_results)

|

||||

hint_image = cv2.cvtColor(hint_image, cv2.COLOR_BGR2RGB)

|

||||

|

||||

return hint_image

|

||||

|

||||

def unload_model():

|

||||

global torchscript_model

|

||||

if torchscript_model is not None:

|

||||

torchscript_model.cpu()

|

||||

@@ -1,347 +0,0 @@

|

||||

import logging
import math
from enum import IntEnum
from typing import List, Tuple, Union

import cv2
import numpy as np
import torch
from torch.nn import functional as F

|

||||

|

||||

Image = np.ndarray

|

||||

Boxes = torch.Tensor

|

||||

ImageSizeType = Tuple[int, int]

|

||||

_RawBoxType = Union[List[float], Tuple[float, ...], torch.Tensor, np.ndarray]

|

||||

IntTupleBox = Tuple[int, int, int, int]

|

||||

|

||||

class BoxMode(IntEnum):

|

||||

"""

|

||||

Enum of different ways to represent a box.

|

||||

"""

|

||||

|

||||

XYXY_ABS = 0

|

||||

"""

|

||||

(x0, y0, x1, y1) in absolute floating points coordinates.

|

||||

The coordinates in range [0, width or height].

|

||||

"""

|

||||

XYWH_ABS = 1

|

||||

"""

|

||||

(x0, y0, w, h) in absolute floating points coordinates.

|

||||

"""

|

||||

XYXY_REL = 2

|

||||

"""

|

||||

Not yet supported!

|

||||

(x0, y0, x1, y1) in range [0, 1]. They are relative to the size of the image.

|

||||

"""

|

||||

XYWH_REL = 3

|

||||

"""

|

||||

Not yet supported!

|

||||

(x0, y0, w, h) in range [0, 1]. They are relative to the size of the image.

|

||||

"""

|

||||

XYWHA_ABS = 4

|

||||

"""

|

||||

(xc, yc, w, h, a) in absolute floating points coordinates.

|

||||

(xc, yc) is the center of the rotated box, and the angle a is in degrees ccw.

|

||||

"""

|

||||

|

||||

@staticmethod

|

||||

def convert(box: _RawBoxType, from_mode: "BoxMode", to_mode: "BoxMode") -> _RawBoxType:

|

||||

"""

|

||||

Args:

|

||||

box: can be a k-tuple, k-list or an Nxk array/tensor, where k = 4 or 5

|

||||

from_mode, to_mode (BoxMode)

|

||||

|

||||

Returns:

|

||||

The converted box of the same type.

|

||||

"""

|

||||

if from_mode == to_mode:

|

||||

return box

|

||||

|

||||

original_type = type(box)

|

||||

is_numpy = isinstance(box, np.ndarray)

|

||||

single_box = isinstance(box, (list, tuple))

|

||||

if single_box:

|

||||

assert len(box) == 4 or len(box) == 5, (

|

||||

"BoxMode.convert takes either a k-tuple/list or an Nxk array/tensor,"

|

||||

" where k == 4 or 5"

|

||||

)

|

||||

arr = torch.tensor(box)[None, :]

|

||||

else:

|

||||

# avoid modifying the input box

|

||||

if is_numpy:

|

||||

arr = torch.from_numpy(np.asarray(box)).clone()

|

||||

else:

|

||||

arr = box.clone()

|

||||

|

||||

assert to_mode not in [BoxMode.XYXY_REL, BoxMode.XYWH_REL] and from_mode not in [

|

||||

BoxMode.XYXY_REL,

|

||||

BoxMode.XYWH_REL,

|

||||

], "Relative mode not yet supported!"

|

||||

|

||||

if from_mode == BoxMode.XYWHA_ABS and to_mode == BoxMode.XYXY_ABS:

|

||||

assert (

|

||||

arr.shape[-1] == 5

|

||||

), "The last dimension of input shape must be 5 for XYWHA format"

|

||||

original_dtype = arr.dtype

|

||||

arr = arr.double()

|

||||

|

||||

w = arr[:, 2]

|

||||

h = arr[:, 3]

|

||||

a = arr[:, 4]

|

||||

c = torch.abs(torch.cos(a * math.pi / 180.0))

|

||||

s = torch.abs(torch.sin(a * math.pi / 180.0))

|

||||

# This basically computes the horizontal bounding rectangle of the rotated box

|

||||

new_w = c * w + s * h

|

||||

new_h = c * h + s * w

|

||||

|

||||

# convert center to top-left corner

|

||||

arr[:, 0] -= new_w / 2.0

|

||||

arr[:, 1] -= new_h / 2.0

|

||||

# bottom-right corner

|

||||

arr[:, 2] = arr[:, 0] + new_w

|

||||

arr[:, 3] = arr[:, 1] + new_h

|

||||

|

||||

arr = arr[:, :4].to(dtype=original_dtype)

|

||||

elif from_mode == BoxMode.XYWH_ABS and to_mode == BoxMode.XYWHA_ABS:

|

||||

original_dtype = arr.dtype

|

||||

arr = arr.double()

|

||||

arr[:, 0] += arr[:, 2] / 2.0

|

||||

arr[:, 1] += arr[:, 3] / 2.0

|

||||

angles = torch.zeros((arr.shape[0], 1), dtype=arr.dtype)

|

||||

arr = torch.cat((arr, angles), axis=1).to(dtype=original_dtype)

|

||||

else:

|

||||

if to_mode == BoxMode.XYXY_ABS and from_mode == BoxMode.XYWH_ABS:

|

||||

arr[:, 2] += arr[:, 0]

|

||||

arr[:, 3] += arr[:, 1]

|

||||

elif from_mode == BoxMode.XYXY_ABS and to_mode == BoxMode.XYWH_ABS:

|

||||

arr[:, 2] -= arr[:, 0]

|

||||

arr[:, 3] -= arr[:, 1]

|

||||

else:

|

||||

raise NotImplementedError(

|

||||

"Conversion from BoxMode {} to {} is not supported yet".format(

|

||||

from_mode, to_mode

|

||||

)

|

||||

)

|

||||

|

||||

if single_box:

|

||||

return original_type(arr.flatten().tolist())

|

||||

if is_numpy:

|

||||

return arr.numpy()

|

||||

else:

|

||||

return arr

|

||||

|

||||

class MatrixVisualizer:

|

||||

"""

|

||||

Base visualizer for matrix data

|

||||

"""

|

||||

|

||||

def __init__(

|

||||

self,

|

||||

inplace=True,

|

||||

cmap=cv2.COLORMAP_PARULA,

|

||||

val_scale=1.0,

|

||||

alpha=0.7,

|

||||

interp_method_matrix=cv2.INTER_LINEAR,

|

||||

interp_method_mask=cv2.INTER_NEAREST,

|

||||

):

|

||||

self.inplace = inplace

|

||||

self.cmap = cmap

|

||||

self.val_scale = val_scale

|

||||

self.alpha = alpha

|

||||

self.interp_method_matrix = interp_method_matrix

|

||||

self.interp_method_mask = interp_method_mask

|

||||

|

||||

def visualize(self, image_bgr, mask, matrix, bbox_xywh):

|

||||

self._check_image(image_bgr)

|

||||

self._check_mask_matrix(mask, matrix)

|

||||

if self.inplace:

|

||||

image_target_bgr = image_bgr

|

||||

else:

|

||||

image_target_bgr = image_bgr * 0

|

||||

x, y, w, h = [int(v) for v in bbox_xywh]

|

||||

if w <= 0 or h <= 0:

|

||||

return image_bgr

|

||||

mask, matrix = self._resize(mask, matrix, w, h)

|

||||

mask_bg = np.tile((mask == 0)[:, :, np.newaxis], [1, 1, 3])

|

||||

matrix_scaled = matrix.astype(np.float32) * self.val_scale

|

||||

_EPSILON = 1e-6

|

||||

if np.any(matrix_scaled > 255 + _EPSILON):

|

||||

logger = logging.getLogger(__name__)

|

||||

logger.warning(

|

||||

f"Matrix has values > {255 + _EPSILON} after " f"scaling, clipping to [0..255]"

|

||||

)

|

||||

matrix_scaled_8u = matrix_scaled.clip(0, 255).astype(np.uint8)

|

||||

matrix_vis = cv2.applyColorMap(matrix_scaled_8u, self.cmap)

|

||||

matrix_vis[mask_bg] = image_target_bgr[y : y + h, x : x + w, :][mask_bg]

|

||||

image_target_bgr[y : y + h, x : x + w, :] = (

|

||||

image_target_bgr[y : y + h, x : x + w, :] * (1.0 - self.alpha) + matrix_vis * self.alpha

|

||||

)

|

||||

return image_target_bgr.astype(np.uint8)

|

||||

|

||||

def _resize(self, mask, matrix, w, h):

|

||||

if (w != mask.shape[1]) or (h != mask.shape[0]):

|

||||

mask = cv2.resize(mask, (w, h), interpolation=self.interp_method_mask)  # third positional argument of cv2.resize is dst, so pass interpolation by keyword

|

||||

if (w != matrix.shape[1]) or (h != matrix.shape[0]):

|

||||

matrix = cv2.resize(matrix, (w, h), interpolation=self.interp_method_matrix)  # third positional argument of cv2.resize is dst, so pass interpolation by keyword

|

||||

return mask, matrix

|

||||

|

||||

def _check_image(self, image_rgb):

|

||||

assert len(image_rgb.shape) == 3

|

||||

assert image_rgb.shape[2] == 3

|

||||

assert image_rgb.dtype == np.uint8

|

||||

|

||||

def _check_mask_matrix(self, mask, matrix):

|

||||

assert len(matrix.shape) == 2

|

||||

assert len(mask.shape) == 2

|

||||

assert mask.dtype == np.uint8

|

||||

|

||||

class DensePoseResultsVisualizer:

|

||||

def visualize(

|

||||

self,

|

||||

image_bgr: Image,

|

||||

results,

|

||||

) -> Image:

|

||||

context = self.create_visualization_context(image_bgr)

|

||||

for i, result in enumerate(results):

|

||||

boxes_xywh, labels, uv = result

|

||||

iuv_array = torch.cat(

|

||||

(labels[None].type(torch.float32), uv * 255.0)

|

||||

).type(torch.uint8)

|

||||

self.visualize_iuv_arr(context, iuv_array.cpu().numpy(), boxes_xywh)

|

||||

image_bgr = self.context_to_image_bgr(context)

|

||||

return image_bgr

|

||||

|

||||

def create_visualization_context(self, image_bgr: Image):

|

||||

return image_bgr

|

||||

|

||||

def visualize_iuv_arr(self, context, iuv_arr: np.ndarray, bbox_xywh) -> None:

|

||||

pass

|

||||

|

||||

def context_to_image_bgr(self, context):

|

||||

return context

|

||||

|

||||

def get_image_bgr_from_context(self, context):

|

||||

return context

|

||||

|

||||

class DensePoseMaskedColormapResultsVisualizer(DensePoseResultsVisualizer):

|

||||

def __init__(

|

||||

self,

|

||||

data_extractor,

|

||||

segm_extractor,

|

||||

inplace=True,

|

||||

cmap=cv2.COLORMAP_PARULA,

|

||||

alpha=0.7,

|

||||

val_scale=1.0,

|

||||

**kwargs,

|

||||

):

|

||||

self.mask_visualizer = MatrixVisualizer(

|

||||

inplace=inplace, cmap=cmap, val_scale=val_scale, alpha=alpha

|

||||

)

|

||||

self.data_extractor = data_extractor

|

||||

self.segm_extractor = segm_extractor

|

||||

|

||||

def context_to_image_bgr(self, context):

|

||||

return context

|

||||

|

||||

def visualize_iuv_arr(self, context, iuv_arr: np.ndarray, bbox_xywh) -> None:

|

||||

image_bgr = self.get_image_bgr_from_context(context)

|

||||

matrix = self.data_extractor(iuv_arr)

|

||||

segm = self.segm_extractor(iuv_arr)

|

||||

mask = np.zeros(matrix.shape, dtype=np.uint8)

|

||||

mask[segm > 0] = 1

|

||||

image_bgr = self.mask_visualizer.visualize(image_bgr, mask, matrix, bbox_xywh)

|

||||

|

||||

|

||||

def _extract_i_from_iuvarr(iuv_arr):

|

||||

return iuv_arr[0, :, :]

|

||||

|

||||

|

||||

def _extract_u_from_iuvarr(iuv_arr):

|

||||

return iuv_arr[1, :, :]

|

||||

|

||||

|

||||

def _extract_v_from_iuvarr(iuv_arr):

|

||||

return iuv_arr[2, :, :]

|

||||

|

||||

def make_int_box(box: torch.Tensor) -> IntTupleBox:

|

||||

int_box = [0, 0, 0, 0]

|

||||

int_box[0], int_box[1], int_box[2], int_box[3] = tuple(box.long().tolist())

|

||||

return int_box[0], int_box[1], int_box[2], int_box[3]

|

||||

|

||||

def densepose_chart_predictor_output_to_result_with_confidences(

|

||||

boxes: Boxes,

|

||||

coarse_segm,

|

||||

fine_segm,

|

||||

u, v

|

||||

|

||||

):

|

||||

boxes_xyxy_abs = boxes.clone()

|

||||

boxes_xywh_abs = BoxMode.convert(boxes_xyxy_abs, BoxMode.XYXY_ABS, BoxMode.XYWH_ABS)

|

||||

box_xywh = make_int_box(boxes_xywh_abs[0])

|

||||

|

||||

labels = resample_fine_and_coarse_segm_tensors_to_bbox(fine_segm, coarse_segm, box_xywh).squeeze(0)

|

||||

uv = resample_uv_tensors_to_bbox(u, v, labels, box_xywh)

|

||||

confidences = []

|

||||

return box_xywh, labels, uv

|

||||

|

||||

def resample_fine_and_coarse_segm_tensors_to_bbox(

|

||||

fine_segm: torch.Tensor, coarse_segm: torch.Tensor, box_xywh_abs: IntTupleBox

|

||||

):

|

||||

"""

|

||||

Resample fine and coarse segmentation tensors to the given

|

||||

bounding box and derive labels for each pixel of the bounding box

|

||||

|

||||

Args:

|

||||

fine_segm: float tensor of shape [1, C, Hout, Wout]

|

||||

coarse_segm: float tensor of shape [1, K, Hout, Wout]

|

||||

box_xywh_abs (tuple of 4 int): bounding box given by its upper-left

|

||||

corner coordinates, width (W) and height (H)

|

||||

Return:

|

||||

Labels for each pixel of the bounding box, a long tensor of size [1, H, W]

|

||||

"""

|

||||

x, y, w, h = box_xywh_abs

|

||||

w = max(int(w), 1)

|

||||

h = max(int(h), 1)

|

||||

# coarse segmentation

|

||||

coarse_segm_bbox = F.interpolate(

|

||||

coarse_segm,

|

||||

(h, w),

|

||||

mode="bilinear",

|

||||

align_corners=False,

|

||||

).argmax(dim=1)

|

||||

# combined coarse and fine segmentation

|

||||

labels = (

|

||||

F.interpolate(fine_segm, (h, w), mode="bilinear", align_corners=False).argmax(dim=1)

|

||||

* (coarse_segm_bbox > 0).long()

|

||||

)

|

||||

return labels

|

||||

|

||||

def resample_uv_tensors_to_bbox(

|

||||

u: torch.Tensor,

|

||||

v: torch.Tensor,

|

||||

labels: torch.Tensor,

|

||||

box_xywh_abs: IntTupleBox,

|

||||

) -> torch.Tensor:

|

||||

"""

|

||||

Resamples U and V coordinate estimates for the given bounding box

|

||||

|

||||

Args:

|

||||

u (tensor [1, C, H, W] of float): U coordinates

|

||||

v (tensor [1, C, H, W] of float): V coordinates

|

||||

labels (tensor [H, W] of long): labels obtained by resampling segmentation

|

||||

outputs for the given bounding box

|

||||

box_xywh_abs (tuple of 4 int): bounding box that corresponds to predictor outputs

|

||||

Return:

|

||||

Resampled U and V coordinates - a tensor [2, H, W] of float

|

||||

"""

|

||||

x, y, w, h = box_xywh_abs

|

||||

w = max(int(w), 1)

|

||||

h = max(int(h), 1)

|

||||

u_bbox = F.interpolate(u, (h, w), mode="bilinear", align_corners=False)

|

||||

v_bbox = F.interpolate(v, (h, w), mode="bilinear", align_corners=False)

|

||||

uv = torch.zeros([2, h, w], dtype=torch.float32, device=u.device)

|

||||

for part_id in range(1, u_bbox.size(1)):

|

||||

uv[0][labels == part_id] = u_bbox[0, part_id][labels == part_id]

|

||||

uv[1][labels == part_id] = v_bbox[0, part_id][labels == part_id]

|

||||

return uv

|

||||

|

||||
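Note on `BoxMode.convert` in the removed file above: the supported conversions are XYXY to and from XYWH, XYWH to XYWHA, and XYWHA to XYXY, where the last one takes the axis-aligned bounding rectangle of the rotated box. The sketch below restates three of these for a single box using only the standard library. The function names are hypothetical and the tensor/array handling of the original is left out.

```python
import math


def xyxy_to_xywh(box):
    """(x0, y0, x1, y1) -> (x0, y0, w, h)."""
    x0, y0, x1, y1 = box
    return (x0, y0, x1 - x0, y1 - y0)


def xywh_to_xyxy(box):
    """(x0, y0, w, h) -> (x0, y0, x1, y1)."""
    x0, y0, w, h = box
    return (x0, y0, x0 + w, y0 + h)


def xywha_to_xyxy(box):
    """Axis-aligned bounding rectangle of a rotated box (angle in degrees)."""
    xc, yc, w, h, a = box
    c = abs(math.cos(math.radians(a)))
    s = abs(math.sin(math.radians(a)))
    # Width and height of the horizontal rectangle enclosing the rotated box.
    new_w = c * w + s * h
    new_h = c * h + s * w
    # Convert the center to the top-left corner, then add the extents.
    x0 = xc - new_w / 2.0
    y0 = yc - new_h / 2.0
    return (x0, y0, x0 + new_w, y0 + new_h)


xywh = xyxy_to_xywh((10, 20, 50, 80))
rotated = xywha_to_xyxy((0, 0, 4, 2, 90))
print(xywh, rotated)
```

A 4x2 box rotated by 90 degrees around the origin ends up enclosed by a rectangle about 2 wide and 4 tall, up to floating-point error in `cos(90°)`.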

@@ -1,79 +0,0 @@

|

||||

import os

|

||||

import torch

|

||||

import cv2

|

||||

import numpy as np

|

||||

import torch.nn.functional as F

|

||||

from torchvision.transforms import Compose

|

||||

|

||||

from depth_anything.dpt import DPT_DINOv2

|

||||

from depth_anything.util.transform import Resize, NormalizeImage, PrepareForNet

|

||||

from .util import load_model

|

||||

from .annotator_path import models_path

|

||||

|

||||

|

||||

transform = Compose(

|

||||

[

|

||||

Resize(

|

||||

width=518,

|

||||

height=518,

|

||||

resize_target=False,

|

||||

keep_aspect_ratio=True,

|

||||

ensure_multiple_of=14,

|

||||

resize_method="lower_bound",

|

||||

image_interpolation_method=cv2.INTER_CUBIC,

|

||||

),

|

||||

NormalizeImage(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225]),

|

||||

PrepareForNet(),

|

||||

]

|

||||

)

|

||||

|

||||

|

||||

class DepthAnythingDetector:

|

||||

"""https://github.com/LiheYoung/Depth-Anything"""

|

||||

|

||||

model_dir = os.path.join(models_path, "depth_anything")

|

||||

|

||||

def __init__(self, device: torch.device):

|

||||

self.device = device

|

||||

self.model = (

|

||||

DPT_DINOv2(

|

||||

encoder="vitl",

|

||||

features=256,

|

||||

out_channels=[256, 512, 1024, 1024],

|

||||

localhub=False,

|

||||

)

|

||||

.to(device)

|

||||

.eval()

|

||||

)

|

||||

remote_url = os.environ.get(

|

||||

"CONTROLNET_DEPTH_ANYTHING_MODEL_URL",

|

||||

"https://huggingface.co/spaces/LiheYoung/Depth-Anything/resolve/main/checkpoints/depth_anything_vitl14.pth",

|

||||

)

|

||||

model_path = load_model(

|

||||

"depth_anything_vitl14.pth", remote_url=remote_url, model_dir=self.model_dir

|

||||

)

|

||||

self.model.load_state_dict(torch.load(model_path, map_location="cpu"))  # load on CPU first so the checkpoint also loads on machines without CUDA

|

||||

|

||||

def __call__(self, image: np.ndarray, colored: bool = True) -> np.ndarray:

|

||||

self.model.to(self.device)

|

||||

h, w = image.shape[:2]

|

||||

|

||||

image = cv2.cvtColor(image, cv2.COLOR_BGR2RGB) / 255.0

|

||||

image = transform({"image": image})["image"]

|

||||

image = torch.from_numpy(image).unsqueeze(0).to(self.device)

|

||||

@torch.no_grad()

|

||||

def predict_depth(model, image):

|

||||

return model(image)

|

||||

depth = predict_depth(self.model, image)

|

||||

depth = F.interpolate(

|

||||

depth[None], (h, w), mode="bilinear", align_corners=False

|

||||

)[0, 0]

|

||||

depth = (depth - depth.min()) / (depth.max() - depth.min()) * 255.0

|

||||

depth = depth.cpu().numpy().astype(np.uint8)

|